- Community Home

- >

- Storage

- >

- Around the Storage Block

- >

- An explanation of IOPS and latency

Categories

Company

Local Language

Forums

Discussions

Forums

- Data Protection and Retention

- Entry Storage Systems

- Legacy

- Midrange and Enterprise Storage

- Storage Networking

- HPE Nimble Storage

Discussions

Discussions

Discussions

Forums

Discussions

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

- BladeSystem Infrastructure and Application Solutions

- Appliance Servers

- Alpha Servers

- BackOffice Products

- Internet Products

- HPE 9000 and HPE e3000 Servers

- Networking

- Netservers

- Secure OS Software for Linux

- Server Management (Insight Manager 7)

- Windows Server 2003

- Operating System - Tru64 Unix

- ProLiant Deployment and Provisioning

- Linux-Based Community / Regional

- Microsoft System Center Integration

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Discussion Boards

Community

Resources

Forums

Blogs

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Receive email notifications

- Printer Friendly Page

- Report Inappropriate Content

An explanation of IOPS and latency

(cross-posted at my recoverymonkey.org blog)

I understand this extremely long post is redundant for seasoned storage performance pros – however, these subjects come up so frequently, that I felt compelled to write something. Plus, even the seasoned pros don’t seem to get it sometimes…

IOPS: Possibly the most common measure of storage system performance.

IOPS means Input/Output (operations) Per Second. Seems straightforward. A measure of work vs time (not the same as MB/s, which is actually easier to understand – simply, MegaBytes per Second).

How many of you have seen storage vendors extolling the virtues of their storage by using large IOPS numbers to illustrate a performance advantage?

How many of you decide on storage purchases and base your decisions on those numbers?

However: how many times has a vendor actually specified what they mean when they utter “IOPS”?

For the impatient, I’ll say this: IOPS numbers by themselves are meaningless and should be treated as such. Without additional metrics such as latency, read vs write % and I/O size (to name a few), an IOPS number is useless.

And now, let’s elaborate… (and, as a refresher regarding the perils of ignoring such things when it comes to sizing, you can always go back here).

One hundred billion IOPS…

I've competed with various vendors that promise customers high IOPS numbers.

On a small system with under 100 standard 15K RPM spinning disks, a certain three-letter vendor was claiming half a million IOPS. Another, a million. Of course, my customer was impressed, since that was far, far higher than the number I was providing. But what’s reality?

Here, I’ll do one right now: a single SSD can do a million IOPS. Maybe even two million.

Go ahead, prove otherwise.

It’s impossible, since there is no standard way to measure IOPS, and the official definition of IOPS (operations per second) does not specify certain extremely important parameters. By doing any sort of I/O test on the box, you are automatically imposing your benchmark’s definition of IOPS for that specific test.

What’s an operation? What kind of operations are there?

It can get complicated.

An I/O operation is simply some kind of work the disk subsystem has to do at the request of a host and/or some internal process. Typically a read or a write, with sub-categories (for instance read, re-read, write, re-write, random, sequential) and a size.

Depending on the operation, its size could range anywhere from bytes to kilobytes to several megabytes.

Now consider the following most assuredly non-comprehensive list of operation types:

- A random 4KB read

- A random 4KB read followed by more 4KB reads of blocks in logical adjacency to the first

- A 512-byte metadata lookup and subsequent update

- A 256KB read followed by more 256KB reads of blocks in logical sequence to the first

- A 64MB read

- A series of random 8KB writes followed by 256KB sequential reads of the same data that was just written

- Random 8KB overwrites

- Random 32KB reads and writes

- Combinations of the above in a single thread

- Combinations of the above in multiple threads

…this could go on. As you can see, there’s a large variety of I/O types, and true multi-host I/O is almost never of a single type. Virtualization further mixes up the I/O patterns, too.

Now here comes the biggest point (if you can remember one thing from this post, this should be it): No storage system can do the same maximum number of IOPS irrespective of I/O type, latency and size.

Let’s re-iterate: It is impossible for a storage system to sustain the same peak IOPS number when presented with different I/O types and latency requirements.

Another way to see the limitation…

A gross oversimplification might help prove the point that the type and size of operation you do matters when it comes to IOPS. Meaning that a system that can do a million 512-byte IOPS can’t necessarily do a million 256K IOPS.

Imagine a bucket, or a shotshell, or whatever container you wish. Imagine in this container you have either:

- A few large spheres or…

- Many tiny spheres

The bucket ultimately contains about the same volume of stuff either way, and the volume is the major limiting factor. Clearly, you can’t completely fill that same container with the same number of large spheres as you can with small spheres.

They kinda look like shotshells, don’t they?

Now imagine the little spheres being forcibly evacuated rapildy out of one end… which takes us to…

Latency matters

So, we’ve established that not all IOPS are the same – but what is of far more significance is latency as it relates to the IOPS.

If you want to read no further – never accept an IOPS number that doesn’t come with latency figures, in addition to the I/O sizes and read/write percentages.

Simply speaking, latency is a measure of how long it takes for a single I/O request to happen from the application’s viewpoint.

In general, when it comes to data storage, high latency is just about the least desirable trait, right up there with poor reliability.

Databases especially are very sensitive with respect to latency – DBs make several kinds of requests that need to be acknowledged quickly (ideally in under 10ms, and writes especially in well under 5ms). In particular, the redo log writes need to be acknowledged almost instantaneously for a heavy-write DB – under 1ms is preferable.

High sustained latency in a mission-critical app can have a nasty compounding effect – if a DB can’t write to its redo log fast enough for a single write, everything stalls until that write can complete, then moves on. However, if it constantly can’t write to its redo log fast enough, the user experience will be unacceptable as requests get piled up – the DB may be a back-end to a very busy web front-end for doing Internet sales, for example.

A delay in the DB will make the web front-end also delay, and the company could well lose thousands of customers and millions of dollars while the delay is happening. Some companies could also face penalties if they cannot meet certain SLAs.

On the other hand, applications doing sequential, throughput-driven I/O (like backup or archival) are nowhere near as sensitive to latency (and typically don’t need high IOPS anyway, but rather need high MB/s).

It follows that not all I/O sizes and I/O operations are subject to the same latency requirements.

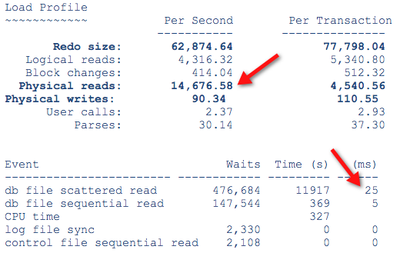

Here’s an example from an Oracle DB – a system doing about 15,000 IOPS at 25ms latency. Doing more IOPS would be nice but the DB needs the latency to go a lot lower in order to see significantly improved performance – notice the increased IO waits and latency, and that the top event causing the system to wait is I/O:

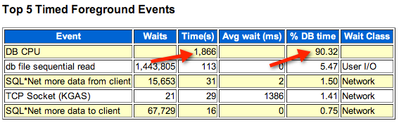

Now compare to this system (different format for this data but you’ll get the point):

Notice that, in this case, the system is waiting primarily for CPU, not storage.

A significant amount of I/O wait is a good way to determine if storage is an issue (there can be other latencies outside the storage of course – CPU and network are a couple of usual suspects). Even with good latencies, if you see a lot of I/O waits it means that the application would like faster speeds from the storage system. But this post is not meant to be a DB sizing class.

Here’s the important bit that I think is confusing a lot of people and is allowing vendors to get away with unrealistic performance numbers:

It is possible (but not desirable) to have high IOPS and high latency simultaneously.

How? Here’s a, once again, oversimplified example:Imagine 2 different cars, both with a top speed of 150mph.

- Car #1 takes 50 seconds to reach 150mph

- Car #2 takes 200 seconds to reach 150mph

The maximum speed of the two cars is identical.

Does anyone have any doubt as to which car is actually faster? Car #1 indeed feels about 4 times faster than Car #2, even though they both hit the exact same top speed in the end.

Let’s take it an important step further, keeping the car analogy since it’s very relatable to most people (but mostly because I like cars):

- Car #1 has a maximum speed of 120mph and takes 30 seconds to hit 120mph

- Car #2 has a maximum speed of 180mph, takes 50 seconds to hit 120mph, and takes 200 seconds to hit 180mph

In this example, Car #2 actually has a much higher top speed than Car #1. Many people, looking at just the top speed, might conclude it’s the faster car.

However, Car #1 reaches its top speed (120mph) far faster than Car # 2 reaches that same top speed of Car #1 (120mph).

Car #2 continues to accelerate (and, eventually, overtakes Car #1), but takes an inordinately long amount of time to hit its top speed of 180mph.

Again – which car do you think would feel faster to its driver?

You know – the feeling of pushing the gas pedal and the car immediately responding with extra speed that can be felt? Without a large delay in that happening?

Which car would get more real-world chances of reaching high speeds in a timely fashion? For instance, overtaking someone quickly and safely?

Which is why car-specific workload benchmarks like the quarter mile were devised: How many seconds does it take to traverse a quarter mile (the workload), and what is the speed once the quarter mile has been reached?

(I fully expect fellow geeks to break out the slide rules and try to prove the numbers wrong, probably factoring in gearing, wind and rolling resistance – it’s just an example to illustrate the difference between throughput and latency, I had no specific cars in mind… really).

And, finally, some more storage-related examples…

Some vendor claims… and the fine print explaining the more plausible scenario beneath each claim:

- “Mr. Customer, our box can do a million IOPS!”…512-byte ones, sequentially out of cache.

- “Mr. Customer, our box can do a quarter million random 4K IOPS – and not from cache!”…at 50ms latency.

- “Mr. Customer, our box can do a quarter million 8K IOPS, not from cache, at 20ms latency!”…but only if you have 5000 threads going in parallel.

- “Mr. Customer, our box can do a hundred thousand 4K IOPS, at under 20ms latency!”…but only if you have a single host hitting the storage so the array doesn’t get confused by different I/O from other hosts.

Notice how none of these claims are talking about writes or working set sizes… or the configuration required to support the claim.

What to look for when someone is making a grandiose IOPS claim

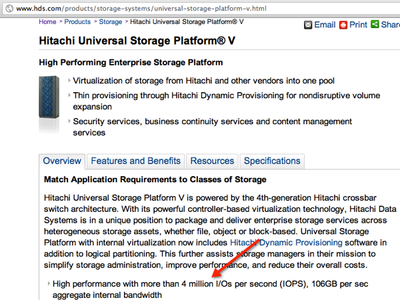

Audited validation and a specific workload to be measured against (that includes latency as a metric) both help. I’ll pick on HDS since they habitually show baffling numbers in marketing literature.

For example, from their website: (link may or may not be live when you read this, hence the screenshot)

It’s pretty much the textbook case of unqualified IOPS claims. No information as to the I/O size, reads vs writes, sequential or random, what type of medium the IOPS are coming from, or, of course, the latency…

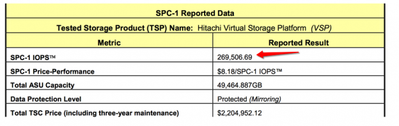

However, that very same box almost makes 270,000 SPC-1 IOPS with good latency in the audited SPC-1 benchmark:

Last I checked, 270,000 was almost 15 times less than 4,000,000. Don’t get me wrong, 260,000 low-latency IOPS is a great SPC-1 result, but it’s not 4 million SPC-1 IOPS.

Where are the IOPS coming from?

So, when you hear those big numbers, where are they really coming from? Are they just ficticious? Not necessarily. So far, here are just a few of the ways I’ve seen vendors claim IOPS prowess:

- What the controller will theoretically do given unlimited back-end resources.

- What the controller will do purely from cache.

- What a controller that can compress data will do with all zero data.

- What the controller will do assuming the data is at the FC port buffers (“huh?” is the right reaction, only one three-letter vendor ever did this so at least it’s not a widespread practice).

- What the controller will do given the configuration actually being proposed driving a very specific application workload with a specified latency threshold and real data.

The figures provided by the approaches above are all real, in the context of how the test was done by each vendor and how they define “IOPS”. However, of the (non-exhaustive) options above, which one do you think is the more realistic when it comes to dealing with real application data?

What if someone proves to you a big IOPS number at a PoC or demo?

Proof-of-Concept engagements or demos are great ways to prove performance claims.

But, as with everything, garbage in – garbage out.

If someone shows you IOmeter doing baffling IOPS, use the information in this post to help you at least find out what the exact configuration of the benchmark is. What’s the block size, is it random, sequential, a mix, how many hosts are doing I/O, the concurrency, etc. Is the speed all coming out of RAM?

Typically, things like IOmeter can be a good demo but that doesn’t mean the combined I/O of all your applications’ performance follows the same parameters, nor does it mean the few servers hitting the storage at the demo are representative of your server farm with 100x the number of servers. Testing with as close to your application workload as possible is preferred. Don’t assume you can extrapolate – systems don’t always scale linearly.

Factors affecting storage system performance

In real life, you typically won’t have a single host pumping I/O into a storage array. More likely, you will have many hosts doing I/O in parallel. Here are just some of the factors that can affect storage system performance in a major way:

- Not enough concurrency/threads.

- Controller, CPU, memory, interlink counts, speeds and types.

- A lot of random writes. This is the big one, since, depending on RAID level, the back-end I/O overhead could be anywhere from 2 I/Os (RAID 10) to 6 I/Os (RAID6) per write, unless some advanced form of write management is employed.

- Uniform latency requirements – certain systems will exhibit latency spikes from time to time, even if they’re SSD-based (sometimes especially if they’re SSD-based).

- A lot of writes to the same logical disk area. This, even with autotiering systems or giant caches, still results in tremendous load on a rather limited set of disks (whether they be spinning or SSD).

- The storage type used and the amount – different types of media have very different performance characteristics, even within the same family (the performance between SSDs can vary wildly, for example).

- CDP tools for local protection – sometimes this can result in 3x the I/O to the back-end for the writes.

- Copy on First Write snapshot algorithms with heavy write workloads.

- Misalignment.

- Heavy use of space efficiency techniques such as compression and deduplication.

- Heavy reliance on autotiering (resulting in the use of too few disks and/or too many slow disks in an attempt to save costs).

- Insufficient cache with respect to the working set coupled with inefficient cache algorithms, too-large cache block size and poor utilization.

- Shallow port queue depths.

- Inability to properly deal with different kinds of I/O from more than a few hosts.

- Inability to recognize per-stream patterns (for example, multiple parallel table scans in a Database).

- Inability to intelligently prefetch data.

What you can do to get a solution that will work…

You should work with your storage vendor to figure out, at a minimum, the items in the following list, and, after you’ve done so, go through the sizing with them and see the sizing tools being used in front of you. (You can also refer to this guide).

- Applications being used and size of each (and, ideally, performance logs from each app)

- Number of servers

- Desired backup and replication methods

- Random read and write I/O size per app

- Sequential read and write I/O size per app

- The percentages of read vs write for each app and each I/O type

- The working set (amount of data “touched”) per app

- Whether features such as thin provisioning, pools, CDP, autotiering, compression, dedupe, snapshots and replication will be utilized, and what overhead they add to the performance

- The RAID type (R10 has an impact of 2 I/Os per random write, R5 4 I/Os, R6 6 I/Os – is that being factored?)

- The impact of all those things to the overall headroom and performance of the array.

If your vendor is unwilling or unable to do this type of work, or, especially, if they tell you it doesn’t matter and that their box will deliver umpteen billion IOPS – well, at least now you know better.

D

- Back to Blog

- Newer Article

- Older Article

- haniff on: High-performance, low-latency networks for edge an...

- StorageExperts on: Configure vSphere Metro Storage Cluster with HPE N...

- haniff on: Need for speed and efficiency from high performanc...

- haniff on: Efficient networking for HPE’s Alletra cloud-nativ...

- CalvinZito on: What’s new in HPE SimpliVity 4.1.0

- MichaelMattsson on: HPE CSI Driver for Kubernetes v1.4.0 with expanded...

- StorageExperts on: HPE Nimble Storage dHCI Intelligent 1-Click Update...

- ORielly on: Power Loss at the Edge? Protect Your Data with New...

- viraj h on: HPE Primera Storage celebrates one year!

- Ron Dharma on: Introducing Language Bindings for HPE SimpliVity R...