Driving data center transformation and innovation for AI, Machine Learning and Data Analytics

Learn what makes HPE StoreFabric M-Series Ethernet switches the best networking solution on the market today for high-performance workloads in your state-of-the-art data center.

Analysts agree that within the next two years, 25/50 and 100Gb Ethernet are expected to surpass all other Ethernet speeds as the most deployed Ethernet bandwidth. This trend is being driven by mounting demands for host-side bandwidth as data center densities increase and pressure grows for switching capacities to keep pace. Beyond bandwidth, 25GbE technology is helping to drive better cost efficiency in capital and operating expenses compared to 40Gb, while enabling greater reliability and more efficient power usage for optimal data center efficiency and scalability.

Why we're bandwidth hungry

If we look at what’s driving the need for increased bandwidth, we’ll find growing densities within virtualized servers, which have changed the nature of both north-south and east-west traffic. A massive shift in machine-to-machine traffic has resulted in a major increase in required network bandwidth. The arrival of faster storage in the form of solid-state devices such as Flash and NVMe is having a similar effect. We find the need for increased bandwidth all around us as our lives become more deeply intertwined with technology each year. Leading this charge are Artificial Intelligence (AI) workloads, which involve complex computations and require fast, efficient delivery of vast amounts of data. Deploying networks at speeds of up to 100Gb/s helps reduce the necessary training times. Lightweight protocols such as Remote Direct Memory Access (RDMA) can speed up the exchange of data between computing nodes and streamline communication and delivery.
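To make the effect of link speed concrete, here is a minimal back-of-the-envelope sketch. It assumes a single link running at its full nominal rate with no protocol overhead, congestion, or storage limits, so real transfers will be slower. The function name and the 1 TB data set size are illustrative assumptions, not figures from HPE.

```python
def ideal_transfer_seconds(data_bytes: float, link_gbps: float) -> float:
    """Best-case time to move data_bytes over one link of link_gbps (10^9 bits/s).

    Ignores protocol overhead, congestion, and endpoint limits (an assumption).
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    return data_bytes * 8 / (link_gbps * 1e9)


# Hypothetical 1 TB (10^12 bytes) training data set, chosen only for illustration.
DATASET_BYTES = 1e12

for gbps in (10, 25, 50, 100):
    seconds = ideal_transfer_seconds(DATASET_BYTES, gbps)
    print(f"{gbps:>3} Gb/s: {seconds:6.0f} s")
```

Under these idealized assumptions, the same 1 TB data set needs about 800 seconds at 10 Gb/s and about 80 seconds at 100 Gb/s.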

The rapid increase in the performance of graphics hardware, coupled with recent improvements in its programmability, has made graphics accelerators a compelling platform for computationally demanding tasks in a wide variety of application domains. GPU-based clusters are used to perform compute-intensive tasks such as finite element computations and computational fluid dynamics. Because GPUs provide high core counts and floating-point capability, high-speed networking such as the M-Series is required to connect the GPU platforms and provide the throughput and low latency that GPU-to-GPU communications require.

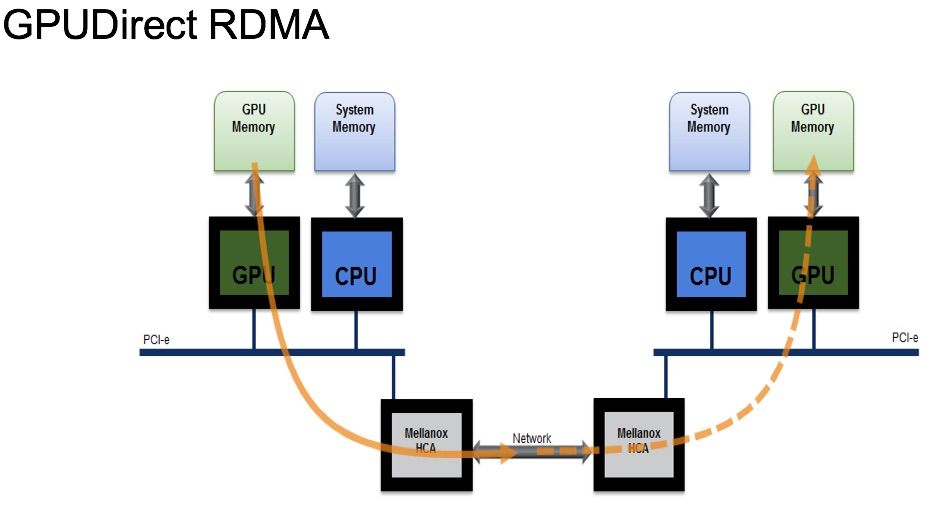

Figure 1. GPUDirect Remote Direct Memory Access (RDMA)

The main performance issue with deploying clusters of multi-GPU nodes involves the interaction between the GPUs, or the GPU-to-GPU communication model. Prior to GPUDirect technology, any communication between GPUs had to involve the host CPU and required buffer copies. CPU involvement in GPU communications and the need for a buffer copy created bottlenecks in the system, slowing data delivery between the GPUs. GPUDirect technology enables GPUs to communicate faster by eliminating both the CPU involvement in the communication loop and the buffer copies. The result is increased overall system performance and efficiency, with GPU-to-GPU communication time reduced by 30%. This allows for scaling out to tens, hundreds, or even thousands of GPUs across hundreds of nodes. For this type of scaling to work flawlessly, it requires a network with little to no packet loss, the lowest latency, and the best congestion management. The M-Series provides just that.
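How much a 30% cut in communication time helps overall depends on how much of each job is spent communicating. The sketch below is a rough Amdahl-style estimate, not a measured result. It assumes compute and communication do not overlap, and the `comm_fraction` values are hypothetical.

```python
def step_speedup(comm_fraction: float, comm_reduction: float = 0.30) -> float:
    """Estimated overall speedup when only communication time shrinks.

    comm_fraction:  share of total time spent on GPU-to-GPU communication (0..1).
    comm_reduction: fractional reduction in communication time (0.30 is the
                    figure quoted in the text). Assumes no compute/communication
                    overlap, which is a simplification.
    """
    if not 0.0 <= comm_fraction <= 1.0:
        raise ValueError("comm_fraction must be between 0 and 1")
    if not 0.0 <= comm_reduction < 1.0:
        raise ValueError("comm_reduction must be in [0, 1)")
    new_time = (1.0 - comm_fraction) + comm_fraction * (1.0 - comm_reduction)
    return 1.0 / new_time


# Hypothetical communication shares, for illustration only.
for fraction in (0.1, 0.25, 0.5):
    print(f"comm share {fraction:.0%}: speedup x{step_speedup(fraction):.2f}")
```

For example, if half of each training step were communication, this model gives an overall speedup of roughly 1.18x. A job that is almost entirely compute would see very little change.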

The HPE StoreFabric M-Series Ethernet switches make a compelling case for being the best and most efficient networking solution for high-performance workloads. Artificial Intelligence, Machine Learning (ML), Data Analytics (DA) and other demanding data-driven workloads mandate exceptional computational capabilities from servers and the network. Today’s fast multi-core processors provide a solution by accelerating computational processing. In turn, this places a huge demand on the network infrastructure.

Let’s walk through a checklist of what makes the M-Series Ethernet switches the best networking solution on the market today for any high-performance workload—and you’ll see why the M-Series is poised to be a great platform for moving AI/ML data sets:

- Highest bandwidth: 25/50 and 100Gb Ethernet speeds

- Zero packet loss: reliable and predictable performance

- Highest efficiency: built-in accelerators for storage, virtualized, and containerized environments

- Lowest latency: 1.4x better than the competition, with true cut-through latency

- Lowest power consumption: 1.3x better than the competition

- Dynamically shared, flexible buffering: adapts dynamically to absorb micro-bursts and avoid congestion

- Advanced load balancing: improved scale and availability

- Predictable performance: 3.2Tb/s switching capacity offers wire-speed performance

- Low cost: superior value

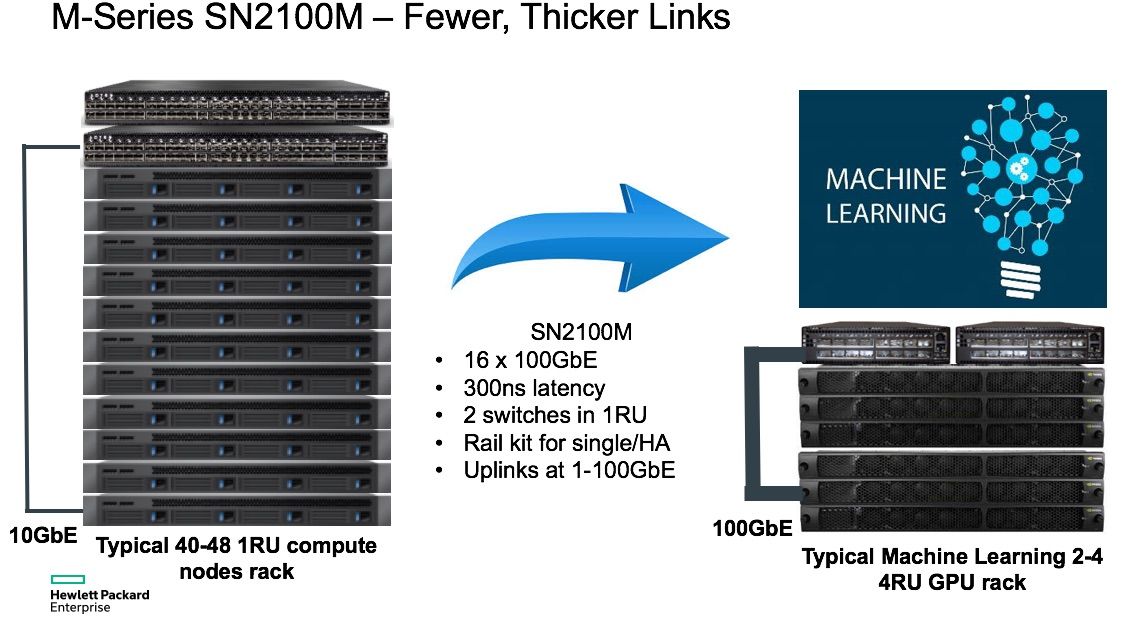

The unique half-width switch configuration and breakout (splitter) cables offered by the M-Series further extend its feature set and functionality. A breakout cable enables a single 100Gb port on a switch to be split into two 50Gb or four 25Gb ports, linking one switch port to up to four adapter cards in servers, storage, or other subsystems. At a reach of 3 meters, these cables accommodate connections within any rack.

Combine this with half-width form factors and you get the ideal combination of performance, rack efficiency, and flexibility for today’s storage, hyperconverged, and machine learning environments. Many of these environments don’t require 48 ports in a single rack, especially at 25/100GbE speeds. Typically, for redundancy, two 48+4-port switches are installed at the top of rack (TOR), with more than half of each switch’s ports unused and two rack units (U) of space taken up. The StoreFabric M-Series 2100, with its half-width design, delivers high availability and increased density by allowing side-by-side placement of two switches in a single U slot of a 19-inch rack. Breakout cables can be used to split ports at up to a 1-to-4 ratio for a port density of up to 64 10/25/50GbE ports in a single rack U. This provides high port density and allows for higher capacity and efficiency, simplifying scale-out environments and reducing total cost of ownership, with an easy migration path to next-gen networking.
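The breakout arithmetic can be sketched in a few lines. This is an illustration only: the function name is made up, and the count of 16 physical 100Gb ports is an assumption picked so that a 1-to-4 split lines up with the 64-port figure above. Check the product specification for actual port counts and supported split modes.

```python
def breakout_ports(physical_ports: int, split: int, port_gbps: int = 100):
    """Return (logical port count, per-port speed in Gb/s) after breakout.

    split is the breakout ratio per physical port: 1 (none), 2, or 4.
    """
    if split not in (1, 2, 4):
        raise ValueError("split must be 1, 2, or 4")
    if physical_ports < 0:
        raise ValueError("physical_ports must be non-negative")
    return physical_ports * split, port_gbps / split


# Assumed example: 16 physical 100Gb ports (hypothetical count, see lead-in).
ASSUMED_PHYSICAL_PORTS = 16

for ratio in (1, 2, 4):
    count, speed = breakout_ports(ASSUMED_PHYSICAL_PORTS, ratio)
    print(f"1-to-{ratio} split: {count} ports at {speed:g} Gb/s each")
```

With the assumed 16 physical ports, a 1-to-4 split yields 64 logical ports at 25 Gb/s each, and a 1-to-2 split yields 32 ports at 50 Gb/s each.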

Figure 2. 100GbE provides wider transport lanes for increased density and higher bandwidth with fewer cables and switches.

Whether or not you are supporting AI/ML or DA workloads today, most modern data centers are highly virtualized and running on solid-state storage. NVMe storage is just around the corner and slated to push performance standards further. For both fast NVMe storage and computational inferencing workloads, predictable and reliable results depend on fast and accurate data delivery, and this starts at the network. The M-Series Ethernet switch meets and exceeds the most demanding criteria for wire-speed performance, so the most demanding cognitive computing applications can make real-time decisions.

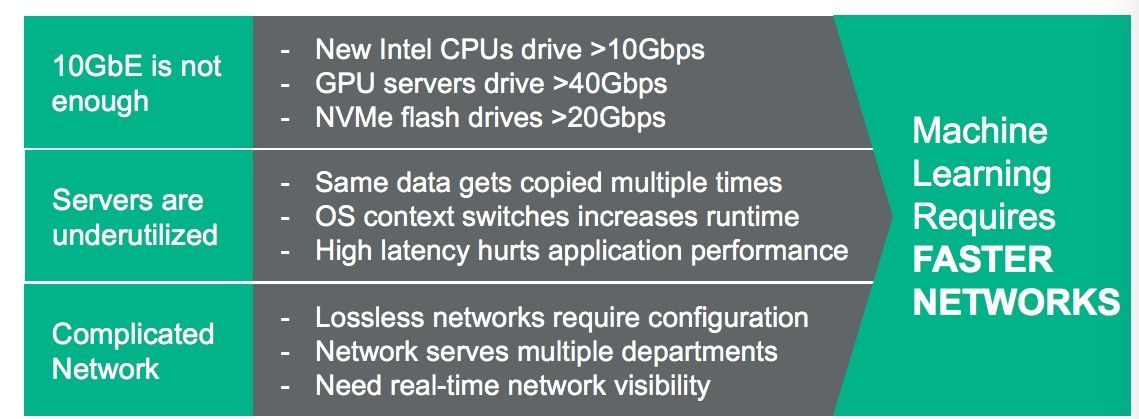

Figure 3. Artificial Intelligence, Machine Learning and Data Analytics require faster networks.

Rapid improvements in data center computing and low-latency storage solutions have shifted data center performance bottlenecks to the network. 10GbE networks introduce delays and will become a major constraint on performance. Therefore, today’s data centers should be designed to handle this anticipated bandwidth with a low-latency, lossless Ethernet fabric. Beyond the performance advantages, the economic benefits of running NVMe and AI workloads over Ethernet are substantial. M-Series switches deliver this performance at a highly competitive price point, yielding outstanding ROI.

Flexible port counts and cable options allow up to 64 fully redundant 10/25/50GbE ports in 1U of rack space. The M-Series is a game changer for your state-of-the-art data center, so you can both maximize value and future-proof the data center.

Learn more about HPE Storage Networking.

Featured articles:

- How can machine learning help your organization

- Why the fuss about AI?

- How supercomputing democratizes AI

- Want to know the future of technology? Sign up for weekly insights and resources

1. Fall 2017, Data Center Ethernet Switch Market: Revenue, Crehan Research, Inc.

Meet Around the Storage Block blogger Faisal Hanif, Product Management, HPE Storage and Big Data. Faisal is part of HPE’s Storage & Big Data business group leading Product Management & Marketing for next generation products and solutions for storage connectivity, network automation & orchestration. Follow Faisal on Twitter: @ffhanif
