HPE 3PAR Memory Driven Flash on the HPE 3PAR array with Oracle Databases

Introduction to Memory Driven Flash

NVMe is a protocol designed for efficient access to PCIe-attached non-volatile memory, including Storage Class Memory (SCM). HPE 3PAR Memory Driven Flash (MDF) uses Storage Class Memory and NVMe to provide a level 2 (L2) cache between the storage array's DRAM and its SSDs. With this L2 cache, data can be read back into DRAM at a lower latency than going all the way back to the SSDs to fetch it again. It is a helper that sits between the SSDs and DRAM.

HPE 3PAR 9450 and 208x0 storage arrays can be equipped with Memory Driven Flash modules. Data that is likely to be read with random small-block reads from 3PAR virtual volumes is eligible for L2 caching. The result is lower read latency in the storage array and less latency variation between reads. The latency savings are on the order of microseconds per read, but over millions or even billions of reads they can add up to a real benefit for a critical, latency-sensitive workload.

This blog gives a general overview of Memory Driven Flash when used with Oracle database workloads. It explains the important aspects and provides application and product technical videos, plus an appendix of example 3PAR CLI commands.

General Data Flow for L2 Caching with MDF

To see benefits from HPE 3PAR Memory Driven Flash, an Oracle workload needs IO operations that can result in random reads with a block size of 64k or less, in increments of 4k. A 4k block is the smallest that Memory Driven Flash will recognize.
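As a rough illustration of the size rule above, the following Python sketch checks whether a read size falls in the 4k to 64k range in 4k increments. The function and constant names are hypothetical and chosen only for this example. The sketch checks size only; whether a read is treated as random is decided inside the array and is not modeled here.

```python
KIB = 1024
MIN_BLOCK = 4 * KIB   # smallest read size MDF recognizes (from the text)
MAX_BLOCK = 64 * KIB  # largest read size targeted for L2 caching (from the text)


def is_mdf_candidate_size(block_size_bytes: int) -> bool:
    """Return True if the read size is between 4k and 64k and a multiple of 4k.

    Hypothetical helper for illustration; it is not part of any HPE tool.
    """
    return (
        MIN_BLOCK <= block_size_bytes <= MAX_BLOCK
        and block_size_bytes % MIN_BLOCK == 0
    )


if __name__ == "__main__":
    # Print the verdict for a few sample read sizes, given in KiB.
    for size_kib in (2, 4, 8, 12, 64, 128):
        verdict = is_mdf_candidate_size(size_kib * KIB)
        print(f"{size_kib}k read: candidate size = {verdict}")
```

For example, single-block reads from a database using the common 8k Oracle block size pass this size check, while a 128k multiblock read does not.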

The Oracle demo video demonstrates the effect array read latency has on wait times.

Reviewing Figure 1 we can see the comparison of processes when we do normal IO versus when we are using Memory Driven Flash.

3PAR without Memory Driven Flash

To keep things in context, the explanation is simplified. In this example the SSDs symbolize an entire virtual volume, which spans multiple SSDs. On the left side of the diagram we see normal IO, where reads come from the SSDs through the controller into DRAM. Writes go into DRAM and are acknowledged to the OS; they are also written to the SSDs. This is the normal operation of the 3PAR on a per-node basis. In practice, much more is happening to maintain cache coherency between the array nodes.

3PAR with Memory Driven Flash

Looking at the right side of Figure 1, we see the 3PAR using Memory Driven Flash. Each node on the array will have an MDF module installed. This adds an L2 cache component to the IO process. Reads can come from the SSDs as well as from Storage Class Memory through NVMe. For a specific data location, the first read will always come from the SSDs. Data can then be de-staged from DRAM into Memory Driven Flash. When this data is needed again, instead of being read from SSD again it can be accessed from DRAM or Storage Class Memory. This results in more effective data access within the storage array and can result in lower read latencies at the host running the Oracle workload. The Oracle video demonstrates how this benefit can be seen at the host. On a 4-node array there will be 750GB x 4 in MDF, for a total of 3TB of MDF. The optimal working set should fit within (Total MDF + Total DRAM) on the array. Memory Driven Flash begins to be effectively used when DRAM fills and data begins to be de-staged to it. The ideal situation is when 100% of the working set fits into total cache.

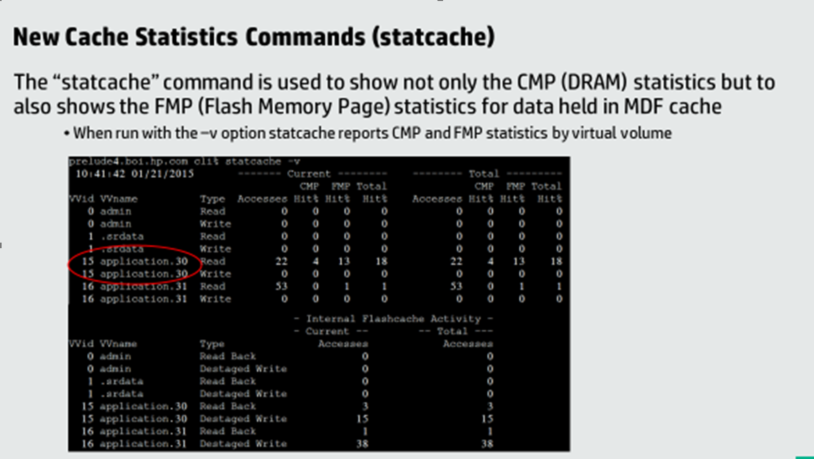
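The sizing guidance above (working set within Total MDF + Total DRAM) can be sketched as simple arithmetic. This is a minimal illustration, not a sizing tool: the 750GB per node comes from the text, while the DRAM value per node is a hypothetical input that you would replace with your array's actual figure. It also assumes, as a simplification, that all of the quoted DRAM is available for caching data.

```python
MDF_PER_NODE_GB = 750  # per-node MDF capacity quoted in the text


def total_cache_gb(nodes: int, dram_per_node_gb: float) -> float:
    """Total MDF + DRAM across all nodes, in GB.

    dram_per_node_gb is an assumed input; the text does not state it.
    """
    return nodes * (MDF_PER_NODE_GB + dram_per_node_gb)


def working_set_fits(working_set_gb: float, nodes: int,
                     dram_per_node_gb: float) -> bool:
    """True if the working set fits entirely in total cache (the ideal case)."""
    return working_set_gb <= total_cache_gb(nodes, dram_per_node_gb)


if __name__ == "__main__":
    nodes = 4
    dram_gb = 128  # hypothetical DRAM per node, for illustration only
    print(f"MDF only: {nodes * MDF_PER_NODE_GB} GB")
    print(f"MDF + DRAM: {total_cache_gb(nodes, dram_gb)} GB")
    for ws in (2000, 3500, 5000):  # hypothetical working set sizes in GB
        print(f"working set {ws} GB fits: {working_set_fits(ws, nodes, dram_gb)}")
```

With four nodes the MDF portion alone is 4 x 750GB = 3000GB, which matches the 3TB figure in the text.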

With real workloads such as Oracle and multiple instances running workloads, your storage expert will need to monitor the filling of the DRAM (CMP) and MDF (FMP) to understand the characteristics of the workloads running on the array.

Write activities remain the same as without MDF. There is no concept of a write hit from the host’s perspective.

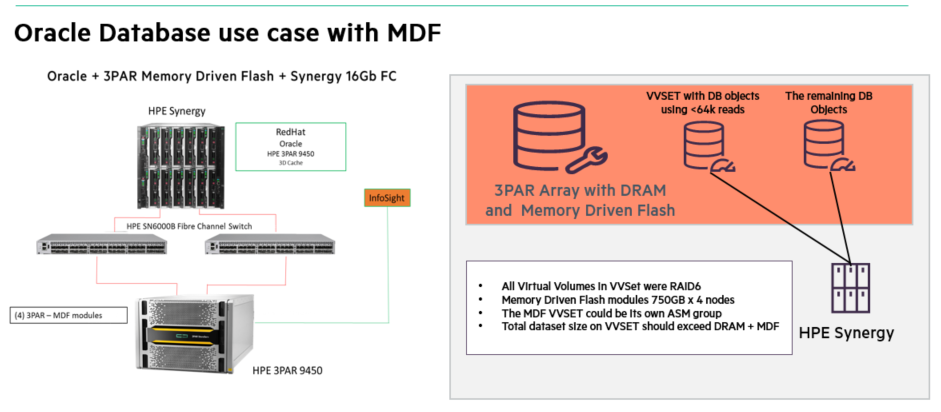

Example use case with Oracle database

Figure 2 shows an example use case for MDF with an Oracle workload. We start with the assumption that a set of database objects has been identified as being accessed by Oracle processes using random reads with block sizes of 64k or less (e.g. you are seeing excessive "db file sequential read" wait times). For instance, we can use an OLTP order entry workload doing a fair amount of reading and rereading of data. These database objects can be moved into their own ASM disk group, created on a VVSET that is enabled to use HPE 3PAR Memory Driven Flash. The remainder of the database objects can reside on normal virtual volumes or VVSETs.

With Memory Driven Flash enabled, the data from these random reads can be de-staged into MDF. When this data is referenced again, it will be accessed up to 50% faster inside the array. Tests run in the HPE labs have shown up to a 37% decrease in wait times for Oracle User I/O class waits like "db file sequential read". This can reduce the time it takes some processes, such as small queries, to execute. Since latency is already very low with flash technologies, the benefit is seen across many IO operations over time, and MDF can also reduce latency deviations to a minimum.

Memory Driven Flash can be enabled selectively on specific VVs or enabled for the entire array. If specific VVs are used with Oracle ASM, put the VVs into a VVSET and define the VVSET as its own ASM disk group. If filesystems are used, define the file system on the VV or on multiple VVs in a VVSET.

Oracle workload read latency demo video

There are some Oracle stats which are available in the AWR report which fall into the wait class of User I/O. The Oracle video embedded in this blog discusses a few of them. One example is “db file sequential read” waits. These waits can often be seen in the Top 5 of the AWR report. There are 3 important considerations:

1. The number of occurrences of the wait.

2. The actual wait time in microseconds.

3. The fact that there are multiple factors that can affect the wait time, one of which can be the read latency from the array.
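The first two considerations combine into a total wait time: occurrences multiplied by the average wait. The Python sketch below shows that arithmetic and applies a percentage reduction to it. All input numbers are hypothetical, the function names are made up for this example, and the 37% value is the "up to" lab figure quoted in this blog, so the result is a best-case bound rather than a prediction. The third consideration (other factors affecting wait time) is deliberately not modeled.

```python
def total_wait_seconds(wait_count: int, avg_wait_us: float) -> float:
    """Total time spent in a wait event: occurrences x average wait (microseconds)."""
    return wait_count * avg_wait_us / 1_000_000


def reduced_wait_seconds(total_s: float, reduction: float) -> float:
    """Apply a fractional reduction (0.0 to 1.0) to a total wait time."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0.0 and 1.0")
    return total_s * (1.0 - reduction)


if __name__ == "__main__":
    waits = 50_000_000   # hypothetical number of "db file sequential read" waits
    avg_us = 400.0       # hypothetical average wait in microseconds
    best_case = 0.37     # "up to 37%" from the HPE lab tests; an upper bound

    before = total_wait_seconds(waits, avg_us)
    after = reduced_wait_seconds(before, best_case)
    print(f"total wait before: {before:.0f} s")
    print(f"best-case total wait after: {after:.0f} s")
```

The point of the sketch is that a small per-read saving only matters in aggregate, which is why the number of occurrences is listed first.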

Always engage your Oracle AWR expert to analyze the Oracle wait times, and compare them with the latencies seen by your storage experts if there is any concern about the correlation to storage.

Note: A new white paper from Solutions Engineering is pending. A reference to it will be added to this blog when it is released.

The Oracle workload demo video below gives a real-time demonstration of how MDF decreases latency resulting in lower wait times. The tool used in the demo is an Oracle heat map tool that visually shows the latency metrics.

Memory Driven Flash product application video

This Memory Driven Flash video gives an overview of the product itself.

Memory Driven Flash product technical video

Please post any questions you would like addressed. General discussion is ALWAYS welcome!

Best Regards

Todd Price – HPE Technical Marketing Engineering – Oracle Solutions

Appendix

Examples of configuration commands (3PAR CLI)

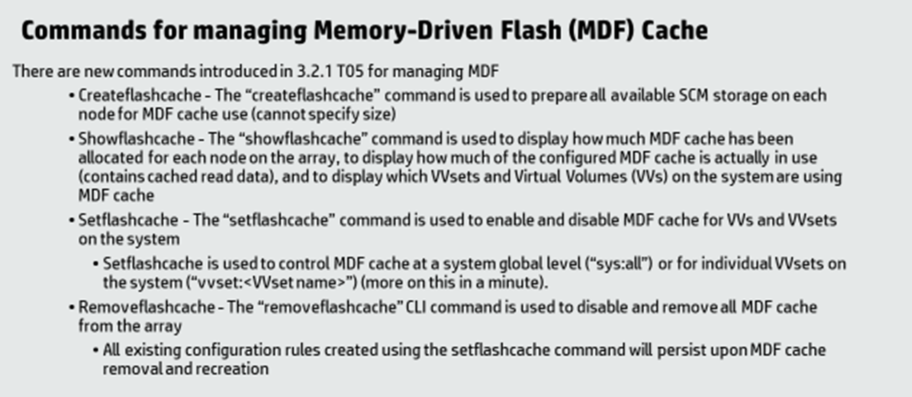

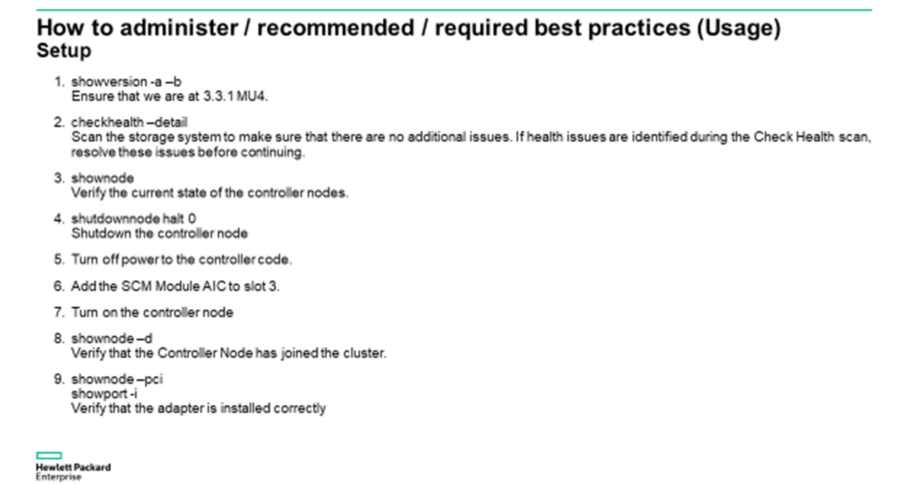

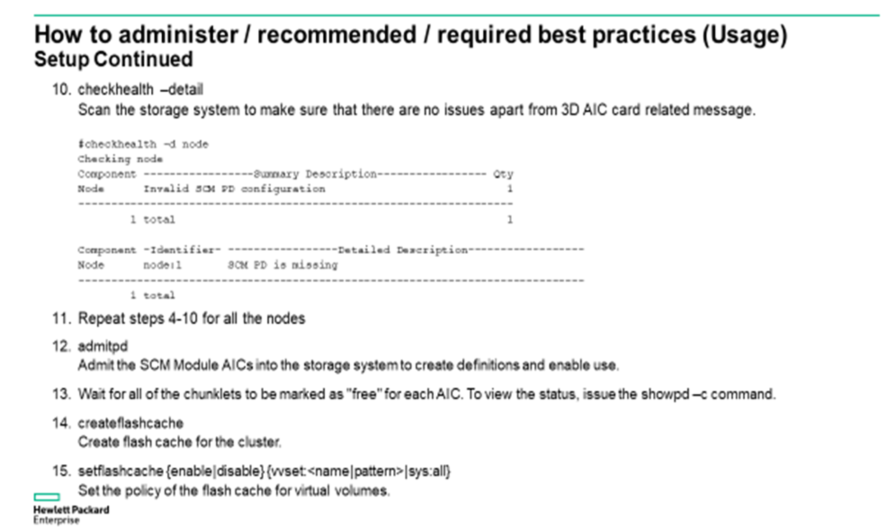

The images in this appendix show some example 3PAR CLI commands for managing Memory Driven Flash. Using these commands assumes the MDF modules have been installed on a supported 9450 or 208x0 array and that 3PAR software release T05 is present.
