HPE Storage Solutions for SAP HANA Native Storage Extension (Article 2 in 3-part series)

In my previous article, HPE Storage Solutions for SAP HANA Data Tiering, we saw how much SAP HANA environments benefit from data tiering. In this blog we will talk about how HPE Storage enables you to use the Native Storage Extension technology.

SAP HANA Native Storage Extension, or NSE, is a tiering technology that helps segregate hot and warm data without the need for an external hardware tier.

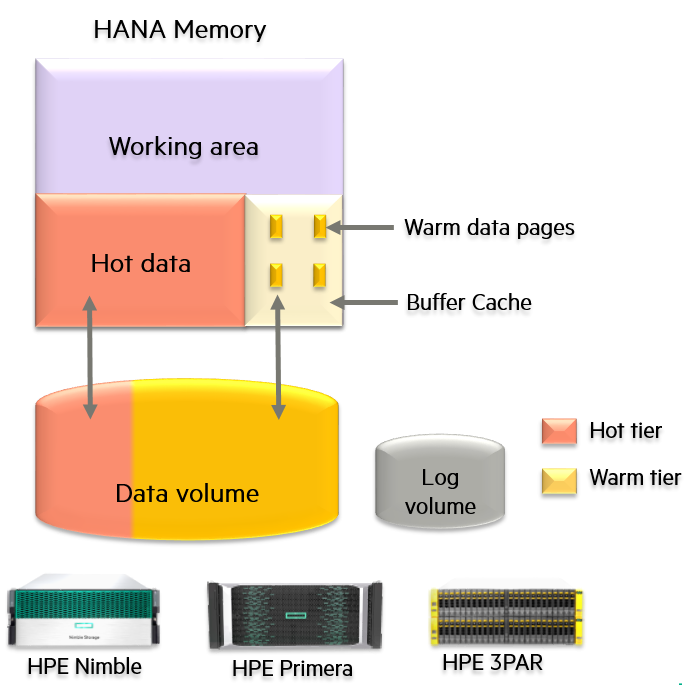

In principle, SAP HANA NSE extends the existing data volume in a SAP HANA system to store warm data. This frees up memory for hot, mission-critical data. As seen in Figure 1, any SAP HANA implementation divides the main memory into two halves: the working area and the HANA hot data area. Once the HANA admin or the SAP application designates data as hot or warm, NSE flushes all the warm data to the underlying extended data volume. In addition, NSE creates a buffer cache in the HANA hot data area to hold warm data temporarily. When the SAP application using HANA queries warm data, that data is loaded page by page into this buffer cache. Subsequent queries that access the same warm data pages find them already in the buffer cache, which gives faster access and better query performance. NSE implementation does not impact the log volume sizing.
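The page-by-page loading described above can be illustrated with a toy cache. This is only a sketch under assumptions: the class and function names are hypothetical, and the least-recently-used eviction is an assumption made for the illustration, since this article does not describe how HANA decides which pages to evict.

```python
from collections import OrderedDict


class BufferCache:
    """Toy page cache holding at most `capacity` warm-data pages in memory.

    Eviction is least-recently-used, which is an assumption for this
    sketch and not a statement about SAP HANA internals.
    """

    def __init__(self, capacity, read_page_from_disk):
        self.capacity = capacity
        self.read_page_from_disk = read_page_from_disk
        self.pages = OrderedDict()  # page_id -> page contents
        self.disk_reads = 0

    def get(self, page_id):
        if page_id in self.pages:
            # Cache hit: the page is already in memory, no disk access.
            self.pages.move_to_end(page_id)
            return self.pages[page_id]
        # Cache miss: load the page from the data volume.
        self.disk_reads += 1
        data = self.read_page_from_disk(page_id)
        self.pages[page_id] = data
        if len(self.pages) > self.capacity:
            # Evict the least recently used page to stay within capacity.
            self.pages.popitem(last=False)
        return data


def fake_disk(page_id):
    """Hypothetical stand-in for reading a page from the data volume."""
    return f"page-{page_id}"


cache = BufferCache(capacity=2, read_page_from_disk=fake_disk)
cache.get(1)  # miss, read from disk
cache.get(2)  # miss, read from disk
cache.get(1)  # hit, served from the buffer cache
print(cache.disk_reads)
```

Only the first access to each page touches the disk; repeated queries against the same pages are served from memory for as long as the pages stay in the cache.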

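Column-loadable or page-loadable data?

In NSE terms, hot data that stays fully in memory is column-loadable, and warm data that is loaded from disk page by page is page-loadable. Designating data this way brings three benefits: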

1. Increased HANA database capacity. With NSE, more data can be stored on the same HANA infrastructure. All it requires is extending the storage volume and designating data as column-loadable or page-loadable.

2. Performance and resource segregation. Column-loadable, mission-critical data should be the most performant in the database, and NSE ensures that main memory is used for it. Memory should not be wasted on data that is not frequently accessed.

3. Lower TCO. With no extra compute or networking hardware required, NSE enables you to lower your TCO per TB of data.

HPE Storage supports NSE with its leading storage products, namely HPE Primera, HPE Nimble and HPE 3PAR systems. These are SAP HANA TDI certified and can be used as is with NSE. As expected, query performance for column-loadable data will be better than for page-loadable data, because warm data pages must first be loaded from disk. However, once the pages have been loaded into the buffer cache, subsequent queries perform the same as queries on in-memory hot data.

As an example, here is how NSE helps increase the total database size for SAP HANA, for a server with 2 TB memory:

| DB and storage sizing without NSE | | NSE is employed with data divided into page- and column-loadable types | |
|---|---|---|---|
| Total RAM | 2 TB | Total RAM | 2 TB |
| Work area | 1 TB (50% RAM) | Work area | 1 TB (50% RAM) |
| HANA hot data | 1 TB | Buffer cache | 200 GB |
| Data volume | 1.2 x RAM = 2.4 TB | HANA hot data | 800 GB |
| Total DB size | 1 TB | HANA warm data | 200 GB x 8 = 1.6 TB |
| | | Data volume size | 2.4 TB + 1.6 TB = 4 TB |
| | | Total DB size | 800 GB + 1.6 TB = 2.4 TB |

In this example, NSE increases the total size of the database from 1 TB to 2.4 TB with the same compute resources and just 1.6 TB of additional storage.
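The arithmetic in the table above can be reproduced with a short calculation. This is a sketch, not an official sizing tool: the function name and parameters are hypothetical, and the ratios (50% work area, 1.2 x RAM data volume, 8x warm data per unit of buffer cache) are simply the ones used in the example table, with 1 TB taken as 1000 GB as the table does.

```python
def nse_sizing(ram_gb, buffer_cache_gb,
               warm_to_cache_ratio=8,
               work_area_fraction=0.5,
               data_volume_factor=1.2):
    """Reproduce the example table's sizing arithmetic (all values in GB).

    The default ratios are taken from the example table in this article;
    they are assumptions of this sketch, not general sizing rules.
    """
    work_area_gb = ram_gb * work_area_fraction
    # Hot data shares the non-work-area half of RAM with the buffer cache.
    hot_data_gb = ram_gb - work_area_gb - buffer_cache_gb
    # Warm data on disk is sized as a multiple of the buffer cache.
    warm_data_gb = buffer_cache_gb * warm_to_cache_ratio
    # The base data volume is extended by the amount of warm data.
    data_volume_gb = data_volume_factor * ram_gb + warm_data_gb
    return {
        "work_area_gb": work_area_gb,
        "hot_data_gb": hot_data_gb,
        "warm_data_gb": warm_data_gb,
        "data_volume_gb": data_volume_gb,
        "total_db_gb": hot_data_gb + warm_data_gb,
    }


without_nse = nse_sizing(ram_gb=2000, buffer_cache_gb=0)
with_nse = nse_sizing(ram_gb=2000, buffer_cache_gb=200)
print(without_nse)
print(with_nse)
```

Setting the buffer cache to zero gives the left-hand column of the table, and a 200 GB buffer cache gives the right-hand column.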

For more details on the implementation, sizing and examples, please refer to the technical whitepaper I wrote or the Brighttalk session I gave on this topic.

These illustrate NSE implementation using TPC-H, an industry-standard decision support benchmark dataset. To use NSE, we first split a table into two partitions, using date as the criterion. The first partition holds the data from the last two years, which is the most valuable data in most enterprises. The second partition holds all the remaining data, older than two years. This split reflects the data priority and value criteria that most enterprises have. Once the tables are partitioned, we assign the second partition to be page-loadable, so that the corresponding data gets moved to disk. The first partition, by default, remains column-loadable and in memory. Lastly, we show how SQL queries perform on both of these types of data.
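The two-year split rule can be sketched in a few lines. This only illustrates the classification logic; it is not the HANA partitioning syntax, which the whitepaper covers. The function names, the sample dates and the reference date are all hypothetical.

```python
from datetime import date


def two_year_cutoff(today):
    """Return the date two years before `today`."""
    try:
        return today.replace(year=today.year - 2)
    except ValueError:
        # `today` is Feb 29 and the target year is not a leap year.
        return today.replace(year=today.year - 2, day=28)


def load_unit_for(row_date, today):
    """Classify a row by date, following the split described above.

    Rows from the last two years go to the first partition
    (column-loadable, in memory); older rows go to the second
    partition (page-loadable, on disk).
    """
    if row_date >= two_year_cutoff(today):
        return "column-loadable"
    return "page-loadable"


today = date(2021, 6, 1)  # hypothetical reference date
sample_dates = [date(2020, 1, 15), date(2018, 3, 1), date(2019, 6, 1)]
assignments = {d.isoformat(): load_unit_for(d, today) for d in sample_dates}
print(assignments)
```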

Please drop in a comment on how this solution fits your or your customers' SAP environment, and let us know if you have any comments or queries.

Until my next article -- Happy tiering!

Anshul Nagori

Hewlett Packard Enterprise

twitter.com/HPE_Storage

www.linkedin.com/in/anshulnagori/

hpe.com/storage


Meet HPE Blogger Anshul Nagori, Senior Worldwide Technical Marketing Engineer. Anshul works for the worldwide storage solutions team at HPE, and has more than 13 years of IT experience. He is a regular speaker at SAP and HPE events. His areas of focus include SAP HANA, SAS Analytics, storage, data management, and data protection solutions. Connect with Anshul on LinkedIn!
