08-13-2014 08:56 AM

Virtual Connect FlexFabric connectivity to Nexus5K

Avi had a configuration question:

***********

Hi all,

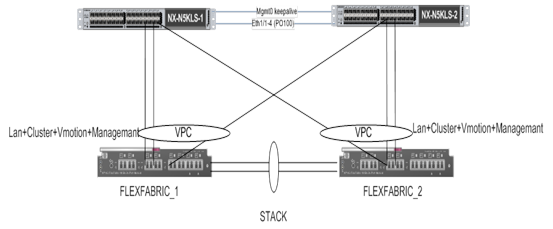

We want to implement the following connectivity between the VC FlexFabric and the Nexus5K.

Ports x3 and x4 are connected with LACP, each port to a different Nexus, as the SUS for the VM LAN.

Port x6 has no LACP and is the SUS for mgmt + vMotion. (There are no free ports on the FlexFabric module.)

Now the network engineer says that if the vPC peer link between the two Nexus switches fails, all the vPC connections from the secondary Nexus will be disabled, but the single connection that is not a vPC (port x6) will remain linked. Because of that, Smart Link will not function, and mgmt + vMotion will not work.

They suggest changing the connectivity to this one.

I know it’s not best practice from the VC FlexFabric and VMware side. Also, I think it’s not recommended to build an aggregation with an odd number of links.

Any comments on how to implement this scenario?

*************

Input from Robert:

The first configuration is common throughout VC environments. You may need to get more detail from the network engineer regarding the specific Nexus failure they are describing. The failure scenario being described is sometimes referred to as a black hole.

Typically, if the vPC fails, the Nexus port channels will dissolve. When the Nexus port channels dissolve, the VC X3/X4 port channels will also dissolve. Each VC would still have a surviving 10G linked-active path to forward traffic, and each would have one port linked-standby. Both X6 ports should remain in linked-active state and continue to forward traffic, unless one of the Nexus switches disables the ports. That probably does not happen, if I understand the failure correctly. When the vPC fails, there is no longer a peer connection. With no peer connection, there should be no potential for a loop between the adjacent Nexus switches. The Nexus should continue to forward traffic from X6 on both VC modules to the next switching layer. I do not believe the Nexus will stop forwarding traffic on non-peer links when the vPC ports all fail.

I’m sure someone will correct me if my brief assessment is wrong.

*****************

Reply from Avi:

Thanks for the reply,

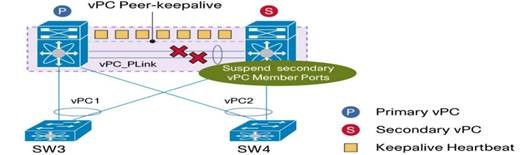

As far as I understand, if something goes wrong with the vPC peer connection between the two Nexus switches, the secondary switch will suspend ONLY the uplink and downlink ports that are configured as vPC ports. A single port like x6 in our case will go into an orphan state, which means that the port remains linked but no traffic is allowed through it. Because of that, Smart Link will not function and we will lose traffic.
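The concern above can be illustrated with a small Python model. This is only a sketch, assuming Smart Link drops the server downlinks only when every uplink in the network has lost link, and assuming the orphan-port behavior is as described in this post. The `Uplink` class and both function names are hypothetical and are not part of any HPE or Cisco tool.

```python
from dataclasses import dataclass


@dataclass
class Uplink:
    name: str
    link_up: bool      # physical link state as seen by the VC module
    forwarding: bool   # whether the upstream switch actually passes traffic


def smart_link_drops_downlinks(uplinks):
    """Assumed rule: Smart Link reacts only when every uplink has lost link."""
    return all(not u.link_up for u in uplinks)


def traffic_black_holed(uplinks):
    """Traffic is silently lost if nothing forwards but Smart Link never fires."""
    no_path = not any(u.link_up and u.forwarding for u in uplinks)
    return no_path and not smart_link_drops_downlinks(uplinks)


# Scenario from the post: x6 towards the secondary Nexus stays linked
# but (per the poster's understanding) passes no traffic.
x6_secondary = [Uplink("x6", link_up=True, forwarding=False)]

print(smart_link_drops_downlinks(x6_secondary))  # False: link is still up
print(traffic_black_holed(x6_secondary))         # True: no failover is triggered
```

If the port lost link instead (`link_up=False`), the same model would have Smart Link drop the downlinks, letting the host's NIC teaming fail over to the other module.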

**************

Input from Michael:

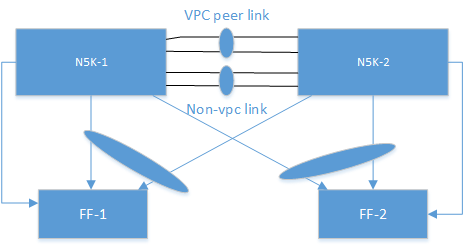

The network engineer’s assumption is correct: the non-vPC ports will stay active with the peer link down. However, this is by Cisco design, and that traffic should still forward upstream in a split-brain scenario (i.e. when the vPC peer link is down). The Cisco recommendation for your deployment scenario, though, is to have a separate non-vPC link between the N5Ks so that vMotion/management traffic can still forward east-west if the vPC peer link is down.

This is documented in their best practice guide: http://www.cisco.com/c/dam/en/us/td/docs/switches/datacenter/sw/design/vpc_design/vpc_best_practices_design_guide.pdf

“We recommend that you create an additional Layer 2 trunk port-channel as an interswitch link to transport non-vPC VLAN traffic”

A quick topology of what I mean (sorry for the basic boxes):

This will solve the polarization issue with non-even links and maintain vMotion/Management traffic while the vPC peer link is down as well.
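As a rough illustration of why the extra inter-switch trunk helps, here is a minimal Python sketch. It assumes the non-vPC VLANs (mgmt and vMotion) are carried only on the additional Layer 2 trunk and the vPC VLANs only on the peer link. The VLAN IDs, link names, and the function name are hypothetical placeholders, not values from this thread.

```python
def east_west_path_exists(vlan, inter_switch_links):
    """True if any inter-switch link that is up carries the given VLAN."""
    return any(link["up"] and vlan in link["vlans"] for link in inter_switch_links)


VPC_VLANS = {100}          # hypothetical VM LAN VLAN (on the X3/X4 LACP uplinks)
NON_VPC_VLANS = {10, 20}   # hypothetical mgmt and vMotion VLANs (on X6)

# State of the links between the two Nexus switches after a peer-link failure.
links = [
    {"name": "vpc-peer-link", "vlans": VPC_VLANS, "up": False},
    {"name": "non-vpc-trunk", "vlans": NON_VPC_VLANS, "up": True},
]

for vlan in sorted(VPC_VLANS | NON_VPC_VLANS):
    print(vlan, east_west_path_exists(vlan, links))
```

In this model the mgmt and vMotion VLANs keep an east-west path across the separate trunk while the peer link is down, which is the point of the design recommendation quoted above.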

Let me know if you have any questions.

**************

Comments?