Mind the analytics gap: A tale of two graphs

When it comes to analytics, eliminating your company’s “insight gaps” and “execution gaps” is probably impossible, but closing the gap is not. One of the first things to do is to assess the situation realistically and set out your organization’s goal.

In this first blog of my planned series, I want to explore this topic and talk about how organizations need to define their ambition for the scope and scale of analytics. I don’t want to get too hung up on terms here, so in this post I’m going to be using the term “analytics” to cover all disciplines and technologies as performed by a data scientist, including artificial intelligence (AI), machine learning (ML), deep learning (DL), natural language processing (NLP), or descriptive/prescriptive analytics.

Are we getting better at analytics?

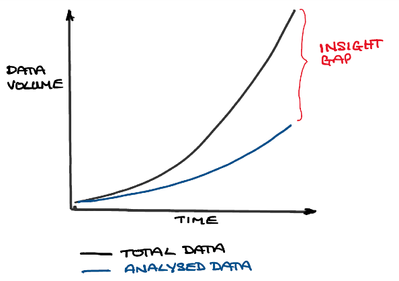

While the answer for some businesses may be "yes", the reality is that we just aren’t getting good enough, or fast enough, to keep up with the demands placed on us by the growth in data or business needs (Figure 1).

Some have argued that the move away from traditional RDBMS-based data warehouse technologies (such as Oracle and Teradata) to the Apache Hadoop ecosystem has done much to change the picture. But while this may be true of one or two specific use cases in many organizations, the majority of the time it was little more than a technology and skills change. In most cases, the adoption of Hadoop has actually exacerbated the “insight gap” problem, as it has allowed significantly more data to come under management without delivering the corresponding increase in the scope and scale of analytics required to make sense of it.

More troubling, perhaps, is that in almost every large enterprise you will still find a massive enterprise data warehouse running alongside the data lake. So far from coming down, costs have actually increased! I’m sure that increase is going to be felt more acutely post COVID-19 when budgets are squeezed further, and existing Cloudera and Hortonworks implementations will be required to migrate to the Cloudera Data Platform (CDP). Not only have Cloudera license costs gone up significantly since they became a de facto monopoly, but there is also bound to be a fairly sizable migration cost and painful effort involved, which can only get in the way of making any more progress on closing the insight gap.

What’s driving the gap?

In most cases, we see significant issues around infrastructure provisioning, rigid tooling choices, and an unnecessary number of manual steps in the process. It typically takes months to provision a new data scientist with a suitable environment to start work. I’m certainly not trying to lay the blame on IT departments for this. Many complex steps are required to provision hardware, create and secure the cluster, provision the data, apply appropriate permissions on the data (as well as corporate tooling such as ETL and data governance), provision the corporate data science stack, and coordinate and manage patching.

Operationalizing analytics to close the gap and create business value

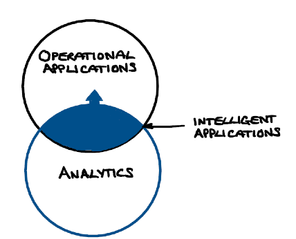

Analytics can be used in many settings in a business, but they create greater leverage when applied directly to business

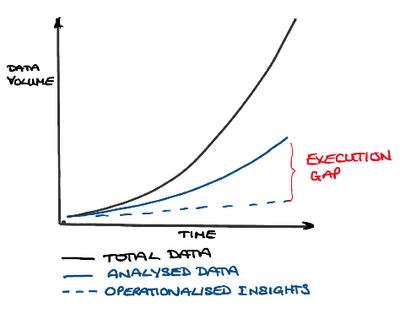

That brings us nicely to Figure 3, which illustrates the “execution gap”: the gap between an organization’s ability to analyze and make sense of data and its ability to apply that analysis in an operational setting. You will have seen this expressed more recently as the “operationalization problem” (often abbreviated to O16n).

You might well expect industries with a longer history of leveraging analytics to be much further ahead when it comes to integrating them into their applications, but in my experience, this is normally the exception and not the rule.

A lot of things need to happen in order to operationalize a typical machine learning model and put it into a production context. These will often include rebuilding the data pipeline and recreating the model, packaging it into a microservice, managing control groups for A/B testing, determining alerting levels for model drift, applying changes to business intelligence (BI) dashboards, and incorporating the model into the process in the application.
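To make one of those steps concrete, here is a minimal sketch of how alerting levels for model drift might be set. It is an illustration rather than a method from this post: it assumes drift is measured with the Population Stability Index (PSI) over a single numeric feature or score, and the function names `psi` and `drift_alert`, the ten equal-width bins, and the 0.1 / 0.25 thresholds (a common rule of thumb) are all assumptions you would tune for your own models.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample.

    Bins are equal-width over the baseline's range (an assumption of this
    sketch); live values outside that range fall into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI score to an alerting level (thresholds are hypothetical defaults)."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "ok"
```

In practice you would compute `psi` on a schedule, comparing the training-time distribution with a recent window of production data, and route the result of `drift_alert` to whatever monitoring the operations team already uses.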

Given that these many challenges touch on data architecture, data science, application development, testing, operations, and platform, it’s not difficult to see how organization and collaboration are important challenges we must tackle if we are to address the “execution gap”. Many companies are starting to experiment with combining roles such as DataOps with MLOps or MLOps with DevOps to overcome these challenges. To have a marked effect, attention also needs to be paid to the supporting tools and infrastructure.

Defining your goal

Eliminating your company’s “insight gaps” and “execution gaps” is probably impossible, but closing the gap is not.

As goals go, that might be sufficient. But to truly make a dent, I believe we need to aim high by setting a target of exponential growth in analytical capacity and capability, as Figure 4 illustrates. That way, even if we fail to hit the exponential target, we will have driven value from each of our investments along the way and the business will be in much better shape to react to future market dynamics. That’s the power of analytics when they’re used to drive the business.

If your goal is exponential growth, then automating the process is key, as is thinking about how to remove waste and defects from the system. In short, we need to industrialize the process. That’s a topic I’m going to be tackling in future blog posts.

This is the first of two blogs that explore how IT budgets and focus need to shift from business intelligence and data warehouse systems to data science and intelligent applications. You can read the second blog here:

Both set the stage for a subsequent series of blogs on industrializing data science. The best place to start is the first blog on the topic:

Other blogs in the series include:

- Industrialized efficiency in the data science discovery process

- Industrializing the operationalization of machine learning models

- Transforming to the information factory of the future

Doug Cackett

Hewlett Packard Enterprise

twitter.com/HPE_AI

linkedin.com/showcase/hpe-ai/

hpe.com/us/en/solutions/artificial-intelligence.html


Doug has more than 25 years of experience in the Information Management and Data Science arena, working in a variety of businesses across Europe, the Middle East, and Africa, particularly those that deal with ultra-high volume data or are seeking to consolidate complex information delivery capabilities linked to AI/ML solutions to create unprecedented commercial value.
