Accelerate AI, ML, and analytics workloads with a unified platform

Learn how you can replace the complexity of multiple stacks and move AI/ML models into production faster with HPE Ezmeral Unified Analytics.

Today’s analytic platforms come with a good deal of promise, but enterprises still struggle to achieve the full potential of the platforms they already have.[1] The cause is not bad technology or bad people, but a set of hurdles that complicate any organization’s efforts.[2]

The top hurdles include:

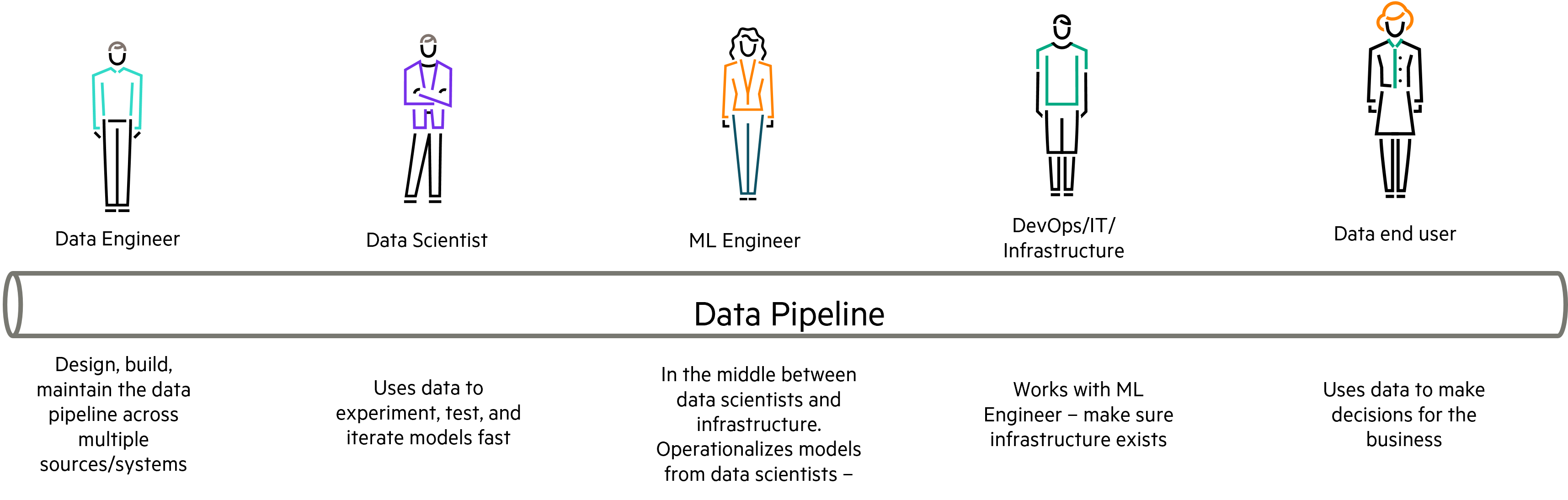

Data. Modern enterprises operate in hybrid environments, which means their data is distributed across multiple locations, formats, and types (in other words, data silos). Data engineers are responsible for unifying this data, cleansing it, and then creating the data pipelines used to train models. They routinely run a gauntlet of approval layers just to access hybrid data, which stalls pipelines and makes them a target for frustrated data scientists.

Technical challenges. Data moves along a "pipeline" from one persona to the next before delivering outcomes to data end users. Each of these personas has a preferred tool already in use and insists on continuing to use it. Data scientists create models in an environment that rarely matches production. Machine learning engineers struggle to reproduce these models and frequently need to refactor the code to ensure productization and repeatability.

As you can imagine, there’s a lot of friction between the personas.

Open source software. Each persona has a preferred tool or framework, and increasingly that tool is open source, such as Apache Airflow, Superset, Kubeflow, or Spark. These tools are powerful, but they were not designed to integrate, even with one another, which hinders cross-team collaboration and model operationalization. As a result, outcomes reach business owners slowly.

Solving these challenges requires an automated platform that reduces friction and increases collaboration and productivity. But with open source, the question becomes "Why would I buy a platform from a vendor when I can download the software and create my own platform?"

Building your own platform

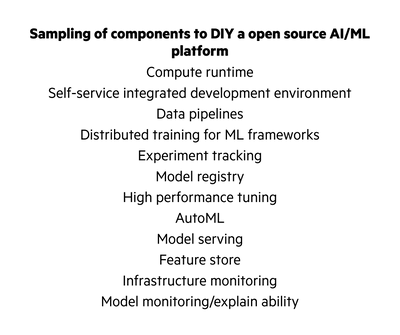

The breadth and depth of the work involved in creating your own AI/ML platform are wildly underestimated. It isn’t as simple as cobbling together a couple of open source tools, and it is by no means a simple three-engineer, three-month project.

To begin with, there is a very long list of components that need to be integrated, and there are easily five to 10 different choices for each component. A small sample of those components is shown in Figure 2.
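To get a rough sense of the scale of that choice space, the following Python sketch counts the possible tool combinations. The component list and its length are hypothetical placeholders, not taken from Figure 2; only the range of five to 10 choices per component comes from the text above.

```python
import math

# Hypothetical component categories, used only to pick a count for illustration.
COMPONENTS = [
    "orchestration",
    "notebooks",
    "feature store",
    "model serving",
    "monitoring",
    "data processing",
]


def stack_combinations(num_components: int, choices_per_component: int) -> int:
    """Number of distinct stacks when each component is chosen independently."""
    return choices_per_component ** num_components


def pairwise_integrations(num_components: int) -> int:
    """Number of component pairs that may need an integration of their own."""
    return math.comb(num_components, 2)


n = len(COMPONENTS)
print(f"{n} components, 5 choices each: {stack_combinations(n, 5):,} possible stacks")
print(f"{n} components, 10 choices each: {stack_combinations(n, 10):,} possible stacks")
print(f"{n} components: {pairwise_integrations(n)} pairwise integrations to consider")
```

Even with only six assumed components, the number of candidate stacks runs from the thousands to a million, which is one way to see why evaluating and integrating tools takes longer than expected.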

In the end you will discover:

- Tools don’t integrate well with each other

- Functionality overlaps in some areas while in others, gaps exist

- Some tools are open source, some proprietary

- Different tools target different personas

Bottom line: A do-it-yourself AI/ML open source platform is difficult!

But you have a dedicated team of crack DevOps, development, and site reliability engineers, so you push forward, only to discover that the next step is plumbing: networking and communications across external data lakes and warehouses, CI/CD processes, identity providers, infrastructure provisioning tools, tools to manage credentials and access controls, multi-tenancy, storage, and secrets. If you make it through all that, you’re ready to celebrate, right? Not so fast.

Every quarter, each component releases a patch or upgrade on its own schedule, which means your team of crack experts needs to apply each update individually and then test that it hasn’t broken the stability of the platform, disrupted productivity, or exposed the organization to risk.

Even though hybrid cloud environments have become the dominant deployment pattern for AI/ML and analytic workloads, there is no guarantee that open source software will function correctly in them. Enterprises building their own platform will need to “lift and shift” as well as rearchitect open source code to run in the cloud.[3]

Several sophisticated organizations with large platform and engineering teams have attempted this journey, only to tell us that their platform wasn’t easy to use, couldn’t be replicated, and lacked end-to-end support from any vendor.

A unified platform is the answer

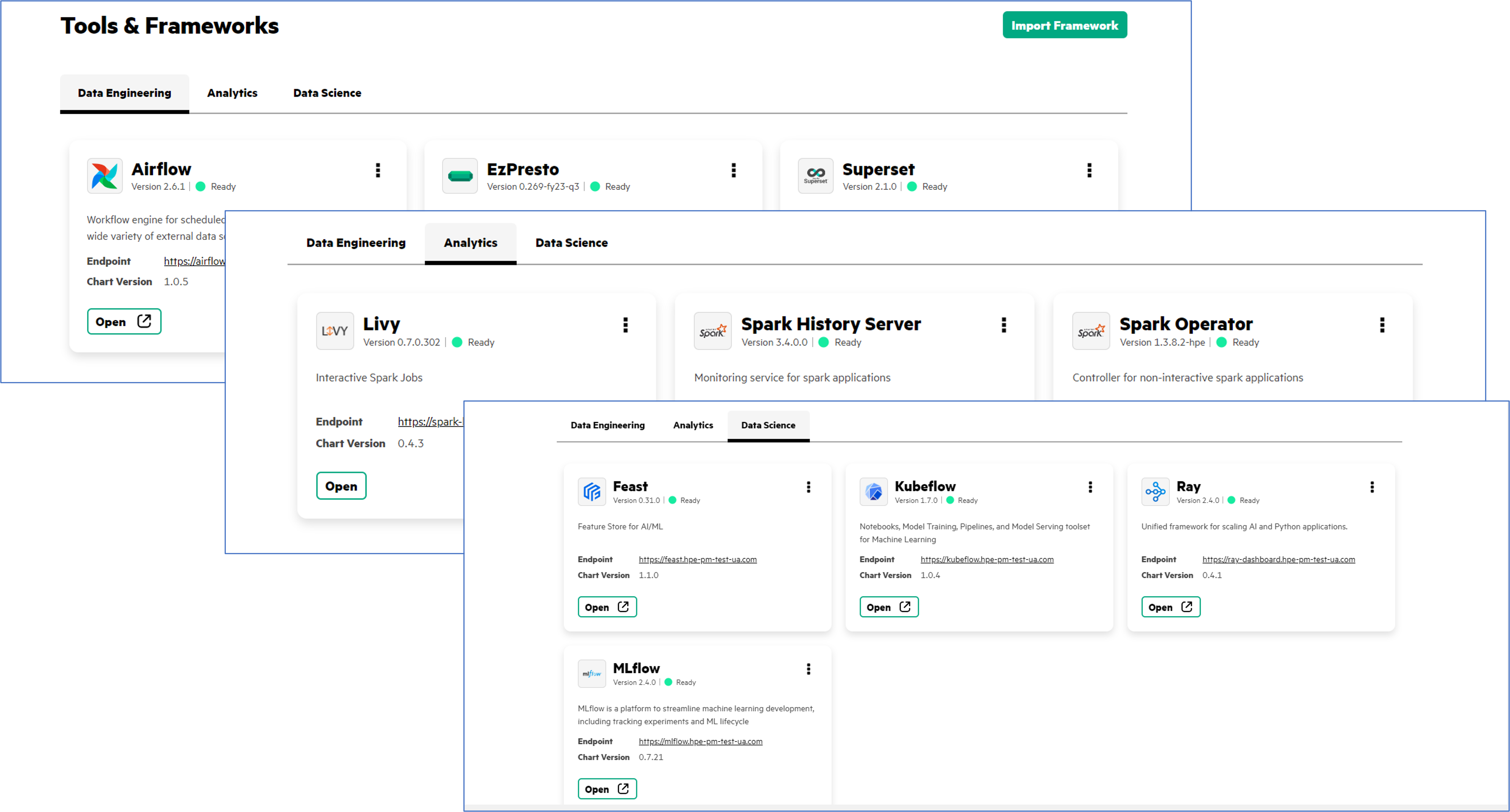

The alternative is a unified platform that spreads a single stack across on-premises and cloud environments, an approach that empowers developers and data science professionals to work on multiple use cases from a consistent environment using existing open source tooling.

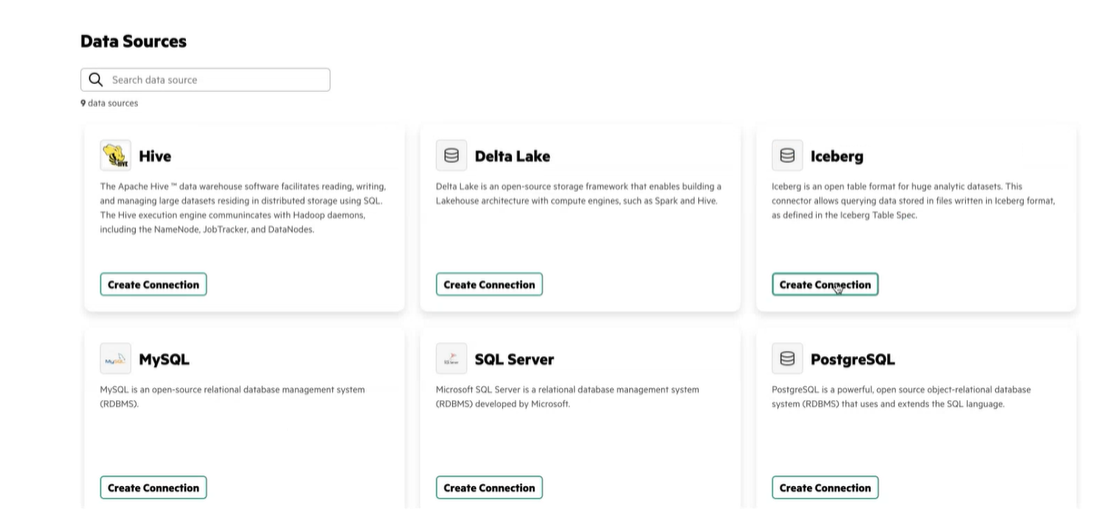

That is exactly what HPE Ezmeral Unified Analytics Software does. It’s a single solution stack for hybrid analytics and AI/ML workloads that empowers developers and data science professionals to work on multiple use cases using their preferred tools. It replaces the complexity of multiple stacks and allows models to be created and tested in production-like environments. It also automates complex hand-offs across the data pipeline and packages work into containers, so pipelines can run across hybrid infrastructure without code refactoring.

An import feature (green buttons in Figure 3) allows teams to ingest third-party and custom applications as needed. Connectors are available for Snowflake, Microsoft MySQL, Delta Lake, Teradata, and Oracle as well as popular structured and unstructured data sources.

Organizations that adopt a unified analytics platform benefit from open source tools that integrate seamlessly, come with simplified management, and run smoothly across a hybrid cloud environment.

See how you can successfully deploy data science and analytic workloads with HPE Ezmeral Unified Analytics Software. Watch the video to see the solution in action, then learn more here.

Joann Starke

Hewlett Packard Enterprise

twitter.com/HPE_Ezmeral

linkedin.com/showcase/hpe-ezmeral

hpe.com/software

[1] Unifying Analytics Across a Hybrid Cloud Environment, S&P Global (formerly 451), May 2023.

[2] Top 10 reasons why AI projects fail, Cognilytica.

[3] Unifying Analytics Across a Hybrid Cloud Environment, S&P Global (formerly 451), May 2023.

JoannStarke

Joann is an accomplished professional with a strong foundation in marketing and computer science. Her expertise spans the development and successful market introduction of AI, analytics, and cloud-based solutions. Currently, she serves as a subject matter expert for HPE Private Cloud AI. Joann holds a B.S. in both marketing and computer science.
