
05-11-2013 02:08 PM - last edited on 01-08-2014 11:21 PM by Maiko-I


VSA design and performance

I'm considering HP VSA for a storage refresh. I have 3 existing, identically configured HP DL380 G7s running vSphere 5.1, backed by a NetApp FAS2020A. The DL380s have no internal storage; vSphere runs off an SD card. From a networking standpoint, I have 2x 2910al switches with the 10GbE interconnect kit. Each DL380 has the onboard NIC and an add-in 4-port NIC. I have 2 pNICs dedicated to NFS communication, 2 pNICs for VM Network, and 2 pNICs for management and vMotion. In all three cases, there is 1 pNIC to Switch1 and 1 pNIC to Switch2. Each pair of pNICs has its own vSwitch with teaming set to route based on originating port ID. This gives me redundancy on the pNIC and switch side, but only 1Gig of throughput.

The NetApp has active/active controllers, with each controller's 2 ports in an LACP team connected to a single switch with a matching LACP trunk, so each controller has 2Gig of throughput capability.

The VSA configuration would initially use 2 of the DL380 G7s with 16x 900GB drives in RAID10, 1 VSA per host, and Network RAID10 between the 2 hosts. The 3rd host is currently being used at another site, so it's not part of the mix. My first question is about adding the 3rd host to the VSA configuration. Would my only options with 3 hosts be to move from NR10 to NR5, or to use NR10+1? NR5 would give me more usable space, but what would the impact on performance be?

My next question is about networking performance and design. As I've been reading up on HP VSA, the recommendation seems to be a 1 vNIC to 1 pNIC mapping, which yields only 1Gig with my current hardware. Given that this single 1Gig link would need to accommodate Network RAID traffic as well as communication between VMs and storage, how much of a bottleneck is 1Gig going to be? What would be involved in going beyond 1Gig without moving to 10Gig?

P.S. This thread has been moved from HP 3PAR StoreServ Storage to HP StoreVirtual Storage / LeftHand. - HP Forum Moderator


05-11-2013 07:35 PM


Re: VSA design and performance

If you add a third node to a cluster with an NR10 LUN, you will not need to change anything: the LUN will restripe itself across all three nodes and your total available cluster size will increase. Network RAID is a bit different from disk RAID, in that NR10 is really just "single mirror data", which means the data on the LUN is copied to two nodes of your cluster. You can have an even or an odd number of nodes and NR10 won't care. I would not recommend NR5 for production. NR10+1 is "double mirror data", which makes sure you have three copies of the data; this is helpful when you have a multi-site cluster, or if you are ultra-paranoid in a standard cluster that has at least three nodes.

For two nodes, 1Gb is probably enough, especially when your VSA and VMs are on the same host. I'm not an ESX guy, so I'll leave that to others to comment on.

Hopefully those HDDs are 10k or 15k drives, as 7.2k can be a bit slow, and VSAs aren't a magic sauce that will suddenly make slow disks fast.


05-11-2013 08:00 PM


Re: VSA design and performance

Disks would be 10K SAS. With the initial config of 16x 900GB in RAID10 on a host, the usable space after RAID10 will be 7.2TB per host (14.4TB raw). With NR10 in this config, I will have 7.2TB usable across the VSA cluster, correct? Now, if I add a 3rd host with the same 16x 900GB RAID10 config and stick with NR10, what does my usable space in the VSA cluster become?

A 3-host NR10 cluster can still only tolerate 1 host failing, and it can be any host, correct? To tolerate more than 1 host failing with NR10, you would need 4 hosts, and this would allow any 2 hosts to fail?

Is the 10TB-per-VSA limit strictly for the local disk space you give to a VSA, or does it also limit the aggregate space in a cluster?

Regarding IOPS: I can run an IOPS calculator on the single-host 16x 900GB 10K SAS RAID10 hardware config and get a number based on my workload. For this config, with a single host and no VSA, I know I can meet my IOPS demand at the 95th percentile. When I then add a 2nd host with an identical config, use VSA with NR10, and assume a 1Gig link for VSA communication, what happens to IOPS? What about when I add a 3rd host?


05-13-2013 03:23 AM


Re: VSA design and performance

3 hosts with 7.2 TB usable storage each would end up with 10.8 TB total space in the cluster as long as all volumes in that cluster use NR10.

A cluster has a total capacity of <smallest node capacity> * <number of nodes>. NR10 stores all data twice, so the space needed for a given LUN size is twice that of the LUN size. NR10+1 stores all data three times, so the space needed is three times as high.
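The capacity arithmetic in these posts can be checked with a small helper. This is a minimal sketch: it applies the smallest-node rule quoted above, treats 900GB as 0.9TB (decimal units), and ignores thin provisioning, snapshots and any space the VSA itself reserves, so treat the results as approximations rather than HP sizing figures.

```python
from __future__ import annotations


def raid10_usable_tb(drives: int, drive_tb: float) -> float:
    """Usable capacity of one host's RAID10 array: half the raw capacity."""
    return drives * drive_tb / 2


def cluster_usable_tb(node_capacities_tb: list[float], copies: int) -> float:
    """Approximate usable volume space in the cluster.

    Cluster capacity = smallest node capacity * number of nodes.
    Each volume consumes `copies` times its size: 2 for NR10, 3 for NR10+1.
    Ignores thin provisioning, snapshots and any per-node overhead.
    """
    if copies > len(node_capacities_tb):
        raise ValueError("need at least as many nodes as data copies")
    raw = min(node_capacities_tb) * len(node_capacities_tb)
    return raw / copies


per_host = raid10_usable_tb(16, 0.9)  # 16x 900GB drives in RAID10 -> 7.2 TB
for hosts in (2, 3):
    print(f"{hosts} hosts, NR10: {cluster_usable_tb([per_host] * hosts, 2):.1f} TB usable")
print(f"3 hosts, NR10+1: {cluster_usable_tb([per_host] * 3, 3):.1f} TB usable")
```

This reproduces the 7.2 TB (2 hosts) and 10.8 TB (3 hosts) NR10 figures; NR10+1 on the same three hosts would leave 7.2 TB. A smaller node drags every node down to its size, which is why mixed-size clusters waste space.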


05-13-2013 05:57 AM


Re: VSA design and performance

Everything Dirk said was correct.

For two- and three-node NR10 setups, you can only lose one node before LUN access is interrupted. When you go to 4+ nodes, you can sustain more failures, just like traditional hardware RAID10 (the only difference is that NR10 allows an odd number of nodes, while hardware RAID10 doesn't).
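To make the failure-tolerance point concrete, here is a minimal sketch under a deliberately simplified, hypothetical placement: copy k of stripe s sits on node (s + k) mod n. StoreVirtual's real stripe layout is not described in this thread, so treat this as an illustration of mirrored striping in general. It also ignores manager quorum, which in a real cluster also decides whether volumes stay online.

```python
from __future__ import annotations

from itertools import combinations


def replica_nodes(stripe: int, nodes: int, copies: int = 2) -> set[int]:
    """HYPOTHETICAL placement: copies of stripe s land on nodes s, s+1, ... (mod n)."""
    return {(stripe + k) % nodes for k in range(copies)}


def volume_online(nodes: int, failed: set[int], copies: int = 2) -> bool:
    """True if every stripe still has at least one copy on a surviving node.

    The placement repeats every `nodes` stripes, so checking one period is enough.
    """
    return all(replica_nodes(s, nodes, copies) - failed for s in range(nodes))


def survivable_failures(nodes: int, k: int, copies: int = 2) -> list[tuple[int, ...]]:
    """All sets of k failed nodes that leave the volume online."""
    return [f for f in combinations(range(nodes), k) if volume_online(nodes, set(f), copies)]


for n in (2, 3, 4):
    print(f"{n} nodes, NR10, survivable double failures:", survivable_failures(n, 2))
```

In this model, 2 and 3 nodes survive no double failure, while 4 nodes survive only the two non-adjacent pairs, (0, 2) and (1, 3). That matches the hardware RAID10 analogy: a second failure is survivable only if it doesn't take out both copies of the same data, so a 4-node NR10 cluster does not tolerate *any* 2 nodes failing.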

The 10TB limitation for a VSA applies to whatever you present to the VSA, so the raw disks can be larger if you present the VSA with a hardware RAID volume. For Hyper-V, you can present the VSA with either a .vhd/.vhdx or a passthrough disk. I'm torn on which is better, and I think it depends on your underlying hardware and your own preferences.

Did you say whether this is for ESX or Hyper-V? The IOPS for multi-node scalability will depend on the DSM you are using, with the HP DSM being the most scalable, but it is only available on M$ systems. I would talk with a VAR or an HP sales rep if you are concerned about planning, but it does scale similarly to standard hardware RAID10. The catch is that writes have to go to two nodes: the write lands on one node, that node copies it to the other node, and only then is the write confirmed, which means you need 2x the bandwidth for writes. The flip side is that reads can happen from any node, so your read bandwidth is the total bandwidth of the connections of the nodes that hold your data (for NR10 that is typically 2Gb, assuming you have 1Gb per node). Basically, the design of this SAN gives huge benefits to read operations and a slight performance hit on writes, but generally this is not an issue unless your SAN is really, really heavy on writes over reads (which most aren't).
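The read/write trade-off described above can be turned into a back-of-the-envelope ceiling. This sketch encodes only this post's rule of thumb (a write costs roughly twice its size in network traffic; reads can be spread over the nodes holding the data) and is not an HP formula. It ignores full duplex, iSCSI overhead, disk limits, and the single gateway connection per volume that ESX hosts use, so real numbers will differ.

```python
def max_client_gbps(read_fraction: float, link_gbps: float = 1.0, copies: int = 2) -> float:
    """Rough ceiling on client throughput for a Network RAID volume.

    Assumptions (rule of thumb from this thread, not an HP formula):
      * reads can be served by any of the `copies` nodes holding the data, so they
        share copies * link_gbps of link capacity;
      * every written byte crosses the network twice (to the first node, then
        copied to its mirror), so a write costs 2 units of link capacity.
    """
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    cost_per_unit = read_fraction * 1.0 + (1.0 - read_fraction) * 2.0
    return copies * link_gbps / cost_per_unit


for mix in (1.0, 0.65, 0.0):
    print(f"{mix:.0%} reads: ~{max_client_gbps(mix):.2f} Gb/s")
```

With 1Gb per node, this gives about 2.0 Gb/s for pure reads, about 1.48 Gb/s for a 65/35 read/write mix, and 1.0 Gb/s for pure writes. Changing `link_gbps` shows how the same ceiling scales with faster links.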

The best thing about the VSA solution is that if you need the hardware anyway, you can always test the VSA software for free with 100% functionality before you buy it. I would suggest going that route, maybe with a scaled-down hardware setup, to test whether you like the SAN. For me, I'm a bit upset that HP doesn't support anything like TRIM/UNMAP, which means there is no way for thin-provisioned volumes to shrink or stay small over time! That and the lack of SMI-S support will eventually be deal-breakers for my next SAN purchase, and you might want to consider them and ask HP about their plans for both as well.


05-13-2013 03:40 PM


Re: VSA design and performance

This is a VMware environment, ESXi 5.1. The lack of TRIM/UNMAP as of the 10.5 release is concerning, but I've been living without thin provisioning of storage volumes (never enabled it) for almost 3 years now and I've managed fine, so I can continue to do without TRIM/UNMAP in the future. However, I would consider new storage that doesn't support TRIM/UNMAP to be somewhat of a poor investment, should I want to use thin-provisioned LUNs.

I run about 65/35 read/write, so nothing too unusual for a typical mix. I'm a little concerned about having only a single GigE link for VSA communication, even with it being 2Gb for reads. A single dual-port 10GigE NIC in each host would let me direct-connect up to 3 hosts over 10GigE, and then I can use 1GigE as the failover option should there be NIC failures. With only 2 hosts, I can use 10GigE as the failover as well. I would likely go the 10GigE DAC route for VSA because it's not a lot of cost for a lot more bandwidth.
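The direct-connect plan boils down to a full-mesh count: with no switch, every host needs one port per peer. A minimal sketch (the dual-port NIC is the card from the post; nothing here is vendor-specific):

```python
def full_mesh(hosts: int) -> tuple:
    """Return (ports needed per host, cables needed) for a switchless full mesh."""
    if hosts < 2:
        raise ValueError("a mesh needs at least two hosts")
    return hosts - 1, hosts * (hosts - 1) // 2


PORTS_PER_NIC = 2  # one dual-port 10GbE card per host, as described above

for n in (2, 3, 4):
    ports, cables = full_mesh(n)
    verdict = "fits" if ports <= PORTS_PER_NIC else "needs more ports or a switch"
    print(f"{n} hosts: {ports} port(s)/host, {cables} DAC cable(s), dual-port NIC {verdict}")
```

A dual-port card covers 2 or 3 hosts (3 cables for 3 hosts, with a spare port per host in the 2-host case); a 4th host would need 3 ports per host or a 10GbE switch. The sketch only counts ports and cables. It doesn't address how a VSA with a single network interface would reach two peers over separate point-to-point links, which is worth checking against HP's networking guidance before buying.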

For alternatives to HP VSA, the options look to be VMware, StarWind and StorMagic. I need to do more comparison among the four to see where the trade-offs are.


05-14-2013 08:54 AM


Re: VSA design and performance

Your workload profile looks right in line with the VSA design. If you want more bandwidth and don't want to go 10Gb, you can always use LACP if your switches can handle it. The VSA's limitation is that it can only use one vNIC (I believe), but there is no software limit on the amount of bandwidth that vNIC can use, so if you can provide it with 2+Gb, you will see that benefit in the VSA.
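Whether an aggregated link helps depends on how traffic is split. Link aggregation (LACP or static) typically assigns each flow to one member link by hashing header fields, so one initiator-to-VSA conversation stays at a single link's speed, while traffic from several hosts and peers can spread across members. The sketch below uses CRC32 of the address pair as a hypothetical stand-in for whatever hash the switch or vSwitch actually applies; the IP addresses are made up.

```python
import zlib
from collections import Counter


def member_link(src_ip: str, dst_ip: str, links: int) -> int:
    """HYPOTHETICAL flow hash: picks a member link from the address pair."""
    return zlib.crc32(f"{src_ip}->{dst_ip}".encode()) % links


LINKS = 2  # e.g. two 1Gb uplinks in one team

# A single initiator talking to a single VSA always hashes to the same link.
single = {member_link("10.0.0.11", "10.0.0.50", LINKS) for _ in range(1000)}
print("links used by one flow:", len(single))

# Several initiator/target pairs: each pair is pinned to one link,
# but different pairs can land on different links.
flows = [(f"10.0.0.{h}", f"10.0.0.{t}") for h in range(11, 14) for t in range(50, 53)]
print("flows per link:", Counter(member_link(s, d, LINKS) for s, d in flows))
```

So a bonded pair raises aggregate capacity for many flows, not the speed of any single flow.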

I'm definitely concerned about the future of the VSA line at HP, as their last TWO "major" upgrades (v10 and v10.5) really added very few new features beyond official support for 2 vCPUs (which already worked anyway). The other VSA solutions are interesting alternatives. If you are really married to ESX, VMware's VSA might be the way to go if the price is right, but I don't think it has full feature parity yet, and last time I checked StarWind ran on top of Windows, which isn't ideal if you are an ESX shop. Another alternative is the built-in iSCSI target in Windows Server 2012, if its features fit your needs. It's not as advanced as HP's offering is today, but if this line is dead and the M$ one is actively developed, it will be a great solution (especially given that it shouldn't cost you anything beyond the licenses you already have to buy!).


05-14-2013 01:33 PM


Re: VSA design and performance

I could run 2 pNICs trunked on the vSwitch that the VSA vNIC uses, but I would need to use 4 pNICs to do this, because my 2910al switches don't stack, and for network redundancy I need links into each switch. I happen to have 2 unused pNICs in each server, so it's not a big deal, but I'd probably just drop in a 10GbE card, direct-connect, and call it a day. More bandwidth, less work, less to go wrong.

I am married to VMware. I've used it since 2004 and we are invested in it, so there's no reason to throw that investment away. It would be a massive undertaking to convert to Hyper-V anyway, with no real benefit IMO.

VMware's VSA is an option, but it appears to be less featureful than HP's. It doesn't scale beyond 3 hosts, so going to a 4th host isn't possible. VMware VSA is lockstep on writes: it doesn't acknowledge a write until it has been written to 2 places. Does HP do the same, or does it acknowledge after the initial write? I'm not sure how VMware VSA handles reads. HP VSA reads across all nodes, but I can't find anything that says what VMware does. Ultimately, I think VMware's VSA is really designed for very small environments, so the scalability into real medium-size environments isn't there. The LeftHand stuff is designed to get further into the medium space IMO.

I think VMware will expand VSA capability over time, since software-defined storage is the big buzzword this year, and it makes sense for them to control your storage layer virtually and let you run whatever you want, from small through enterprise deployments. With 10GigE and hypervisor hosts that can hold a bunch of disks but normally sit empty, it kind of all makes sense across the board, regardless of deployment size. That will rub their technology partners (SAN manufacturers) the wrong way, but I can see it coming. Anyway, that's off topic...

StarWind does need to run on Windows, and unless they can run on Windows inside a VM and still access the storage they need, that rules them out entirely, as I'm looking to use local disk in my ESXi hosts, not add dedicated storage hosts. That would leave StorMagic as the only other option, but they also appear to be less featureful than HP. For example, StorMagic doesn't indicate that they have any VAAI support. On the flip side, there are no 10TB limits with StorMagic, which makes their sizing for large storage, and licensing, much more cost-effective.

Given the recent refresh to G8 hardware and the new StoreVirtual branding, I think HP will continue to develop the LeftHand platform. Unless you want to go to StoreAll and 3PAR ($$$$$), their small/medium offerings are all traditional SAN/NAS, which isn't in line with the push to software-defined storage. Keeping the LeftHand line alive and well lets them cover the full range of price points. But who knows in the end.


05-14-2013 07:24 PM


Re: VSA design and performance

Oh, I really do hope HP keeps developing their StoreVirtual product line, as at one time it was groundbreaking, and I know it could be again in the future.

I'm tied to Hyper-V, so I can't say I know all the features HP VSAs have with ESX, but I think the read performance isn't AS good on ESX as it is with the HP DSM for Microsoft. I think I read/heard that it only reads/writes through one VSA per connection, but I'm not sure there. I know the manual spells it out clearly, so you can read up on that before you make a decision.

HP does the same with write confirmations (not until it's written to both nodes for NR10). This is enterprise data we are talking about :) safety always first. For the money, you really can't beat the HP VSA for safety and availability.


05-15-2013 08:22 AM


Re: VSA design and performance

What oikjn said is true regarding how the VMware hosts communicate with the virtual SAN. Any given volume is accessed through a single VSA, called the gateway connection, or as of v10.5, the active connection. So in theory, a write issued to the virtual SAN goes to the gateway VSA and then may have to be issued to two other VSAs in the cluster, assuming the gateway isn't hosting the appropriate stripe. How writes are actually handled doesn't appear to be documented anywhere.
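The gateway behaviour described above can be turned into a rough expectation for write traffic. This is a minimal sketch under stated assumptions, since the real write path is undocumented: each stripe's copies sit on a set of nodes chosen uniformly at random, and the gateway VSA forwards a copy to every holder other than itself.

```python
from fractions import Fraction
from itertools import combinations


def expected_forwarded_copies(nodes: int, copies: int = 2) -> Fraction:
    """Expected number of copies the gateway VSA must send to other nodes per write.

    ASSUMPTIONS (not documented HP behaviour): each stripe's `copies` replicas sit
    on a set of nodes chosen uniformly among all such sets, and the gateway forwards
    a copy to every replica holder other than itself.
    """
    if not 1 <= copies <= nodes:
        raise ValueError("need 1 <= copies <= nodes")
    gateway = 0  # by symmetry, any node can play the gateway
    placements = list(combinations(range(nodes), copies))
    # If the gateway holds a copy, it writes that one locally and forwards the rest.
    forwarded = sum(copies - (gateway in p) for p in placements)
    return Fraction(forwarded, len(placements))


for n in (2, 3, 4, 6):
    print(f"{n} nodes, NR10: {expected_forwarded_copies(n)} forwarded copies per write")
```

Under these assumptions, enumerating placements gives 1 forwarded copy per write for 2 nodes, 4/3 for 3 nodes and 3/2 for 4 nodes, matching copies x (1 - 1/n). In a 2-node NR10 cluster the gateway always holds one copy, so every write crosses the network exactly once; adding nodes pushes the average toward 2.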

The VSAs can generate heavy amounts of network traffic. If you have the resources to separate them onto their own vSwitch (pNICs), it's a good idea. I had latency issues with my iSCSI traffic sharing a vSwitch with the VSA.


05-22-2013 10:02 AM


Re: VSA design and performance

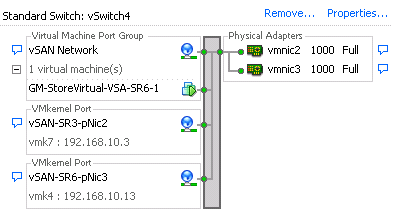

Regarding splitting out the VSA VM from the iSCSI traffic, are you guys using both eth0 and eth1 on the VSA for this? I feel like I'm missing something here. I've disabled eth1 and have the VSA and the VMkernel ports on the same vSwitch. Are you suggesting I change this? If so, I assume they'd be on the same subnet? Here is a screen cap of my current vSwitch. Any pointers greatly appreciated!

Chris


08-15-2013 09:14 AM


Re: VSA design and performance

In my experience, I would go with an active/standby uplink configuration for the VSA; it's more stable.

Also, in almost any case you won't get more than 1Gb. That is by design (1 working interface on the VSA, the ESX iSCSI initiator going through 1 link, the VMware load-balancing algorithm, etc.).

Also remember that you will have replication traffic between VSAs (but 1Gb full duplex helps).

But this is a virtual appliance. I think 1Gb is quite OK for its normal tasks. Even 100Mb seems to work :)

Also, I doubt you will get much more out of a 10Gb uplink. Pulling 10Gb of bandwidth through a VM without root access and tuning is quite complex :).

I've written some notes on VSA here; they will probably be useful.


08-23-2013 05:42 AM


Re: VSA design and performance

My question is: if you have two nodes plus a 3rd for quorum, with ESXi, does the third node ever take requests and relay them? If so, that would be a serious choke point, since it has no data.

If ESXi would only access two nodes, then VMs running on the same host as a VSA would have a 100% local read hit rate but would still have to write to both nodes?


08-23-2013 08:00 AM


Re: VSA design and performance

Assuming your third node is a FOM (Failover Manager)... no, it will not receive any data requests from your initiators. Only nodes INSIDE the cluster handle iSCSI requests, and only for the LUNs inside that cluster.


08-29-2013 04:06 AM


Re: VSA design and performance

So two nodes would be faster for a VM on a VSA node, since you have a 100% chance of reading all data from localhost and a 100% chance of writing all data to localhost (but you have to wait for the 2nd host to ACK the write).

I'd be curious how this compares to using more nodes, where the remote data percentage would go way up.


08-29-2013 10:34 PM


Re: VSA design and performance
