07-05-2017 08:38 AM

Can replication go faster?

Is there any way to speed up Nimble replication? There seems to be a relatively hard limit of ~1000Mb/s. I assume replication is a very low-priority task so that it does not impact production traffic, but even in our off hours, replication never gets much past 1000Mb/s, and this is coming from an AF7000 that can certainly push some IOPS. We don't have any replication QoS configured.

07-12-2017 05:40 AM

Re: Can replication go faster?

I haven't run into any replication issues with my deployments but I'm curious to hear the follow up to your question - did you reach out to Nimble Support to see if there is something that could be causing the bandwidth ceiling you're noticing?

07-12-2017 06:08 AM

Re: Can replication go faster?

I did open a ticket. They pulled a core dump to see what the deal is. However, I am seeing this same ceiling on all of our 8 or 10 arrays all on 3.7 code. We just upgraded the dev/test array to 3.8.0.1 and the SFAs to 4.3... so I am not sure if we'll maybe see an improvement, but the release notes don't indicate there are any replication fixes. We also have a volume on a CS5000 that will only replicate at like 200MB/s when other volumes on the array replicate at that mystery 1000Mb/s ceiling. It's all somewhat strange.

07-12-2017 06:15 AM

Re: Can replication go faster?

I should also mention we have AFs, which I would expect to replicate very quickly... but nope. Same ~800-1000MB/s ceiling.

07-12-2017 06:17 AM

Re: Can replication go faster?

With synchronous replication on the horizon I would imagine any throughput bottlenecks within NOS or controllers will be actively addressed. I know Nimble has plenty of service providers that accept replication points from customers so I'm hopeful they'll be able to point you in the right direction on how to exceed the cap. Thank you for the update!

07-13-2017 07:14 AM

Re: Can replication go faster?

It doesn't specify in the specs, but are the management ports 1 GbE? Usually replication is configured to run through those ports. That would definitely limit your replication speed if they are.

07-13-2017 07:25 AM

Re: Can replication go faster?

Thank you for posting this question. I am glad you have opened a support case; Nimble Storage Support should be able to answer your question using the support data and statistics coming from the arrays.

Without seeing your statistics, I would like to at least provide some guidance regarding your question.

Yes, there are system resource constraints and design limitations in the replication engine. They show up mostly when the data is randomly written on the RAID, which makes the replication message read requests take more time, even from SSDs. While the "slow blocks" are being retrieved, any other problem that may exist (network congestion or packet loss) will degrade performance even more, since the messages will not be sent out at the right time (or will have to be retransmitted), and the system cannot move on to the next blocks until the data in the TCP buffer has moved onto the network.

In your particular situation, your statement that 1000Mbps is the "ceiling" on multiple arrays makes me wonder whether some network path crosses a 1Gbps switch. I have seen replication run as fast as 230MiBps (~1.9Gbps) on the 10Gbps interface card when the data was laid out favorably on the RAID and multiple volumes were replicating at once. When you state that many of your arrays are "capped" at 1000Mbps, I cannot imagine that all of them have exactly the same data structure on RAID. I would expect the peak and average replication performance to differ from array to array. I would continue working with Nimble Storage Support and try to identify the common point across all your arrays that limits the bandwidth to 1Gbps.

Usually, the best way to maximize replication bandwidth is to replicate as many volumes as possible, for a good mix of data streams. With Nimble OS 3.x and 4.x the system replicates as many as 8 volumes at once, which lets it fetch blocks to replicate from all of them at the same time (some will be slower than others) and push them onto the network. However, I do not think it is possible to reach 800MiBps (6.7Gbps) with the current replication implementation, even with perfectly laid out sequential blocks in each RAID stripe and no other contention on the system or the network.

On the non-AFA platforms with Nimble OS 3.x or above, it is best to replicate more frequently, such as every 15 minutes, once initial seeding has completed. This should pull the data from the SSDs instead of the HDDs, which increases the read speed of the blocks to replicate and thus the bandwidth used for replication. Take care that the snapshots do not coincide (take them at different times of the day), to guarantee there are enough resources to complete all snapshot jobs before replication.
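As a rough illustration of the staggering advice above, the following Python sketch spreads start offsets for several snapshot schedules evenly across one replication interval. The helper `stagger_offsets` and the schedule names are hypothetical; this is not a Nimble OS API, only a way to work out offsets that would then be entered into the protection schedules by hand.

```python
from datetime import timedelta


def stagger_offsets(names, interval_minutes=15):
    """Spread start offsets evenly across one interval, in whole minutes."""
    if not names:
        return {}
    count = len(names)
    return {
        name: timedelta(minutes=(index * interval_minutes) // count)
        for index, name in enumerate(names)
    }


if __name__ == "__main__":
    # Hypothetical schedule names, for illustration only.
    schedules = ["sql-prod", "vmfs-prod", "file-shares"]
    for name, offset in stagger_offsets(schedules).items():
        minutes = int(offset.total_seconds() // 60)
        print(f"{name}: start at +{minutes} min into each interval")
```

Integer minutes are used because schedule start times are typically set to the minute; with more schedules than minutes in the interval, some offsets will repeat.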

I hope the information I have provided is useful and leads you to the answer to your original question. If possible, please post the final resolution and the isolation from Support here.

07-13-2017 07:44 AM

Re: Can replication go faster?

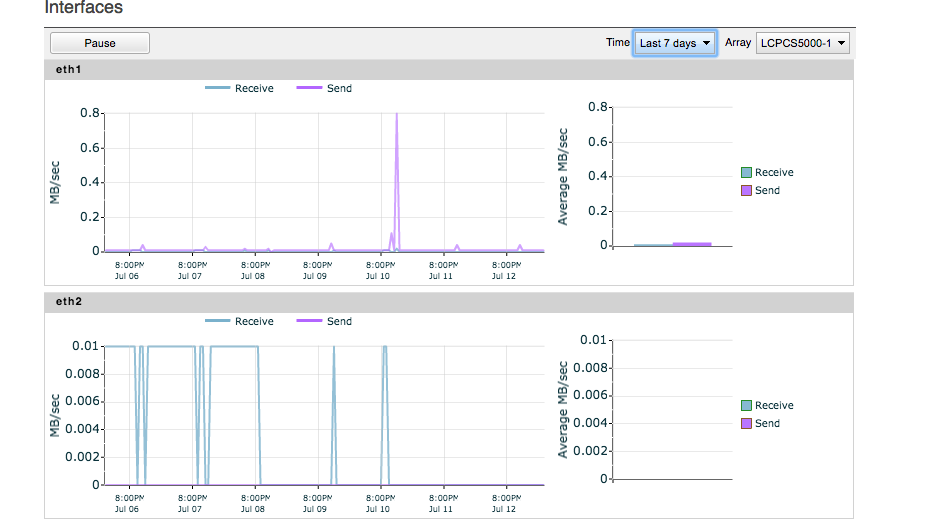

Our management ports are indeed 1G, but I am certain that this traffic is going over 10G. See the screenshot. The configuration as well as the interface stats support 10G all the way. Additionally, the arrays all live on an isolated 10G flat switching fabric, so there are no 1G hops anywhere.

Regarding streams and such, we are replicating ~40 volumes out of 80 in the group, so I would expect that to be a reasonable mix.

Thanks for all of the insight. I'll keep poking with engineering.

07-13-2017 07:51 AM

Re: Can replication go faster?

Guess it couldn't be simple. This came immediately to mind as we just moved our replication traffic around. Not for the speed (we're limited to a 100 Mb connection to our DR site), but so we could put the replication traffic on a different subnet and route it over a different connection than normal daily traffic.

Good luck getting this resolved and seeing faster speeds.

07-13-2017 08:13 AM

Re: Can replication go faster?

07-13-2017 08:33 AM

Re: Can replication go faster?

I do know for a fact one of the volumes is a special pig that has been trying to replicate about 5Tb for ~9 days now. The Labs data bear that out (very cool BTW, didn't know that was there).
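To put that volume in perspective, here is a small back-of-the-envelope sketch of the average rate implied by moving about 5 TB in about 9 days. It assumes "5Tb" means roughly 5 terabytes (both the decimal and binary readings are computed) and that the whole amount had transferred in that time, so the result is an upper bound on the average. Under those assumptions the figure lands at roughly 50 to 60 Mb/s, far below the ~1000Mb/s ceiling discussed above.

```python
def average_mbps(total_bytes, seconds):
    """Average rate in megabits per second (decimal mega, 10**6)."""
    return total_bytes * 8 / seconds / 1e6


NINE_DAYS = 9 * 24 * 3600  # seconds

# Assumption: "5Tb" in the post means about 5 terabytes of data.
decimal_tb = average_mbps(5 * 10**12, NINE_DAYS)  # 5 TB, base 10
binary_tib = average_mbps(5 * 2**40, NINE_DAYS)   # 5 TiB, base 2

if __name__ == "__main__":
    print(f"5 TB  in 9 days: about {decimal_tb:.1f} Mb/s")
    print(f"5 TiB in 9 days: about {binary_tib:.1f} Mb/s")
```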

I put it in a Google Sheet for the morbidly curious: Replication Timeline - Google Sheets

07-13-2017 01:50 PM

Re: Can replication go faster?

As I was reading through this thread, something stood out to me in the screenshot of the replication interfaces. They are labeled Eth1 and Eth2. These are the onboard interfaces, which are not generally used for data traffic. I will assume you are using Eth1 for management only. Is the second onboard interface, Eth2, configured for management failover, dedicated to replication traffic, or running iSCSI connections? You mentioned that management is connected at 1Gb. Is Eth2 connected to that same switch, or is it connected to your 10G iSCSI switch?

I work at HPE

HPE Support Center offers support for your HPE services and products when and how you need it. Get started with HPE Support Center today.

[Any personal opinions expressed are mine, and not official statements on behalf of Hewlett Packard Enterprise]

07-14-2017 09:46 AM

Re: Can replication go faster?

Eth2 is management failover on a 1G OOB network. I am super thoroughly sure that there is no replication traffic going over any 1G link.

07-18-2017 07:51 AM

Re: Can replication go faster?

I actually opened a ticket on this last week. Our current setup is 2 AF5000s replicating over a 300Mbps fiber link with Riverbed WAN optimizers on both ends. Monitoring through the Nimble replication monitor shows an average transfer of around 200Mbps with peaks up to 400Mbps. But when monitoring from the routers and WAN optimizers, it's only peaking at 65Mbps. So I wanted to push the envelope and see if we could get more out of our 300Mbps line (like our other brand of arrays, the one that starts with an "N", does). No such luck: I was told that the issue is the limited number of replication streams built into the Nimble replication engine code, which is 8. Our other "N" brand array will do 25 streams, which is why we see it utilize our line more.

I was told it is on someone's plate to work on the replication engine code to allow for more streams, but there is no ETA on it.

07-18-2017 11:55 AM

Re: Can replication go faster?

Thank you for the reply. 300Mbps is a low amount of bandwidth for any Nimble Storage array to fill using 8 streams (volumes) replicating at once.

There might be some confusion between Mbps and MiBps. 1MiBps = ~8.4Mbps, so using the figures you have provided, 400Mbps = ~47.7MiBps. Strictly speaking, a mebibyte is 1024 kibibytes, whereas a megabyte is 1000x1000 bytes; most people in the computer industry are used to thinking that a megabyte is 1024x1024 bytes, which strictly it is not. Yet many operating systems still report storage as "Mega" while using base 2 (1024). (A simple confirmation is to look at the "Bytes" value of the "Page" file on your Windows PC: it will be base 2^x.) This should explain why you see a peak bandwidth of 400 on the array while the path device shows a figure roughly 8 times lower at the same time.
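The conversions quoted in this thread can be checked with a few lines of Python. This is only an arithmetic sketch; the function names are made up for illustration, and "Mbps" is taken to mean 10^6 bits per second while "MiBps" means 2^20 bytes per second.

```python
MIB = 2**20  # bytes in one mebibyte


def mibps_to_mbps(mibps):
    """Mebibytes per second to megabits per second (10**6 bits)."""
    return mibps * MIB * 8 / 1e6


def mbps_to_mibps(mbps):
    """Megabits per second (10**6 bits) to mebibytes per second."""
    return mbps * 1e6 / 8 / MIB


if __name__ == "__main__":
    print(f"1 MiBps   = {mibps_to_mbps(1):.2f} Mbps")
    print(f"400 Mbps  = {mbps_to_mibps(400):.1f} MiBps")
    print(f"230 MiBps = {mibps_to_mbps(230) / 1000:.2f} Gbps")
    print(f"800 MiBps = {mibps_to_mbps(800) / 1000:.2f} Gbps")
```

The factor between the two units is about 8.4, which comes from the 8 bits per byte multiplied by the small base-2 versus base-10 difference.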

Regarding the replication streams: each stream is a volume snapshot that is being replicated. In Nimble OS 3.x there are 8 streams, as you point out. The reason there are multiple streams is to let the system read enough data from the disks to saturate the network link across a variety of customized schedules. Each volume's snapshot has its own challenge in reading data from disks, since it needs to find the blocks to replicate. However, this challenge only comes into play at much higher bandwidth, such as 2Gbps, and usually on Hybrid arrays, where HDD spindles are involved. Regardless of how randomly the data is laid out on RAID on the AFAs, 8 streams is definitely enough to saturate a 300Mbps link.

All 8 streams replicate over a single TCP session. A TCP session depends on the reliability of the network path. I believe the other "N" might be using multiple TCP sessions (regardless of streams). On a non-optimal network path, that would let its replication use more of the advertised link speed. A non-optimal network path does not just mean network errors or physical device issues. It can also mean other traffic (congestion) or some kind of filtering (Proxy, Firewall, IPS/IDS, Optimizer). There have been quite a few cases where a device meant to improve some aspect of network transmission (Proxy, Firewall, IPS/IDS, Optimizer) has interfered with the TCP transmission to the point where it cannot saturate the link. I cannot say this is the case in your situation, but what I can say is that the Nimble Storage array replicates compressed data blocks, so there is no reason to add a "WAN optimizer" on the path.
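Why one TCP session can fall short of the link speed while many sessions fill it can be sketched with two textbook estimates: the window limit (window size divided by round-trip time) and the Mathis et al. loss approximation (MSS/RTT times about 1.22 over the square root of the loss rate). The RTT, window, and loss values below are hypothetical and are not measurements from this thread; only the 300Mbps link and the 25 flows of the other array come from the posts above.

```python
import math


def window_limited_mbps(window_bytes, rtt_seconds):
    """Ceiling for one flow when at most one window is in flight per RTT."""
    return window_bytes * 8 / rtt_seconds / 1e6


def loss_limited_mbps(mss_bytes, rtt_seconds, loss_rate):
    """Mathis et al. approximation for one flow under random packet loss."""
    return (mss_bytes * 8 / rtt_seconds) * (1.22 / math.sqrt(loss_rate)) / 1e6


def aggregate_mbps(per_flow_mbps, flows, link_mbps):
    """Several independent flows add up until the link itself is full."""
    return min(per_flow_mbps * flows, link_mbps)


if __name__ == "__main__":
    # Hypothetical path: 20 ms RTT, 256 KiB window, 0.01% packet loss.
    rtt = 0.020
    one_flow = min(
        window_limited_mbps(256 * 1024, rtt),
        loss_limited_mbps(1460, rtt, 0.0001),
    )
    print(f"one flow:  about {one_flow:.0f} Mbps")
    print(f"25 flows:  about {aggregate_mbps(one_flow, 25, 300):.0f} Mbps")
```

With these assumed numbers a single flow stays well under a 300Mbps link while 25 flows reach it, which matches the behavior described in the post; real paths will differ, so packet captures remain the way to confirm.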

What I would suggest in your situation, if possible, is to bypass the Riverbed device completely as a test (not just set a "bypass" rule, but not route through it at all). After you pause/resume replication (this re-initiates the TCP session), you might be able to see the expected 300Mbps while there is no congestion (such as from the "N" device). If not, then network packet captures are in order; they would help determine whether something else on the path is interfering (look for retransmits, out-of-order packets, TCP resets, etc).
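For the packet-capture step, one possible starting point is a Wireshark display filter that isolates the symptoms listed above. The sketch below only builds a `tshark` command line; it assumes a capture has already been saved to a file (the name `replication.pcap` is hypothetical) and uses port 4214, which a later post in this thread identifies as the Nimble replication port.

```python
import shlex


def tshark_command(capture_file, port=4214):
    """Build a tshark command that lists suspect TCP packets on one port."""
    display_filter = (
        f"tcp.port == {port} and ("
        "tcp.analysis.retransmission or "
        "tcp.analysis.out_of_order or "
        "tcp.flags.reset == 1)"
    )
    # -r reads a saved capture, -Y applies a display filter.
    return ["tshark", "-r", capture_file, "-Y", display_filter]


if __name__ == "__main__":
    # Hypothetical capture file name; print the command instead of running it.
    print(shlex.join(tshark_command("replication.pcap")))
```

A long list of matches, especially retransmissions clustered in time, would point at the network path rather than at the array.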

In the case of the original poster, the 1Gbps limit is interesting. I hope Nimble Storage Support will provide some guidance on why it appears limited to this specific value.

07-18-2017 12:03 PM

Re: Can replication go faster?

There is no Riverbed device... we don't own any Riverbed at all. This is all on a single flat VLAN. I am not confusing Mb vs MB vs MiB. As you can see from the graphs above, this is indeed pushing Mb.

07-18-2017 12:09 PM

07-18-2017 01:52 PM

Re: Can replication go faster?

See graphs below as they are all measured in Mbps for the last 24 hours...

Nimble Replication Monitor showing peaks around 300Mbps....

Application stats from Riverbed

*NOTE Port 4214 is Nimble Replication, Port 11105 is the "N" array

Riverbed does reduce Nimble replication traffic by over 77%! So we are reluctant to just bypass it...

Netflow graph of WAN line traffic

NOTE: "N" array replicates from 23:00~03:00. Nimble AF replicates every 4 hours

As you can see the 4 hour Nimble snaps that replicate are only using about 1/6th of the line.

07-18-2017 02:46 PM

Re: Can replication go faster?

Out of respect for the original poster, if further conversation is required, I would ask you to start another thread where your particular issue can be addressed.

I can see that Riverbed and Nimble are both reporting in Mbps, which is good. The question is, why is Riverbed reporting a much smaller peak than Nimble? Where is the data going? For the GUI graph on the Nimble Storage array to show a value, the replication buffer needs to be confirmed to have sent the data, which means the TCP buffer was purged, which means that something has acknowledged that the downstream group received the data.

From further research: Riverbed is only reporting the amount of traffic after it has "deduped" or further "optimized" the data. From Riverbed: "RiOS collects application statistics for all data transmitted out of the WAN and primary interfaces and commits samples every 5 minutes." (SteelHead EX Management Console User’s Guide)
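A quick consistency check on the numbers posted earlier in the thread: if the 65Mbps peak seen at the routers and WAN optimizers is measured after optimization, and the "over 77%" reduction holds at peak (both are assumptions, not confirmed figures), the implied pre-optimization rate comes out at roughly 283Mbps, close to the ~300Mbps peaks the Nimble replication monitor shows. The function name is made up for illustration.

```python
def pre_optimization_mbps(wan_mbps, reduction):
    """LAN-side rate implied by a WAN-side rate and a fractional reduction."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in the range [0, 1)")
    return wan_mbps / (1 - reduction)


# Figures quoted in the thread; applying them together is an assumption.
implied = pre_optimization_mbps(65, 0.77)

if __name__ == "__main__":
    print(f"implied rate before optimization: about {implied:.0f} Mbps")
```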

Looking at the "per flow statistics" from Riverbed, I believe they show what I was trying to explain: the Nimble array is sending the maximum it can with a single TCP session, while the "N" device has very low throughput per flow but uses very many flows to achieve its peak. On average Nimble actually sends more data (23Mbps). Riverbed reports data after the "optimization", so it looks like the data from "N" is not as "optimizable" because it is different. But for a fair comparison between, let's say, the "N" device and a Nimble Storage array, one would have to set up a clean lab environment with completely identical workloads and network paths, not to mention the TCP congestion control algorithms, scaling factors, etc.

I understand you are reluctant to remove Riverbed from the path due to reduction rate, and you may operate as you prefer. There are considerations of WAN limits from ISP on data sent, etc. I would, however, do it at least temporarily to test what changes it brings. If you are interested in further pursuing this matter, please post a new thread and we can move the conversation there.

Sorry for hijacking the thread! I do hope the information provided is good information for anyone stumbling upon this thread.

07-18-2017 02:58 PM

Re: Can replication go faster?

You do not have to remove the Riverbed appliance from the path to get the results you are looking for. An exception can be configured in the pair of Riverbed appliances allowing replication traffic from the arrays to pass through un-optimized. It's been quite a while since I have done this, so I don't remember exactly where it is configured. I think it was part of the inline optimization rules. I do remember it was pretty easy to configure, though.

I work at HPE


07-18-2017 03:03 PM

Re: Can replication go faster?

@jonathan

In a perfect world, yes. However, it has been my experience that at times a device can "hold on to" its old rule. In fact, we have seen firewalls unable to release a TCP session until reboot. So, to be cautious and get a proper test, I suggested re-routing around it.

07-30-2017 08:09 AM

Re: Can replication go faster?

Stay tuned to this space. We escalated this, and it appears there is an issue in 4.x code on SFA arrays. The rate limits are being applied incorrectly, causing issues with internal scheduling and thereby over-throttling garbage collection and deletes. My assumption is that replication is also potentially affected. I should be getting the bug description and RCA soonish, as engineering just met on this this past Friday.

09-14-2017 09:04 PM

Re: Can replication go faster?

Yes. Replication can go faster.