MPIO or iSCSI MC/S (MCS)

08-23-2013 10:30 AM

Since we will be iSCSI only, do you think MC/S would be a better option than MPIO for connecting our file server to a Nimble volume?

08-28-2013 04:13 PM

08-29-2013 02:14 AM

Re: MPIO or iSCSI MC/S (MCS)

Sean, I'd also refer you to the excellent Windows MPIO setup script that Adam Herbert wrote. It will set up all the MPIO settings and paths for you.

Check it out here:

Automate Windows iSCSI Connections

Cheers

Rich

08-29-2013 02:36 AM

Re: MPIO or iSCSI MC/S (MCS)

I too would use MPIO rather than MCS. You must, must make sure that you are multipathing correctly (using all paths). Your switching setup will determine the number of paths.

The Nimble monitoring pages (the interface monitor in particular, if you can distinguish between normal activity and normal plus SQL activity) can help you from the array side, and good ol' Task Manager can of course give you a steer.

08-29-2013 06:39 AM

Re: MPIO or iSCSI MC/S (MCS)

Sean -

This is for our SQL servers specifically, but it may help with your file servers if bandwidth and performance are critical. We have three 10Gb NIC ports (across different NIC cards) on each of our two SQL cluster nodes, going to Arista switches. The connections are split over two Arista switches for additional redundancy. One 10Gb port on each host goes to a different Arista for general network connectivity.

Each Nimble volume we present to our two-node SQL cluster has one path per portal/NIC IP, so three paths per volume per host, and six paths per volume across both nodes.
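For anyone sizing a similar setup, the path count above is simple multiplication. The sketch below just restates that arithmetic; the variable names are illustrative and the values are the ones quoted in this post, not anything read from the array or the initiator.

```python
# Assumed values taken from the description above; names are illustrative.
hosts = 2              # nodes in the SQL cluster
portals_per_host = 3   # iSCSI NIC IPs per host, one session/path each

# One path per portal/NIC IP, so paths per host equals the portal count.
paths_per_volume_per_host = portals_per_host * 1
paths_per_volume_total = paths_per_volume_per_host * hosts

print(paths_per_volume_per_host, paths_per_volume_total)
```

With different NIC counts or more cluster nodes, only the two inputs change.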

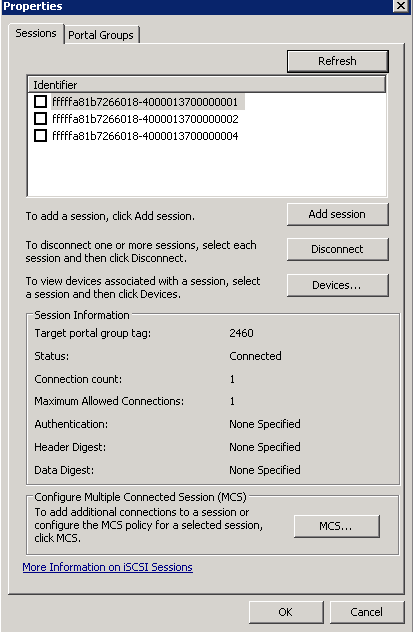

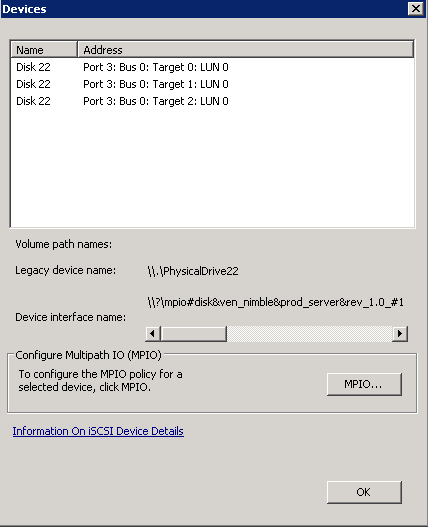

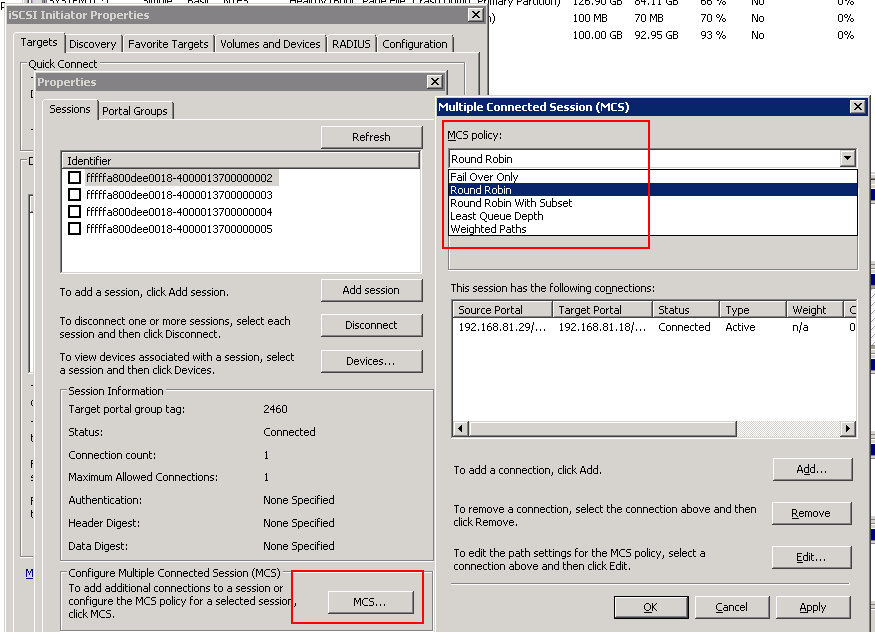

Once you set up MPIO, at least in our setup, MC/S is also set up for each discovery portal session/NIC IP (see screenshot).

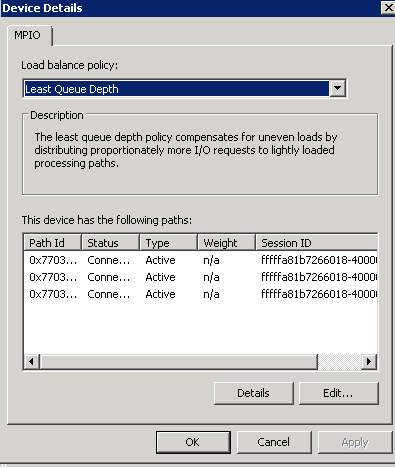

Here is what I configured. Under MPIO, make sure you have multiple paths and switch to Least Queue Depth as your load balance policy. Under MC/S, I would switch from Round Robin to Least Queue Depth as well.

Here are some screenshots. The first shows three paths/sessions per volume. The second is the Devices tab, where you should see three volumes, one for each session. The third is the MPIO settings under the Devices tab. Least Queue Depth should yield your best "load balanced" policy. I also changed the MCS portal sessions to Least Queue Depth (under the MCS setting on the Sessions tab, first screenshot).
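To illustrate why Least Queue Depth can balance better than Round Robin when paths are unevenly loaded, here is a toy model. It is a sketch of the general idea only, not how the Windows MPIO driver is implemented; the path names and outstanding-I/O counts are made up.

```python
from itertools import cycle

def round_robin(paths):
    """Return an iterator that rotates through paths, ignoring load."""
    return cycle(paths)

def least_queue_depth(queue_depths):
    """Return the path with the fewest outstanding I/Os (ties: first listed)."""
    return min(queue_depths, key=queue_depths.get)

# Hypothetical outstanding-I/O counts on three paths to one volume.
outstanding = {"path-A": 4, "path-B": 0, "path-C": 2}

# Round robin: the next four I/Os go A, B, C, A regardless of load.
rr = round_robin(list(outstanding))
rr_choices = [next(rr) for _ in range(4)]

# Least queue depth: each new I/O goes to the least busy path.
depths = dict(outstanding)
lqd_choices = []
for _ in range(4):
    chosen = least_queue_depth(depths)
    lqd_choices.append(chosen)
    depths[chosen] += 1  # the new I/O is now outstanding on that path

print(rr_choices)
print(lqd_choices)
```

In this model Round Robin keeps sending I/O to the already busy path-A, while Least Queue Depth favors path-B until its queue catches up with the others.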

Hope this rambling helps in some way. If you have any questions let me know.

FYI, for SQL on Nimble we are seeing anywhere between 24,000 and 50,000 IOPS for sequential writes, compared to our old HP EVA SAN, which yielded about 2,000 to 9,000 IOPS. Even though this setup may be a little overkill for most, on our OLTP system (AX) redundancy and performance are absolutely critical.

JD

10-10-2013 11:19 AM

Re: MPIO or iSCSI MC/S (MCS)

Unfortunately, the script is also connecting the volumes we use to house the VMs. However, I cannot get MPIO to function correctly outside of the script. Outside the script, it only sees a single session over the multiple paths.

10-10-2013 11:24 AM

Re: MPIO or iSCSI MC/S (MCS)

Sean, you should limit access via initiator groups on the array. That will keep these connections from being made to the wrong volumes.

10-10-2013 11:30 AM

Re: MPIO or iSCSI MC/S (MCS)

I have done this, but for some reason all of the datastores created to host VMs also show up, even though only the ESXi hosts should have access. However, I was able to get only the datastore set up for SQLTest by filtering with: `$_.Target -match "com.nimblestorage:sqltest"`.
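For readers not working in PowerShell, the same kind of filter can be sketched in Python. The target names below are invented for illustration and are not real IQNs from any array. PowerShell's `-match` is a case-insensitive regular-expression match, so the sketch uses `re.IGNORECASE`; it also escapes the dot, which the original pattern leaves as a wildcard.

```python
import re

# Hypothetical discovered target names; real IQNs will differ.
targets = [
    "iqn.2007-11.com.nimblestorage:sqltest-v1234.00000001.abcd",
    "iqn.2007-11.com.nimblestorage:vm-datastore01-v1234.00000002.abcd",
]

# Keep only targets whose name contains the SQL test volume prefix.
pattern = re.compile(r"com\.nimblestorage:sqltest", re.IGNORECASE)
selected = [t for t in targets if pattern.search(t)]

print(selected)
```

Filtering on the initiator side only hides the extra targets; restricting access on the array is still the cleaner fix.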

10-10-2013 11:32 AM

Re: MPIO or iSCSI MC/S (MCS)

Immediately after posting, I realized that I created these datastores using the Nimble vSphere plugin, which does not set up volume restrictions. I have added the ESXi group to the VM datastores.

10-21-2013 06:05 AM

Re: MPIO or iSCSI MC/S (MCS)

Hi All

I have just worked my way through this process, moving away from a single iSCSI connection to an MPIO environment. Just to be helpful, I wrote the process up on my blog, including references to the Nimble Support articles, here: http://www.talking-it.com/2013/storage/nimble/windows-2008-r2-enhancing-the-nimble-iscsi-connection/

Hope this helps someone.

Nick Furnell

12-05-2013 11:12 AM

Re: MPIO or iSCSI MC/S (MCS)

This MPIO vs. MCS issue is really confusing me. What I *thought* I understood is that MPIO and MCS both basically performed the same functions, but in a different manner. Then my thinking morphed into MCS as being equivalent to NIC teaming/port bonding, to use aggregation and increase throughput, while MPIO is intended for path redundancy, but sans the port/bandwidth aggregation for improved throughput. To me, it seems logical to want to use and have the benefits of both - maximized throughput via MCS and redundancy via MPIO. But the more I read, the less clear I am because of inconsistent information from different sources.

Some resources imply that MPIO and MCS are mutually exclusive and can't/don't need to be used simultaneously, while others imply they can be used together. Examples:

MPIO and MCS under Windows – Configuration in a Nutshell | moodjbow

Geek of All Trades: iSCSI Is the Perfect Fit for Small Environments | TechNet Magazine

Cosonok's IT Blog: Thoughts on Whether to Use Windows Server 2008 MCS or MPIO for iSCSI

I also opened a case with Nimble support (00147105) and they too verified that I don't need to make changes to MCS because it's not even used/supported by Nimble. Since the TechNet article above stated "in order to use MCS, your storage device must support its protocol", I'd assumed MCS was not needed.

Then a different Nimble tech confused things when he told me "our best practices recommend using MPIO over MCS...it appears that we do not support MCS at the moment", which seems even MORE confusing because it both says to configure MPIO "over MCS", and then says they don't even support MCS. So why is it a 'best practice' to configure something that is not even supported by the vendor?

However, John posted above to make setting changes to *both* MPIO and MCS. If only MPIO is used, and MPIO is what Nimble recommends, then what is the point of wasting time making changes to MCS settings? Someone marked his post as helpful so that must mean that he is on the right track by configuring *both* MPIO and MCS, right?

Also, page 49 of the Nimble WIT setup doc says to set up LQD for MPIO. But they said nothing re. MCS, which is also RR by default. Should that also be changed to LQD, as John indicated above? Because I cannot find any Nimble documentation on MCS, and their own support techs are giving me inconsistent answers, I'm hoping that someone here may know. Seems to me that MCS can be safely ignored, but this post has only further added to my lack of clarity on this issue.

OTOH, if I have paid for multiple 10Gb links, it'd be really great if I could make *full* use of them by 'bonding' them into an aggregate 20Gb link, while also having the failover capability back to 10Gb if one were to fail. That's how NIC port teaming works and I'd assumed this was also possible with iSCSI, but so far I have not found any clear explanation on this matter.

Thank you in advance to anyone who can clear this up.

12-13-2013 05:59 AM

Re: MPIO or iSCSI MC/S (MCS)

Dan, perhaps the comment "MPIO over MCS" was misinterpreted and both techs were stating the same thing. The second tech you spoke to meant that Nimble Storage best practice is to implement MPIO rather than MCS. The choices for multipath are mutually exclusive.