
Enable high-performance Ethernet storage through StoreFabric M-Series Ethernet switch family

Learn how data center networks built on HPE StoreFabric M-Series provide an ideal Ethernet Storage Fabric (ESF) optimized to deliver the highest levels of performance, industry-best latency and zero packet loss.

The storage landscape is changing rapidly. The result? Increasing demand for an efficient, reliable, storage-aware, high-performance network connecting servers and storage. Recent innovations in Ethernet switches are addressing these demands and keeping pace with new storage trends such as the growing adoption of cloud and object storage.

It's here: true Ethernet Storage Fabric

HPE StoreFabric M-Series Ethernet switches introduce the first true Ethernet Storage Fabric (ESF), allowing you to build a converged network capable of simultaneously handling compute and storage traffic. This Ethernet Storage Fabric supports converged and hyperconverged architectures with block, object and file storage, and its storage connectivity ranges from iSCSI and traditional arrays to the newest NVMe over Fabrics arrays. Optimized for storage networking, the switches use dynamically shared buffers to absorb bursty storage traffic and deliver low latency and predictable performance, maximizing data delivery and enabling scale-out network architectures. These are all crucial attributes for today’s business-critical storage environments.

Additionally, ESF supports storage offloads such as RDMA, which free host resources and increase performance. Not only are M-Series Ethernet switches specifically optimized for storage, they also provide better value than traditional enterprise storage networking. Simply put, faster storage requires faster networks, and the M-Series delivers just that.

Changes in the storage market

To say the storage market has undergone rapid change is an understatement. Looking at the storage market of 10 years ago, most would agree that these statements were generally true:

- All storage was based on spinning disk.1

- High-performance storage was block-accessed, connected via Fibre Channel.

- All enterprise storage was purchased as dedicated, integrated appliances from storage vendors.

- Cloud storage was not significant.

- Fibre Channel was faster than Ethernet.

Today’s market is much different, and faster storage demands more bandwidth. Increasing use of flash has dramatically raised the storage performance benchmark: a single NVMe SSD can saturate two or more 10Gb/s links, and all-flash arrays routinely require multiple high-bandwidth interfaces to achieve full performance. The latency of storage devices and arrays has fallen by a factor of 5 to 50, in some cases to as low as 100 µs (100 microseconds) instead of several milliseconds, and it continues to drop. Driven by multimedia, virtualization, technical computing, artificial intelligence and the Internet of Things, the bandwidth available on servers and required by workloads has rapidly increased as well. For example, editing and producing 4K video can require as much as 20Gb/s for a single uncompressed stream.
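The bandwidth figures above can be sanity-checked with simple arithmetic. The sketch below assumes DCI 4K resolution (4096 x 2160), 12-bit 4:4:4 sampling (36 bits per pixel) and 60 frames per second for the video stream, and a sequential read rate of 3.5 GB/s for the NVMe SSD. These are illustrative assumptions rather than figures from this article, and the function names are hypothetical.

```python
import math


def uncompressed_video_gbps(width: int, height: int, bits_per_pixel: int, fps: int) -> float:
    """Raw bit rate of an uncompressed video stream, in Gb/s."""
    return width * height * bits_per_pixel * fps / 1e9


def links_needed(device_gbps: float, link_gbps: float) -> int:
    """Number of links of a given speed needed to carry a device at full rate."""
    return math.ceil(device_gbps / link_gbps)


# Assumption: DCI 4K, 12-bit 4:4:4 (36 bits per pixel), 60 frames per second
stream = uncompressed_video_gbps(4096, 2160, 36, 60)
print(f"4K stream: {stream:.1f} Gb/s")

# Assumption: an NVMe SSD reading 3.5 GB/s, i.e. 28 Gb/s
ssd_gbps = 3.5 * 8
print("10GbE links needed:", links_needed(ssd_gbps, 10))
print("25GbE links needed:", links_needed(ssd_gbps, 25))
```

Under these assumptions the raw stream works out to roughly 19 Gb/s, and a single SSD needs three 10Gb/s links (or two 25Gb/s links) to run at full speed.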

In addition, most storage capacity growth is in file, object and hyperconverged infrastructure (HCI) rather than only in block, with analysts reporting that up to 80% of storage capacity is in the “secondary storage” category.2 This can include online backups, snapshots, replicas, test/development copies and archive data. The use of analytics is driving the growth of distributed file and object storage to support cloud, big data and machine learning. Many organizations are now deploying software-defined storage, which can be used as primary or secondary storage but is typically scale-out and more likely to be used for file/object/HCI storage than for block storage. Scale-out and hyperconverged storage typically generate heavy “east-west” traffic between server nodes and usually require Ethernet networks and a redesign of the network architecture.

The growth of cloud, both public and private (on-premises), has had a tremendous effect on the storage market. Large cloud service providers are buying and offering an increasing percentage of storage and generally use Ethernet as a single, converged network technology to connect all compute and storage. For all practical purposes, there is no Fibre Channel (FC) in the cloud.3 This is driving the adoption of Ethernet-connected storage, both among vendors and in large enterprises that copy cloud architectures to achieve cloud-like cost savings and flexibility. Cloud storage growth also promotes file and object storage, which is deployed on Ethernet-based networks, often using a scale-out, software-defined storage paradigm.

Rise of the Ethernet Storage Fabric

Ethernet technology has changed rapidly over the last few years, gaining new speeds and new features. The new speeds of 25, 40, 50 and 100GbE are being adopted rapidly by servers, flash storage arrays and the cloud. Sales of high-speed Ethernet ports are projected to grow robustly over the next several years, and all major server vendors already ship 25, 40 and 100GbE adapters for their servers. Ethernet offers roughly three times the bandwidth of Fibre Channel (100Gb/s vs. 32Gb/s) at lower latency and cost.

Storage switches must support lossless technology to ensure data delivery, along with Quality of Service (QoS), advanced congestion control mechanisms, and sophisticated telemetry, monitoring and management tools. The high volume of Ethernet port shipments and the large number of competing vendors are driving rapid advancement in Ethernet technology as well as falling prices. This in turn makes Ethernet a viable and cost-effective option for interconnecting storage.
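On Ethernet, lossless behavior is commonly provided by Priority Flow Control (PFC, IEEE 802.1Qbb), which pauses a single 802.1p priority on a link instead of the whole port. The sketch below models one port’s eight per-priority ingress queues with XOFF/XON hysteresis. The thresholds, the class name and the choice of priority 3 for storage are hypothetical, and real implementations also reserve headroom above XOFF for data already in flight.

```python
from dataclasses import dataclass, field


@dataclass
class PfcPriorityQueue:
    """Simplified per-priority ingress buffer with PFC XOFF/XON hysteresis."""
    xoff_bytes: int  # pause the sender once occupancy reaches this level
    xon_bytes: int   # resume the sender once occupancy drains to this level
    occupancy: int = 0
    paused: bool = False
    events: list = field(default_factory=list)

    def enqueue(self, nbytes: int) -> None:
        self.occupancy += nbytes
        if not self.paused and self.occupancy >= self.xoff_bytes:
            self.paused = True
            self.events.append("XOFF")  # would send a PFC pause frame for this priority only

    def dequeue(self, nbytes: int) -> None:
        self.occupancy = max(0, self.occupancy - nbytes)
        if self.paused and self.occupancy <= self.xon_bytes:
            self.paused = False
            self.events.append("XON")  # would send a zero-quanta PFC frame to resume


# Eight 802.1p priorities per port; thresholds are hypothetical values.
port = {prio: PfcPriorityQueue(xoff_bytes=100_000, xon_bytes=50_000) for prio in range(8)}

storage = port[3]        # assumption: storage traffic mapped to priority 3
storage.enqueue(60_000)
storage.enqueue(50_000)  # crosses XOFF: only priority 3 is paused
storage.dequeue(40_000)  # still above XON: stays paused
storage.dequeue(30_000)  # drains to XON: priority 3 resumes

print(storage.events)
print([p for p, q in port.items() if q.events])
```

Only the congested storage priority is paused; the other seven priorities on the same port keep flowing, which is what lets lossless storage traffic share a converged link with other traffic.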

The storage market changes described above are driving a shift toward Ethernet for storage connectivity. Ethernet offers more performance, lower pricing and more flexibility than FC, which can be used only for block storage and whose price typically precludes its use for secondary storage such as backups, data-mining replicas, test/dev copies or archives. Very large customers don’t want to pay for new, expanded or duplicate storage networks built on expensive hardware when they would still need to build an Ethernet network for compute, management and HCI traffic. Similarly, smaller organizations don’t want to pay high FC prices for new storage networks, and they frequently lack the necessary onsite expertise.

Most new cloud and HCI storage deployments use Ethernet connectivity, and trends indicate that an increasing percentage of networked storage will use Ethernet going forward. So while existing FC SANs and InfiniBand fabrics will generally remain in place for legacy applications running block storage, companies large and small are looking to build their new storage networks on more flexible, cost-effective Ethernet storage fabrics.

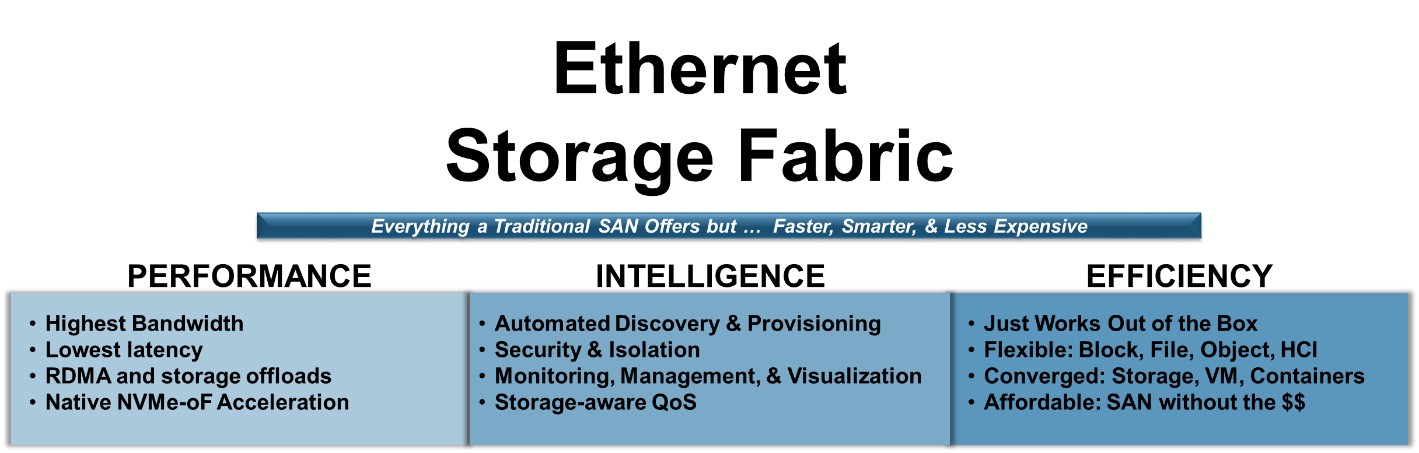

Deeper dive: Ethernet Storage Fabric (ESF)

The ideal storage switch would support all storage types (file, block and object) as well as HCI, cloud infrastructure and software-defined storage. It should also maintain high bandwidth at low latency, ensure delivery of data and support new media types such as NVMe. Ethernet is ideal for implementing a truly unified, converged fabric that can simultaneously support compute, communications and storage traffic efficiently and cost-effectively.

The rise of HCI, such as HPE SimpliVity, is a great example of the efficiency, ease of deployment and scalability that a converged fabric makes possible. Combining your entire infrastructure into one simple yet flexible fabric reduces the cost and complexity of your IT environment while delivering the bandwidth and reliability that hyperconvergence requires. The true power of hyperconvergence comes from full consolidation of software and hardware, made possible by deploying on a unified, purpose-built converged network infrastructure that can meet the demanding requirements of today’s high-performance storage. This is what we refer to as an Ethernet Storage Fabric. To be considered an Ethernet Storage Fabric, new switches dedicated to storage and HCI clusters should provide the following characteristics:

- High-performance—including high bandwidth, non-blocking, low-latency support for compute, communications and storage traffic

- Storage fabric intelligence—being able to understand, manage and monitor storage traffic, as well as integrate with storage and network management tools

- Efficiency—including port density, power usage efficiency, use and price flexibility

In addition, Ethernet storage switches should be easy to purchase, scale, upgrade and support, as well as backed by a trustworthy vendor with global reach and strong service offerings.

Figure 2: Ethernet Storage Fabric characteristics

HPE M-Series Ethernet Switch: Ideal for Ethernet Storage Fabrics

The new HPE StoreFabric M-Series is the first storage-optimized Ethernet switch capable of providing an Ethernet Storage Fabric (ESF). It offers the highest performance, measured in both bandwidth and latency, with 8 to 64 ports per switch and speeds from 1GbE to 100GbE per port. All M-Series models are non-blocking and provide enough uplink ports to build a fully non-blocking fabric. Not only is its port-to-port latency of 300 nanoseconds the lowest of any generally available Ethernet switch, but the silicon and software are also designed to keep latency consistently low across any mix of port speeds, port combinations and packet sizes.
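Two quick calculations help put the non-blocking and latency claims in context. A switch layer is non-blocking when its uplinks can carry all downlink traffic at line rate, that is, when the oversubscription ratio is 1.0 or less; and a 300 ns hop is small compared with the roughly 100 µs flash latency mentioned earlier. The port layouts below are hypothetical and do not describe specific M-Series models, and the latency model ignores queuing and cable delay.

```python
def oversubscription_ratio(down_ports: int, down_gbps: float, up_ports: int, up_gbps: float) -> float:
    """Server-facing bandwidth divided by uplink bandwidth; 1.0 or less is non-blocking."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def fabric_latency_share(hops: int, switch_ns: float, device_ns: float) -> float:
    """Fraction of an I/O's latency spent in switches (ignores queuing and cabling)."""
    fabric_ns = hops * switch_ns
    return fabric_ns / (fabric_ns + device_ns)


# Hypothetical leaf layouts, not specific M-Series models
print(oversubscription_ratio(16, 100, 16, 100))  # 16x100GbE down, 16x100GbE up
print(oversubscription_ratio(48, 25, 8, 100))    # 48x25GbE down, 8x100GbE up

# Three hops (leaf-spine-leaf) at 300 ns each vs. a 100 microsecond flash device
print(f"{fabric_latency_share(3, 300, 100_000):.2%}")
```

The first layout is exactly 1:1 (non-blocking), the second is 1.5:1 oversubscribed, and three 300 ns hops add under 1% to a 100 µs flash access.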

M-Series uses an intelligent shared buffer design that allocates buffer space to the ports that need it most. This ensures data I/O is treated fairly across all switch port combinations and packet sizes. Other switch designs typically segregate their buffer space into port groups, which makes them up to 4x more likely to overflow and lose packets during a traffic microburst. Buffer segregation can also lead to unfair performance, where ports rated for the same speed perform very differently under load.
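The sketch below illustrates why a shared buffer tolerates microbursts better than a buffer carved into fixed port-group slices. It compares a static partition with a simplified dynamic-threshold policy (after Choudhury and Hahne), a widely used shared-buffer admission scheme in which a queue may grow only while it stays below alpha times the free buffer. This is not a description of the M-Series buffer algorithm; the buffer, burst and packet sizes are hypothetical, and the burst is assumed to arrive much faster than the port can drain it.

```python
from typing import Callable, Tuple

Admit = Callable[[int, int], bool]


def simulate_microburst(burst_bytes: int, packet_bytes: int, admit: Admit) -> Tuple[int, int]:
    """Feed a burst into one egress queue (no draining) and return (queued, dropped) bytes."""
    queued = dropped = 0
    for _ in range(burst_bytes // packet_bytes):
        if admit(queued, packet_bytes):
            queued += packet_bytes
        else:
            dropped += packet_bytes
    return queued, dropped


def static_partition(total_buffer: int, groups: int) -> Admit:
    """Buffer split evenly into port groups; a hot port can use only its group's slice."""
    limit = total_buffer // groups
    return lambda queued, pkt: queued + pkt <= limit


def dynamic_threshold(total_buffer: int, alpha: float) -> Admit:
    """Shared buffer: a queue may grow while it stays below alpha * (free buffer).
    With a single active queue, total occupancy equals that queue's occupancy."""
    return lambda queued, pkt: queued + pkt <= alpha * (total_buffer - queued)


BUFFER = 8_000_000  # hypothetical 8 MB switch buffer
BURST = 3_000_000   # hypothetical 3 MB incast burst toward one port
PKT = 1_000         # hypothetical packet size in bytes

print("static :", simulate_microburst(BURST, PKT, static_partition(BUFFER, groups=4)))
print("shared :", simulate_microburst(BURST, PKT, dynamic_threshold(BUFFER, alpha=1.0)))
```

With four static groups, the hot port can use only a quarter of the buffer and drops a third of the burst; under the dynamic threshold it borrows idle space, so the same burst is absorbed without loss. The numbers illustrate the mechanism, not the 4x figure.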

The M-Series family has features specifically designed to optimize current and future storage networking. These include support for Data Center Bridging (DCB), including DCBx, Enhanced Transmission Selection (ETS) and Priority Flow Control (PFC). iSCSI traffic can be specifically classified and prioritized using the iSCSI TLV. The switches are designed to integrate with storage and network management tools and can run a container on the switch to provide storage-specific services.
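To make ETS and iSCSI classification more concrete, the sketch below classifies iSCSI sessions by their IANA-registered TCP port (3260) and then models ETS as weighted max-min sharing: each traffic class is guaranteed its configured percentage of the link, and bandwidth a class leaves unused is redistributed to busier classes in proportion to their weights. IEEE 802.1Qaz does not mandate this exact scheduler, so treat it as an approximation; the class names, weights and demands are hypothetical.

```python
from typing import Dict

ISCSI_TCP_PORT = 3260  # IANA-registered iSCSI port


def classify(src_port: int, dst_port: int) -> str:
    """Toy classifier: treat iSCSI sessions as storage, everything else as compute."""
    return "storage" if ISCSI_TCP_PORT in (src_port, dst_port) else "compute"


def ets_allocate(link_gbps: float, weights: Dict[str, float], demand: Dict[str, float]) -> Dict[str, float]:
    """Weighted max-min sharing: each class gets at least its weighted share (if it
    needs it), and bandwidth left unused is redistributed to busy classes by weight."""
    alloc = {c: 0.0 for c in weights}
    remaining = float(link_gbps)
    active = {c for c in weights if demand.get(c, 0) > 0}
    while active and remaining > 1e-9:
        total_weight = sum(weights[c] for c in active)
        share = {c: remaining * weights[c] / total_weight for c in active}
        satisfied = {c for c in active if demand[c] - alloc[c] <= share[c]}
        if not satisfied:
            # Every active class wants more than its share: split what is left by weight.
            for c in active:
                alloc[c] += share[c]
            break
        for c in satisfied:
            remaining -= demand[c] - alloc[c]
            alloc[c] = float(demand[c])
        active -= satisfied
    return alloc


# Hypothetical ETS configuration (percent of link) and offered load (Gb/s) on a 100GbE link
ETS_WEIGHTS = {"storage": 50, "compute": 30, "mgmt": 20}
DEMAND = {"storage": 80, "compute": 20, "mgmt": 5}

print(classify(51_000, 3260))
print(ets_allocate(100, ETS_WEIGHTS, DEMAND))
```

Because compute and management need less than their guarantees, storage borrows the 25 Gb/s they leave idle and receives 75 Gb/s instead of its 50 Gb/s guarantee.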

Storage optimization in the HPE M-Series includes:

- Ability to expand & attach beyond the primary array

- Designed to support HPE 3PAR, Nimble, SimpliVity and MSA storage arrays, as well as HPE storage partners Qumulo, Scality and Cohesity

- DCB, including DCBx, ETS, and PFC

- iSCSI-TLV

- Support for all Ethernet speeds including 1, 10, 25, 40, 50 and 100GbE

- Lowest latency of any mainstream Ethernet switch

- Differentiated storage ports that can be locked down for storage traffic

- The highest levels of predictable performance

- Zero avoidable packet loss regardless of packet size

- Performance fairness across any combination of ports

- Shared smart buffer

- Smart cut-through, allowing rapid packet forwarding even with mixed speeds
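The value of cut-through forwarding shows up in serialization delay: a store-and-forward switch must receive an entire frame before transmitting it, while a cut-through switch can start forwarding once it has read the header. The sketch assumes a 9,000-byte jumbo frame and a forwarding decision after the first 64 bytes; both values are illustrative, and the model does not attempt to represent how M-Series smart cut-through handles mixed speeds.

```python
def serialization_ns(nbytes: int, gbps: float) -> float:
    """Time to clock nbytes onto a link; at 1 Gb/s, one bit takes one nanosecond."""
    return nbytes * 8 / gbps


JUMBO = 9_000  # bytes, approximating a jumbo frame common in storage networks
HEADER = 64    # bytes, assumption: forwarding decision made after ~64 bytes

for gbps in (10, 25, 100):
    sf = serialization_ns(JUMBO, gbps)   # store-and-forward waits for the whole frame
    ct = serialization_ns(HEADER, gbps)  # cut-through starts after the header arrives
    print(f"{gbps:>3} GbE: store-and-forward {sf:,.0f} ns, cut-through {ct:,.1f} ns")
```

At 10GbE, store-and-forward of a jumbo frame alone costs 7.2 µs per hop, far more than the 300 ns port-to-port latency quoted for M-Series. Mixed speeds complicate cut-through: when a frame enters on a slower port than the one it leaves on, the switch could run out of data mid-transmission, which is why many switches fall back to store-and-forward in that case.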

Put your storage on the fast track with HPE StoreFabric M-Series Ethernet switches and an Ethernet Storage Fabric

Storage networks built on M-Series provide an ideal Ethernet Storage Fabric (ESF) optimized to deliver the highest levels of performance, industry-best latency and zero packet loss, plus unique storage-aware features and form factors. With ESF, you can build a converged network that simultaneously handles compute and storage traffic, including iSCSI, and supports file, block and object storage as well as the latest NVMe over Fabrics storage.

Its storage-optimized design, including dynamically shared buffers and low latency, together with predictable performance, makes the M-Series ideal for storage environments.

Future-proof your storage environment today with support for faster speeds and new protocols such as NVMe over Fabrics, plus the ability to add storage services by running them on the switches or integrating them with the switch OS.


Meet Around the Storage Block blogger Faisal Hanif, Product Management, HPE Storage and Big Data. Faisal is part of HPE’s Storage & Big Data business group leading Product Management & Marketing for next generation products and solutions for storage connectivity, network automation & orchestration. Follow Faisal on Twitter @ffhanif

1 Flash as cache was introduced around 2008: for example, the Fusion-io ioDrive was first shown in September 2007, and the NetApp “PAM” flash cache module was launched in June 2008. The first Pure Storage all-flash array was released in August 2011.

2 Sources: Wikibon, and a study cited by Scality.

3 Some public clouds are built on InfiniBand and a few small clouds use Fibre Channel, but none of the hyperscalers or large Web 2.0 vendors use Fibre Channel for hosted data.
