Reclaiming disk space with VMware vSphere and HPE storage

Learn how reclaiming disk space with VMware vSphere UNMAP and HPE 3PAR and Nimble Storage allows you to make the most efficient use of your storage capacity.

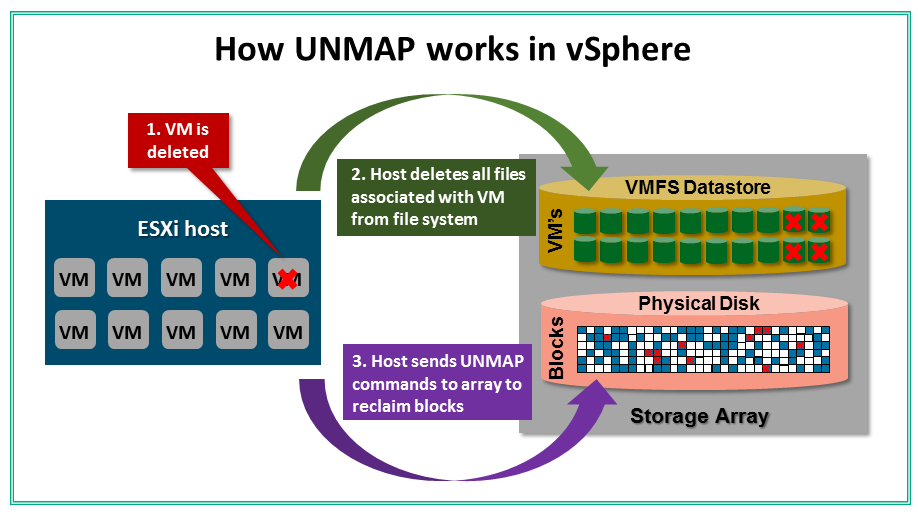

So why can’t a storage array just reclaim space on its own without being told to? The reason is that storage arrays are unaware of what is happening inside a file system, whether it is a Windows file system or a VMFS data store. Essentially, they have no visibility into what is happening within a LUN. As a result, they do not know when files or VMs are deleted or which disk blocks they resided on. Because of this, whatever is managing the file system (for example, Windows or ESXi) has to tell the storage array when deleted data can be reclaimed.
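The visibility gap described above can be illustrated with a toy model. This is only a sketch: the `ThinLun` and `FileSystem` classes are hypothetical names invented for illustration, not anything from vSphere or an HPE array, and real arrays track allocation at a much coarser and more complex granularity.

```python
class ThinLun:
    """Toy model of a thin-provisioned LUN as the array sees it (hypothetical)."""

    def __init__(self):
        # Block numbers the array has backed with real capacity.
        self.allocated = set()

    def write(self, block):
        # Any write to a block makes the array allocate capacity for it.
        self.allocated.add(block)

    def unmap(self, blocks):
        # Only an explicit UNMAP tells the array that blocks are free again.
        self.allocated.difference_update(blocks)


class FileSystem:
    """Toy file system on top of the LUN (stand-in for VMFS or a guest file system)."""

    def __init__(self, lun):
        self.lun = lun
        self.files = {}      # file name -> list of block numbers
        self.next_block = 0
        self.freed = set()   # blocks freed by deletes, not yet reported to the array

    def create(self, name, n_blocks):
        blocks = list(range(self.next_block, self.next_block + n_blocks))
        self.next_block += n_blocks
        for block in blocks:
            self.lun.write(block)
        self.files[name] = blocks

    def delete(self, name):
        # A delete is a metadata update inside the file system; the array sees nothing.
        self.freed.update(self.files.pop(name))

    def reclaim(self):
        # Tell the array which blocks are free and return how many were reported.
        count = len(self.freed)
        self.lun.unmap(self.freed)
        self.freed.clear()
        return count


lun = ThinLun()
fs = FileSystem(lun)
fs.create("vm1.vmdk", 100)
fs.create("vm2.vmdk", 50)
fs.delete("vm1.vmdk")
print(len(lun.allocated))  # 150: the array still holds the deleted VM's blocks
fs.reclaim()
print(len(lun.allocated))  # 50: the space comes back only after the UNMAP
```

In this model the array's allocated count does not change on `delete`; it drops only when the file system layer calls `reclaim`, which is the role UNMAP plays between ESXi (or a guest OS) and the array.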

How vSphere reclaims space on a storage array

Reclaiming space is important. vSphere offers several storage operations that result in data being deleted on a storage array. These include Storage vMotion, where a VM is moved from one data store to another, deleting VM snapshots and deleting VMs. Of these operations, Storage vMotion has the biggest impact on thin provisioning because whole virtual disks move between data stores, which leaves a lot of wasted space on the source data store that the array cannot reclaim on its own. In addition, when data is deleted within a thin-provisioned VM, that space can be reclaimed because the guest OS can send UNMAP commands that are passed on to the storage array.

Performing an UNMAP of disk blocks can be a resource-intensive operation on the storage array, and because of this the behavior of the UNMAP function has changed across vSphere releases since its introduction in vSphere 5.0. UNMAP started off as a synchronous operation, meaning disk blocks were reclaimed in real time as soon as data was deleted in vSphere. Due to some issues that were uncovered pretty quickly, UNMAP became a manual process that had to be initiated via CLI commands from vSphere 5.0 U1 through vSphere 6.0. The manual process worked, but it was resource intensive, time consuming and inefficient: instead of being aware of what space needed to be reclaimed, it created a large balloon file and tried to reclaim everything it could.

Finally, in vSphere 6.5, the UNMAP process became automatic again, although this time as an asynchronous operation, meaning disk blocks are not reclaimed in real time but by a background process at a fixed low rate (25 MBps). This was a welcome and long-awaited change, as vSphere was finally telling the storage array exactly which disk blocks to reclaim, just at a slow pace. vSphere 6.7 made this even better by adding a user-configurable reclamation rate that controls how fast vSphere tells the storage array which disk blocks to reclaim. The reclamation rate can be set in the vSphere client from 100 MBps to 2000 MBps. However, unless you are in a real hurry to get storage capacity back, it’s recommended to keep the rate on the low side to lessen the impact of the UNMAP operation on your VM workloads. You can also disable space reclamation completely if you have plenty of disk space and don’t want any resource impact from running UNMAP.
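To get a feel for what these rates mean in practice, here is a rough back-of-the-envelope sketch. It assumes the configured rate is sustained for the whole run, that MBps means megabytes per second, and that 1 GB is 1024 MB; the function and constant names are made up for illustration and are not part of any vSphere tool. Actual reclamation time depends on the array and the workload.

```python
VSPHERE_65_RATE_MBPS = 25   # fixed low rate mentioned above for vSphere 6.5
MIN_FIXED_RATE_MBPS = 100   # lower bound of the configurable range in vSphere 6.7
MAX_FIXED_RATE_MBPS = 2000  # upper bound of the configurable range in vSphere 6.7


def check_fixed_rate(rate_mbps):
    """Return rate_mbps if it falls in the 100-2000 MBps range, else raise."""
    if not MIN_FIXED_RATE_MBPS <= rate_mbps <= MAX_FIXED_RATE_MBPS:
        raise ValueError(
            f"rate must be between {MIN_FIXED_RATE_MBPS} and "
            f"{MAX_FIXED_RATE_MBPS} MBps, got {rate_mbps}"
        )
    return rate_mbps


def reclaim_hours(space_gb, rate_mbps):
    """Idealized hours needed to reclaim space_gb at a sustained rate_mbps."""
    if rate_mbps <= 0:
        raise ValueError("rate must be positive")
    seconds = space_gb * 1024 / rate_mbps
    return seconds / 3600


# Reclaiming 1 TB (1024 GB) of deleted data at different rates.
print(round(reclaim_hours(1024, VSPHERE_65_RATE_MBPS), 2))    # 11.65
print(round(reclaim_hours(1024, check_fixed_rate(100)), 2))   # 2.91
print(round(reclaim_hours(1024, check_fixed_rate(2000)), 2))  # 0.15
```

Under these assumptions, even the lowest configurable rate returns a terabyte in about three hours, which is one way to see why a low setting is usually enough.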

One key thing to note is that doing an UNMAP at the VM level (not the guest OS level) is only applicable when using VMFS data stores. With VMware’s new Virtual Volumes (VVols) storage architecture, doing an UNMAP at the VM level is not needed, as the storage array is fully aware of which disk blocks a VM resides on because VMs are written natively to the storage array without a file system. This is one big advantage of VVols: vSphere no longer has to tell the array which disk blocks to UNMAP, as the array already has full awareness and can reclaim space at whatever rate it wants to. With VVols a storage array becomes much more efficient, as all provisioning and reclamation operations are performed dynamically.

Whether you are using VMFS or VVols, in-guest space reclamation can still be done for guest OSs that support it, allowing more granular space reclamation. This is necessary because vSphere has no visibility into a guest OS file system and cannot know which files have been deleted. If the guest OS sends UNMAP commands, vSphere just passes them on to the storage array, which processes them and reclaims the space.

Where HPE storage fits into this reclamation picture

Being able to reclaim disk space allows you to make the most efficient use of your storage capacity and helps lessen the need to add more. We fully support space reclamation on both HPE 3PAR StoreServ and HPE Nimble Storage arrays. In fact, with 3PAR we were one of the very first partners to support UNMAP on day one of its initial release as part of vSphere 5.0 more than seven years ago.

At HPE, we have had a long tradition of supporting all the VMware integration areas right away. That continues to this day with our industry-leading support for VVols as a VMware design partner. Whether it’s UNMAP, VVols or plug-ins for VMware, HPE 3PAR and Nimble are ideal storage platforms that feature modern architectures that boost VMware ROI by enabling you to optimize your virtual infrastructure, simplify storage administration and maximize virtualization savings.

More great VMware-related content

Blogs: Check out our other posts here on Around the Storage Block about VMware topics, including VVols and vSphere.

Webinar: Want storage for VMware to be easier? Register for A Farewell to LUNs—Discover how VVols forever changes storage in vSphere to learn how. The webinar takes place live on October 23, or you can catch the on-demand replay anytime.

Looking ahead to VMworld Barcelona: Get the scoop on what HPE’s got planned, where the only tame thing will be the IT Monster!

Around the Storage Block blogger Eric Siebert, Solutions Manager, HPE. On Twitter: @ericsiebert
