HPE Nimble Storage Linux Toolkit 2.3: The Container Storage Platform

It’s been a hectic few months watching a myriad of activities finally come together in a powerhouse of a release. The HPE Nimble Storage Linux Toolkit (NLT) 2.3 is packed to the brim with features and capabilities that enable and sharpen very specific container-centric use cases and broaden platform support.

I spent the entirety of 2017 soliciting use cases, giving demos and presentations, and showing early engineering prototypes to prospects, customers and partners. I, for one, couldn’t be more thrilled to see everything come together in this release and to see the hard work everyone has put in pay off. This release includes most of the capabilities we’ve talked about over the last year in one form or another. Let’s pop the hood and explore how HPE Storage can help make your next container project a glowing success!

Container orchestration

It’s no news to anyone that Kubernetes won the subtle (and not so subtle) orchestration wars that have been brewing over the last few years. I must admit that I strongly believed Docker Swarm and its ease of use would drive adoption much further than it did. Nevertheless, I’m thrilled to see Docker adopting Kubernetes. I’m part of the Docker UCP beta program and I must say that deploying multi-master, production-grade Kubernetes has never been simpler, period! Once Docker UCP 3.0 becomes GA you can expect to hear from us, as we’ve already pre-validated our container storage platform for Kubernetes on Docker.

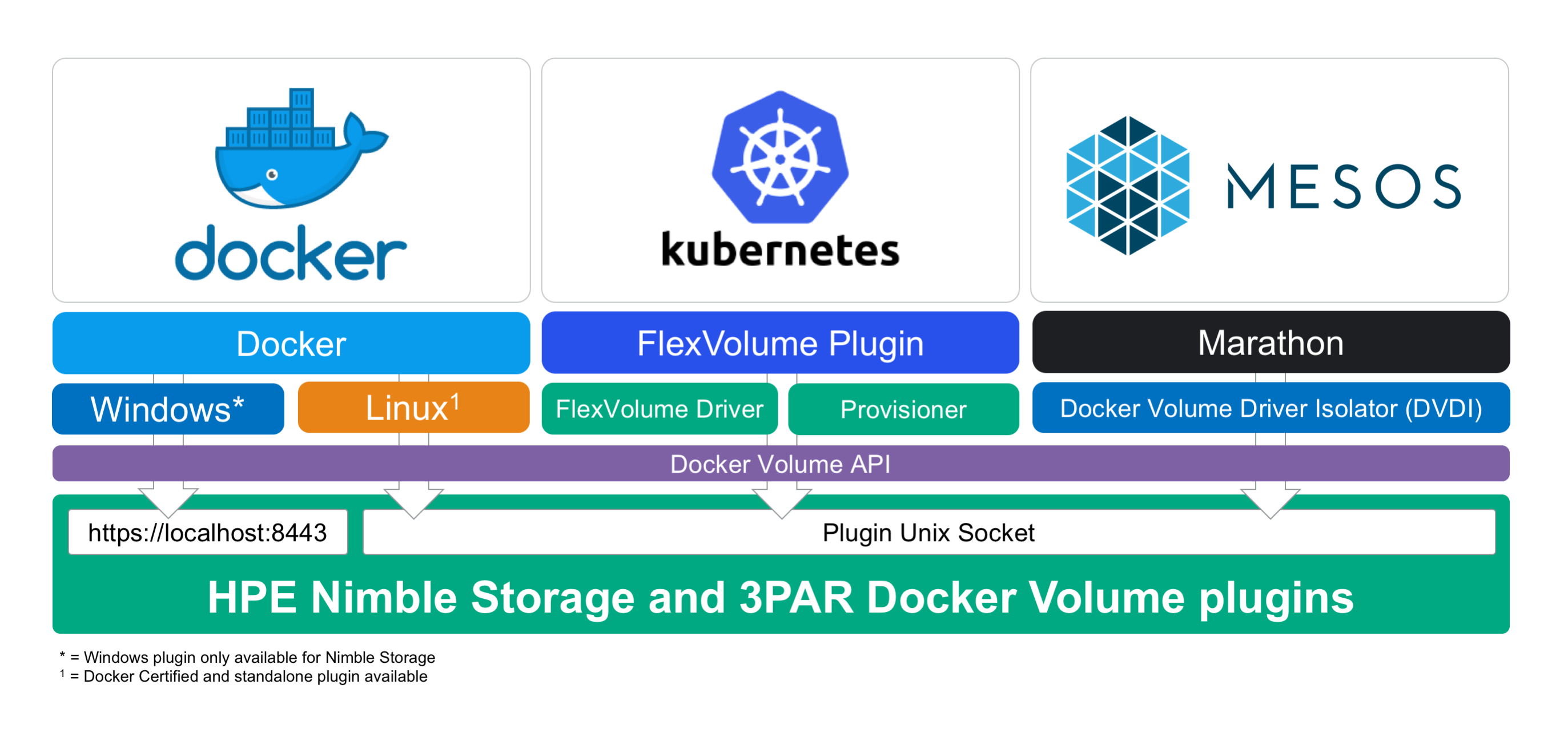

HPE Storage has invested substantially in the Docker Volume API. Our plugin has enough primitives to drive very advanced storage workflows. We’ve decoupled our Docker Volume plugin from the container runtime, which allows us to stay agnostic with regard to the big three container orchestrators: Docker Swarm, Kubernetes and Mesos Marathon.

Our Kubernetes integration is based on Dory, an open source project initiated by HPE Storage that allows any third-party Docker Volume plugin to be used through the FlexVolume plugin interface provided by Kubernetes. Alongside the FlexVolume driver we also have an out-of-tree Dynamic Provisioner that implements the Kubernetes StorageClass resource, which in turn enables policy-based storage provisioning with groundbreaking ease of use.

    $ cat | kubectl create -f-
    ---
    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: prodmariadb
    provisioner: hpe.com/nimble
    parameters:
      description: "Prod StorageClass for Databases"
      perfPolicy: MariaDB
      protectionTemplate: Local48Hourly-Cloud64Daily
      limitIOPS: "15000"
      encryption: "True"
    storageclass "prodmariadb" created

This creates a StorageClass resource that Persistent Volume Claims can be made against. Our provisioner is set up to listen on the hpe.com prefix and provision the Persistent Volume using the FlexVolume driver with the same name. Technically, the provisioner calls the Docker Volume API directly, but it uses the FlexVolume driver configuration to determine which socket file to use. Also, the parameters stanza is Docker Volume plugin specific, and in this case we're using the HPE Nimble Storage Docker Volume plugin.

Users can now create Persistent Volume Claims against the StorageClass:

    $ cat | kubectl create -f-
    ---
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: mariadb-pvc
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 256Gi
      storageClassName: prodmariadb
    persistentvolumeclaim "mariadb-pvc" created

We should now be able to inspect the Persistent Volume Claim and see if our request has been fulfilled:

    $ kubectl get pvc/mariadb-pvc
    NAME          STATUS   VOLUME                CAPACITY   ACCESS   STORAGECLASS   AGE
    mariadb-pvc   Bound    prodmariadb-a8e3...   256Gi      RWO      prodmariadb    5s

With these simple steps we now have a 256GiB volume limited to 15K IOPS, optimized with our MariaDB Performance Policy, with automatic snapshots taken every hour and retained for two days, and with daily replication to HPE Cloud Volumes that retains roughly two months of backups. In a nutshell, all the end user needs to care about is the volume size; everything else is taken care of automatically by the backing HPE Nimble Storage array. This is the pinnacle of policy-based storage provisioning for Kubernetes!
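
To put the claim to work, a workload references it by name. The following is a minimal sketch rather than part of the toolkit: the pod name, the `mariadb` image, the mount path and the placeholder root password are all assumptions chosen for illustration. Only the claim name `mariadb-pvc` comes from the example above.

```shell
#!/bin/sh
# Print a Pod manifest that mounts an existing PersistentVolumeClaim.
# Pod name, image, password and mount path are illustrative assumptions.
pod_manifest() {
  claim="$1"   # name of the PersistentVolumeClaim to mount
  cat <<EOF
---
kind: Pod
apiVersion: v1
metadata:
  name: mariadb
spec:
  containers:
    - name: mariadb
      image: mariadb
      env:
        - name: MYSQL_ROOT_PASSWORD
          value: changeme
      volumeMounts:
        - name: data
          mountPath: /var/lib/mysql
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: ${claim}
EOF
}

# Print the manifest for the claim created earlier.
pod_manifest mariadb-pvc
```

Piping the output into `kubectl create -f-` would submit it in the same way as the manifests above. The pod spec never mentions the array, the performance policy or the protection template; those stay behind the claim.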

The provisioner also takes care of fulfilling the reclamation policy; the default is to delete the Persistent Volume when the Persistent Volume Claim has been deleted.
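
For a volume that should outlive its claim, upstream Kubernetes lets you change the reclaim policy on an individual Persistent Volume with `kubectl patch`. The sketch below only builds and prints that command. The helper name `reclaim_patch` and the `<pv-name>` placeholder are illustrative, and how the HPE provisioner treats a volume whose policy was changed this way is not covered here, so treat it as an assumption to verify.

```shell
#!/bin/sh
# Build the JSON patch that sets a PersistentVolume's reclaim policy.
reclaim_patch() {
  policy="$1"   # Retain or Delete
  printf '{"spec":{"persistentVolumeReclaimPolicy":"%s"}}\n' "$policy"
}

# Print the kubectl command instead of running it; <pv-name> is a placeholder
# for the name shown in the VOLUME column of `kubectl get pvc`.
echo "kubectl patch pv <pv-name> -p '$(reclaim_patch Retain)'"
```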

We continue to be as vendor agnostic as possible when it comes to Kubernetes. Red Hat OpenShift, SUSE CAP, Kubernetes for Docker EE and upstream Kubernetes are just a few that we will be able to support moving forward.

Snapshots re-invented for container use cases

Any respectable storage system on the market today can create a point-in-time copy in the form of a snapshot of a volume or filesystem. It’s how those snapshots are being abstracted to applications and users that sets them apart.

We’ve introduced numerous new parameters and overloaded our cloning and importing capabilities in order to give users very fine-grained control of how to present data to the container. Since the Docker Volume API doesn’t yet have the capability to either update or snapshot a volume, we’ve developed creative ways to enable users to leverage snapshots across multiple use cases.

If a volume has been created with a Protection Template, chances are that the template has a set of schedules attached to it and has a few snapshots created by the scheduler in NimbleOS. These snapshots are now enumerated in the “inspect” output:

    # docker volume inspect myvol1 \
      | json 0.Status.Snapshots 0.Status.VolumeCollection
    [
      {
        "Name": "myvol1.kubernetes-Tight-2018-01-31::21:25:00.000",
        "Time": "2018-02-01T05:25:00.000Z"
      },
      {
        "Name": "myvol1.kubernetes-Tight-2018-01-31::21:30:00.000",
        "Time": "2018-02-01T05:30:00.000Z"
      },
      {
        "Name": "myvol1.kubernetes-Tight-2018-01-31::21:40:00.000",
        "Time": "2018-02-01T05:40:00.000Z"
      },
      {
        "Name": "myvol1.kubernetes-Tight-2018-01-31::21:35:00.000",
        "Time": "2018-02-01T05:35:00.000Z"
      },
      {
        "Name": "myvol1.kubernetes-Tight-2018-01-31::20:55:00.000",
        "Time": "2018-02-01T04:55:00.000Z"
      }
    ]
    {
      "Description": "",
      "Name": "myvol1.kubernetes",
      "Schedules": [
        {
          "Days": "all",
          "Repeat Until": "23:59",
          "Snapshot Every": "5 minutes",
          "Snapshots To Retain": 384,
          "Starting At": "00:00",
          "Trigger": "regular"
        }
      ]
    }
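
To get a feel for how much history a schedule like the one above keeps, multiply the number of retained snapshots by the interval. The helper below is a back-of-the-envelope sketch (the function name `retention_hours` is made up for illustration) and assumes the schedule runs around the clock, as the 00:00 to 23:59 window above does.

```shell
#!/bin/sh
# Hours of history covered by a snapshot schedule that runs all day.
# $1 = snapshots to retain, $2 = snapshot interval in minutes
retention_hours() {
  echo $(( $1 * $2 / 60 ))
}

# 384 snapshots at 5-minute intervals: prints 32
retention_hours 384 5
```

In other words, this volume can be rolled back to any 5-minute mark within the last 32 hours. The shell arithmetic is integer-only, so schedules that do not divide evenly into hours are rounded down.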

Another snapshot use case that comes into play for manual testing and prototyping, and which is extremely powerful for developers, is reverting to a known state of a certain dataset. This is not far from the basic idea of a container: you run an image that will be identical for each instantiation. However, carrying application data in containers by the terabyte is not very practical for a lot of reasons. Instead, depending on an external storage system to provide these data services is a much more elegant solution. Let’s see how this works (output filtered for brevity):

    # docker volume create -d nimble \
      -o cloneOf=myvol1 -o createSnapshot -o snapshot=mysnap1 \
      --name myvol1-clone
    myvol1-clone
    # docker volume inspect myvol1 | json 0.Status.Snapshots. \
      | json -a -c "this.Name=='mysnap1'"
    {
      "Name": "mysnap1",
      "Time": "2018-02-01T03:35:50.000Z"
    }
    # docker volume inspect myvol1-clone | json 0.Status.ParentSnapshot
    mysnap1
    # docker volume rm myvol1-clone
    myvol1-clone
    # docker volume inspect myvol1 | json 0.Status.Snapshots. \
      | json -a -c "this.Name=='mysnap1'"
    {
      "Name": "mysnap1",
      "Time": "2018-02-01T03:35:50.000Z"
    }
    #

In this workflow we end up with a named snapshot on the source volume that the clone is derived from. While this is desirable for as long as this particular dataset is relevant to the developer, we also provide a means of cleaning up the snapshot when removing all the clones that depend on it:

    # docker volume create -d nimble \
      -o cloneOf=myvol1 -o createSnapshot -o snapshot=mysnap2 -o destroyOnRm \
      --name myvol2-clone
    myvol2-clone
    # docker volume rm myvol2-clone
    myvol2-clone
    # docker volume inspect myvol1 | json 0.Status.Snapshots. \
      | json -a -c "this.Name=='mysnap2'"
    #

As we can see, the source snapshot was removed upon removal of the clone. The destroyOnRm parameter can also be used when importing volumes as clones and for standard volumes. Using it on a standard volume results in the volume being destroyed on the array instead of being offlined, which is the default. This default protects your data and any snapshots on the volume.

To expand on this concept even further, we’ve coined the term “Ephemeral Clones”, which ties into the concept of CI/CD pipelines. Each build job gets its own volume, and when the container terminates, the clone automatically cleans up after itself, including the snapshot if one was created.

    # docker volume create -d nimble \
      -o cloneOf=myvol1 -o destroyOnDetach \
      --name myvol3-clone
    myvol3-clone
    # docker run --rm -v myvol3-clone:/data alpine df -h /data
    Filesystem                Size      Used Available Use% Mounted on
    /dev/mapper/mpathcf      10.0G     32.2M     10.0G   0% /data
    # docker volume inspect myvol3-clone
    []
    Error response from daemon: get myvol3-clone: Unable to find Docker volume using {"Name":"myvol3-clone"}.
    #

In addition to developers, storage admins and IT operations will appreciate this feature, as we’ve all seen how problematic the clutter of snapshots and clones becomes at scale. Tidying orphaned resources manually is very tedious and error-prone. Being explicit about the purpose and life-cycle of a volume clone at inception is a much cleaner approach than performing post-mortems.
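
As a rough illustration of how an ephemeral clone could slot into a build job, the sketch below wraps the two commands from the example above in a helper. The function names, the `DRY_RUN` switch and the `<source>-build-<id>` naming scheme are assumptions made for this sketch; only the `nimble` driver name and the `cloneOf` and `destroyOnDetach` options come from the examples in this post.

```shell
#!/bin/sh
# Sketch of a CI step that gives each build its own ephemeral clone.
# With DRY_RUN=1 the docker commands are printed instead of executed.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# $1 = source volume, $2 = build id, remaining args = command run in the container
build_with_clone() {
  src="$1"
  build_id="$2"
  shift 2
  clone="${src}-build-${build_id}"   # hypothetical naming scheme
  # Clone the source volume; destroyOnDetach removes the clone on unmount.
  run docker volume create -d nimble \
    -o "cloneOf=${src}" -o destroyOnDetach \
    --name "${clone}" || return 1
  # Run the job with the clone mounted at /data; alpine is a stand-in image.
  run docker run --rm -v "${clone}:/data" alpine "$@"
}

# Show what build 42 would execute without touching Docker.
DRY_RUN=1
build_with_clone myvol1 42 ls /data
```

Because the clone's life-cycle is declared when it is created, the job needs no cleanup step, which is exactly the point of being explicit at inception.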

These examples use the intuitive Docker CLI to demonstrate our container platform capabilities - all the options are available to any orchestrator and container runtime!

Seamless data migration from VMware Virtual Disks

VMware vSphere is by far the most popular hypervisor in the Enterprise and well represented in our customer base. Running container infrastructure on top of vSphere makes a ton of sense as the compute instances are trivial to deploy and manage. Incorporating virtual machines adds a layer of security and isolation that our most commonly deployed container runtimes don’t provide today.

A popular use case is to modernize traditional applications running in full virtual machines by using containers. The challenge is that most traditional applications require stateful storage which typically brings a lot of uncertainty into the mix when the modernization process also includes data migration. Fear not, we have you covered!

A little bit of background before we dive in: VMware vSphere has a very powerful data migration tool built in, VMware Storage vMotion. It allows administrators to move Virtual Disks (VMDK files) between storage backends non-disruptively, whether to re-balance storage resources or to do a simple storage system refresh with zero downtime. What is neat about this technology is that it works at Virtual Disk granularity, which allows an application's data disks to be migrated to a new storage backend.

Another great innovation from VMware is the concept of Virtual Volumes, more commonly known as VVols. VVols abstract a protocol endpoint from an external storage system and plumb the device directly into the virtual machine. Without going into too much detail, this means that data is sent verbatim from the guest operating system to the backend storage system without any offsets or translations, so the block device itself can be presented elsewhere with all the data intact. VVols are a fully supported backend for VMware Storage vMotion, and once a Virtual Disk has been migrated to an HPE Nimble Storage VVol (from any foreign storage system or storage protocol), your container platform can start interacting with the volume.

In this NLT release we’ve taken measures to ensure that a VMware VVol will both cut over and clone cleanly into a Docker Volume. I recorded a video of an example database migration when we first talked about this feature.

The primary purpose of this integration is to relinquish control of the data that is captive in the virtual machine and access it through the Docker Volume API. It gives native access to the filesystem from the container and it provides the required mobility to integrate with a container orchestrator.

On the horizon

As we continue to work closely with our customers and partners, we’ll keep honing our capabilities to simplify data management for containers. I can’t wait for the feature sets we’ll talk about in the coming year! One thing that is on everyone’s mind at the moment is the Container Storage Interface (CSI) and how it will impact implementation decisions. We can already see very early adoption in both DC/OS and Kubernetes (as an alpha feature). Our engineering team is part of the SIGs (Special Interest Groups) for CSI, and the transition will be seamless for HPE Nimble Storage customers once they are ready to pull the trigger. We already have all the primitives locked and loaded. End users such as application owners won't notice the impact, as the point of abstraction is still the Persistent Volume Claim provisioned from a StorageClass resource.

Besides broader platform support and incremental feature updates, we’ll soon start expanding our container story with HPE Cloud Volumes. This is something that I’m really excited about and I hope to bring you a Tech Preview of what’s currently cooking in engineering sooner rather than later. As a backdrop, I blogged over at the HPE DEV community on how to get started with Docker Swarm on HPE Cloud Volumes and how to serve /var/lib/docker from an HPE Cloud Volume. Also, what might not be completely obvious is that we have the capability today to replicate your on-premises HPE Nimble Storage Docker Volumes to HPE Cloud Volumes and once the data is there… stay tuned!

Don’t hesitate to reach out to HPE on how to take advantage of the HPE Nimble Storage Linux Toolkit in your container project. We have the most comprehensive infrastructure portfolio on the market and we’re here to make you successful! HPE Nimble Storage customers can take advantage of the HPE Nimble Storage Linux Toolkit today by downloading it from HPE InfoSight!
