HPE CSI Driver for Kubernetes v1.4.0 with expanded ecosystem and capabilities now available!

Hewlett Packard Enterprise announces the immediate availability of HPE CSI Driver for Kubernetes v1.4.0. In this release HPE introduces two new Kubernetes CSI sidecar containers, expands ecosystem support, and further refines the CSI driver to take advantage of state-of-the-art features of the HPE primary storage portfolio. The highly popular multi-writer functionality has now been marked ready for much-anticipated production use.

In this blog post we'll take a deep dive into the new capabilities, and elaborate on how to take advantage of HPE Nimble Storage and HPE Primera with Kubernetes.

Introducing volume and snapshot groups

For highly distributed workloads in microservice architectures, it's challenging to ensure data is protected without skew. Maintaining referential integrity between applications becomes even more important when breaking down monoliths that traditionally ran as a single unit.

What is often referred to as "consistency groups" in other storage infrastructure management systems is available for Kubernetes using the HPE CSI Driver. With the introduction of two new Kubernetes CSI sidecar containers, administrators may create native Kubernetes API objects through the use of Custom Resource Definitions (CRDs). This enables users to group Persistent Volume Claims (PVCs) together and perform snapshots across the Volume Group using the standard Kubernetes API.

Essential for this capability is the previously introduced feature "CSI snapshots" in CSI driver v1.1.0. CSI Snapshots are explained in more detail in a previous Around the Storage Block blog post.
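As a rough illustration of how these objects fit together, the following Python sketch builds a Volume Group, two member PVCs, and a Snapshot Group as plain dictionaries and prints them as a JSON list that `kubectl apply -f -` could consume. The API version `storage.hpe.com/v1`, the `csi.hpe.com/volume-group` annotation, the spec field names, and every object and class name are assumptions made for illustration and are not taken from this post. Check the SCOD documentation for the authoritative schema.

```python
import json

# Assumed API version and annotation key; verify both against SCOD.
HPE_API_VERSION = "storage.hpe.com/v1"
GROUP_ANNOTATION = "csi.hpe.com/volume-group"


def volume_group(name, group_class):
    """Build a VolumeGroup object that PVCs can later join."""
    return {
        "apiVersion": HPE_API_VERSION,
        "kind": "VolumeGroup",
        "metadata": {"name": name},
        "spec": {"volumeGroupClassName": group_class},  # assumed field name
    }


def pvc_in_group(name, size, storage_class, group):
    """Build a standard PVC annotated as a member of a VolumeGroup."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "annotations": {GROUP_ANNOTATION: group}},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": size}},
            "storageClassName": storage_class,
        },
    }


def snapshot_group(name, group, group_snapshot_class, volume_snapshot_class):
    """Build a SnapshotGroup that snapshots every PVC in the VolumeGroup."""
    return {
        "apiVersion": HPE_API_VERSION,
        "kind": "SnapshotGroup",
        "metadata": {"name": name},
        "spec": {  # assumed field names
            "source": {
                "kind": "VolumeGroup",
                "apiGroup": "storage.hpe.com",
                "name": group,
            },
            "snapshotClassName": group_snapshot_class,
            "volumeSnapshotClassName": volume_snapshot_class,
        },
    }


# Hypothetical names for a two-tier application.
items = [
    volume_group("my-volume-group", "my-volume-group-class"),
    pvc_in_group("db-data", "32Gi", "hpe-standard", "my-volume-group"),
    pvc_in_group("app-data", "16Gi", "hpe-standard", "my-volume-group"),
    snapshot_group("my-snapshot-group-1", "my-volume-group",
                   "my-snapshot-group-class", "hpe-snapshot"),
]
manifest = {"apiVersion": "v1", "kind": "List", "items": items}
print(json.dumps(manifest, indent=2))
```

Saving the output to a file and reviewing it before applying is a sensible first step, since the class objects referenced here (the Volume Group class, Snapshot Group class, StorageClass, and VolumeSnapshotClass) would need to exist in the cluster beforehand.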

In the following screencast we'll discuss the various newly introduced API objects, and perform volume snapshots on a distributed application.

Volume Groups and Snapshot Groups are fundamental to the HPE strategy to help customers protect stateful workloads, running on Kubernetes, regardless of complexity. Stay tuned for a future Around the Storage Block blog on how to truly take advantage of these constructs.

Ecosystem expansion

Kubernetes is a rapidly growing ecosystem where many different distributions and support models exist. The HPE CSI Driver yet again expands its footprint by formally supporting the SUSE CaaS Platform. HPE and SUSE have a long-standing innovation partnership, and we're very excited to bring the entire HPE primary storage portfolio to the SUSE CaaS Platform.

Other notable updates include HPE Ezmeral Container Platform 5.2, Rancher 2.5, Red Hat OpenShift 4.6, and upstream Kubernetes 1.20.

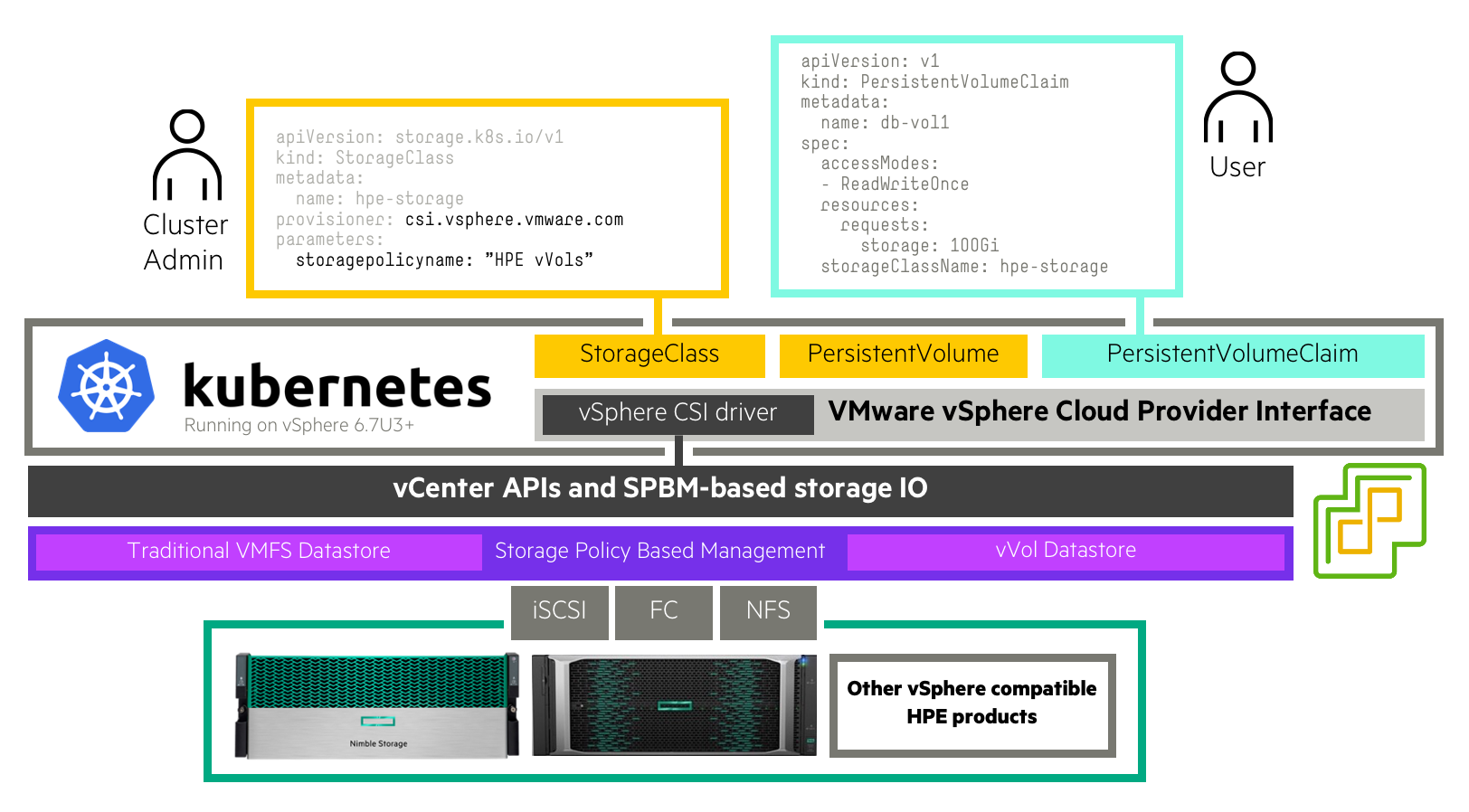

HPE regularly receives questions about VMware Tanzu and running Kubernetes on vSphere, and about how the HPE primary storage portfolio maps to Tanzu. VMware vSphere has its own CSI driver, often referred to as vSphere CNS (Cloud Native Storage). It may use all popular storage abstractions in vSphere, such as vVols, VMFS and vSAN, to provide persistent storage to Kubernetes, regardless of whether it's Tanzu or any other Kubernetes distribution running on vSphere.

Since HPE is a significant technology partner with VMware, we've spent some time documenting how you would install and use vSphere CNS from scratch with HPE primary storage. The process involves using VMware vVols with either HPE Primera, HPE Nimble Storage, or HPE Nimble Storage dHCI.

At KubeCon late last year, HPE joined forces with Kasten by Veeam to provide unmatched data management capabilities using the HPE CSI Driver for Kubernetes and the Kasten K10 platform. As data management is an important area for production workloads deployed on Kubernetes, it's also important to offer choice – and HPE wants to ensure its entire partner ecosystem is represented. The HPE CSI Driver for Kubernetes is now supported by the Commvault intelligent data management platform for Kubernetes-native protection. HPE recently published "Data Protection for Kubernetes using Commvault". Since that initial publication, the certification matrix has been updated to include the HPE CSI Driver for Kubernetes.

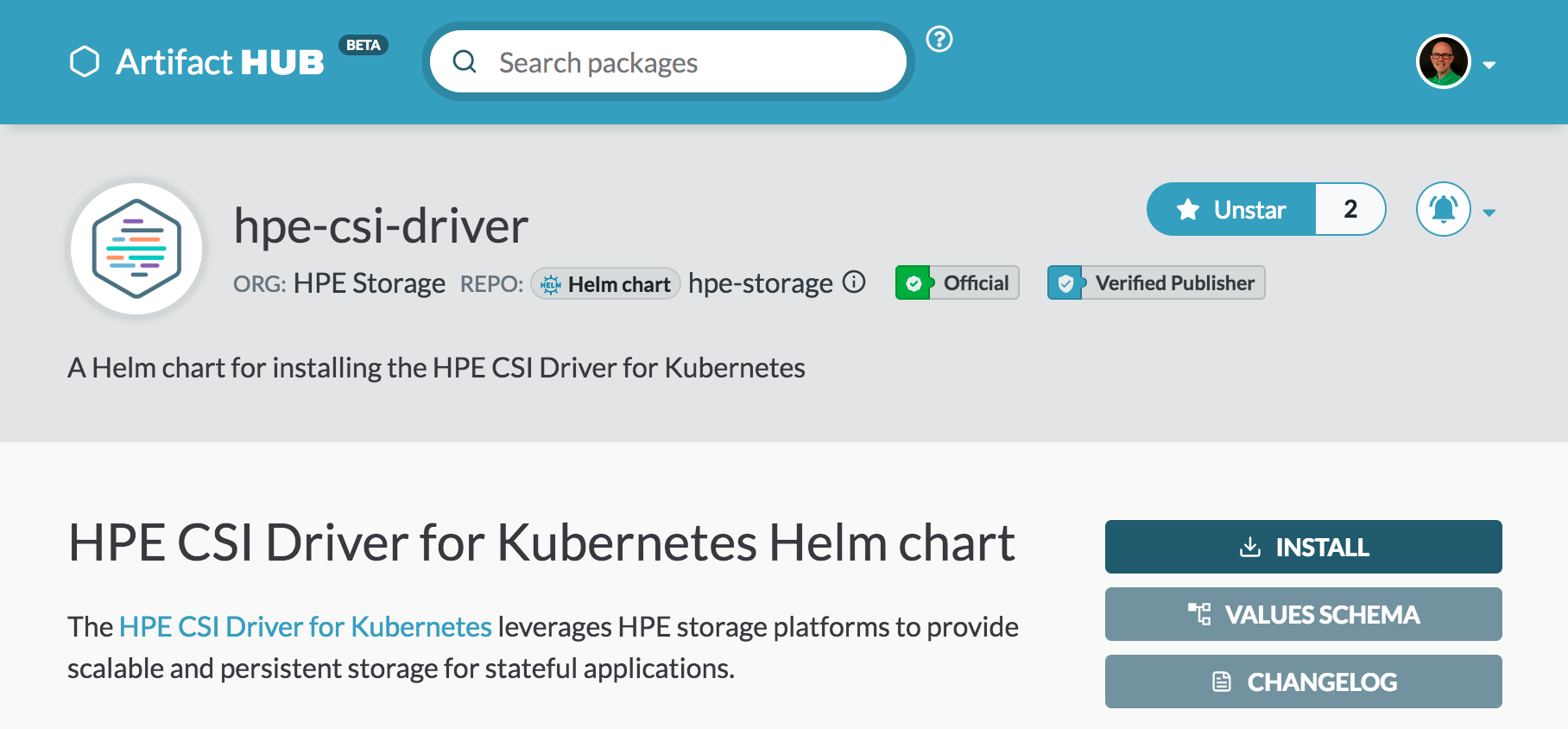

Helm chart and operator improvements

The HPE CSI Driver for Kubernetes Helm Chart is now represented on ArtifactHub.io. This new ecosystem marketplace gives users more confidence in the software they're installing on their Kubernetes clusters, as developers (such as HPE) may apply for additional decorations for their charts, such as being an official and verified publisher. Users may also sign up for notifications when their favorite charts receive an update. HPE's most diligent users were notified that v1.4.0 had been released weeks before this blog post was published.

All container images needed to deploy the CSI driver are now pulled from Quay. With this change, a new Helm chart and operator parameter named "registry" was introduced. The registry parameter allows deployment of the CSI driver from a private registry. If the Kubernetes cluster is located in an air-gapped environment without connectivity to the outside world, the Helm chart may now be deployed completely offline. An example procedure has been documented on the HPE Storage Container Orchestrator Documentation (SCOD) portal.
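To make the "registry" parameter concrete, here is a minimal Python sketch that assembles a `helm install` command line pointing the chart at a private registry. Only the parameter name `registry` comes from this post. The release name, chart reference `hpe-storage/hpe-csi-driver`, namespace, and registry host are hypothetical placeholders, and the sketch only prints the command rather than running it.

```python
import shlex


def helm_install_command(release, chart, namespace, registry):
    """Return the argv list for installing a chart from a private registry."""
    return [
        "helm", "install", release, chart,
        "--namespace", namespace,
        "--create-namespace",
        # "registry" is the chart parameter named in the text above.
        "--set", f"registry={registry}",
    ]


# All values below are placeholders, not taken from the original post.
command = helm_install_command(
    release="my-hpe-csi-driver",
    chart="hpe-storage/hpe-csi-driver",
    namespace="hpe-storage",
    registry="registry.example.com:5000",
)

# Print a copy-and-paste friendly command line for review.
print(shlex.join(command))
```

In a fully air-gapped cluster the container images would first have to be mirrored into the private registry, and the chart itself fetched ahead of time. The procedure on SCOD is the place to confirm the exact steps.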

Multi-writer now production ready

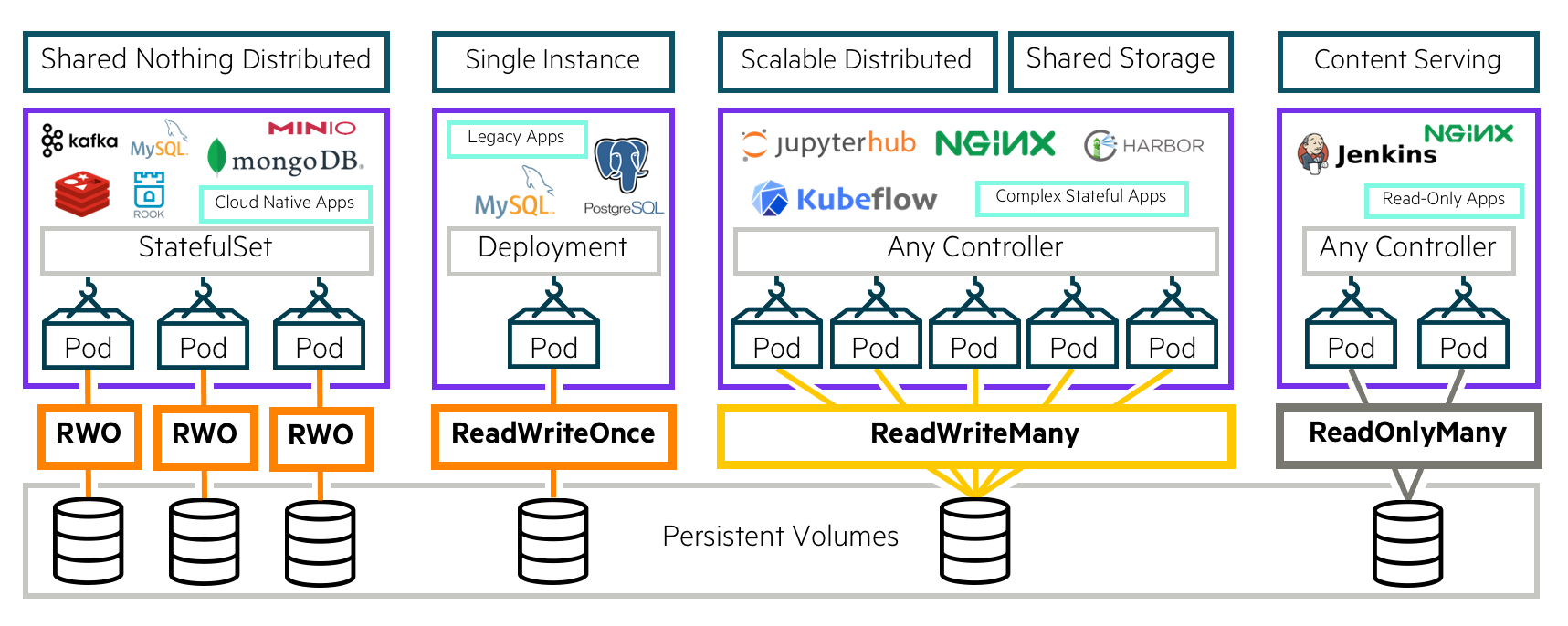

Since HPE CSI Driver for Kubernetes v1.2.0, multi-writer functionality has been available as a technology preview. This capability is now available for production workloads requiring "ReadWriteMany" persistent volume claims. Complete coverage of this feature is available in a previous blog post on Around the Storage Block.

A screencast demo has been created to demonstrate how Kubernetes administrators may configure Storage Classes to provision NFS resources for persistent volume claims.

Learn more about the NFS Server Provisioner in the documentation.

Many questions and inquiries have come up during the technology preview period. Let’s spend some time understanding what the NFS Server Provisioner is – and what it isn't.

The HPE CSI Driver is a "ReadWriteOnce" block storage CSI driver. To deliver a comprehensive user experience, a StorageClass may be configured to accept "ReadWriteMany" and "ReadOnlyMany" persistent volume claims for Kubernetes workloads.

NFSv4 is the implementation used to provide this functionality. While we have customers exposing the provisioned NFS exports outside the Kubernetes cluster, it was not designed to provide a generic NFS solution for workloads outside of Kubernetes, and we do not provide any formal guidance on how to configure it.
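The sketch below shows, in Python, what such a StorageClass and a matching "ReadWriteMany" claim might look like when expressed as Kubernetes objects. The provisioner name `csi.hpe.com` and the `nfsResources` parameter are assumptions not stated in this post, the object names are hypothetical, and backend secret parameters a real StorageClass would need are omitted. Treat it as an outline and consult the NFS Server Provisioner documentation for the actual parameters.

```python
import json


def nfs_storage_class(name):
    """Build a StorageClass intended to accept multi-writer claims."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "csi.hpe.com",            # assumed provisioner name
        "parameters": {"nfsResources": "true"},  # assumed parameter name
    }


def shared_claim(name, size, storage_class):
    """Build a PVC requesting multi-node read/write access."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "resources": {"requests": {"storage": size}},
            "storageClassName": storage_class,
        },
    }


# Hypothetical names and size.
storage_class = nfs_storage_class("hpe-nfs")
claim = shared_claim("shared-content", "32Gi", "hpe-nfs")

rwx_manifest = {"apiVersion": "v1", "kind": "List",
                "items": [storage_class, claim]}
print(json.dumps(rwx_manifest, indent=2))
```

From the workload's point of view nothing else changes: several Pods on different nodes reference the same claim, and the access mode on the PVC is what signals the multi-writer requirement.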

It's also important to stress the use cases HPE is trying to cover with the multi-writer implementation. If the workload you're trying to satisfy is capable of being served from a "ReadWriteOnce" persistent volume claim, with all the performance and capacity constraints that come with it, the workload is a candidate to run with "ReadWriteMany" persistent volume claims with multi-node access. That said, petabyte-scale workloads with throughput requirements in the hundreds of gigabytes should consider HPE Ezmeral Data Fabric (formerly MapR), or work with an HPE partner to find a more suitable solution.

Peer persistence enhancements

Customers running mission-critical workloads on Kubernetes requiring site failover for disaster recovery (DR) may now utilize HPE Primera Peer Persistence with both FC and iSCSI. A comprehensive deep-dive on how to get started with Remote Copy Peer Persistence is available on the HPE developer community portal.

A screencast was recently published on how to perform the required configuration with a brief demonstration of a simulated site failure while monitoring a Kubernetes workload. You can view it below.

For the latest details on supported configurations for Remote Copy Peer Persistence, refer to the HPE Primera Container Storage Provider documentation on SCOD.

Next steps

The HPE CSI Driver for Kubernetes v1.4.0 is available for production deployment immediately. Resources to get started or learn more are available below.

- Compatibility and Support on SCOD

- HPE CSI Driver for Kubernetes Helm Chart on ArtifactHub

- HPE CSI Operator for Kubernetes on Red Hat Ecosystem Catalog

- HPE CSI Driver source code is available on GitHub

- Explore how to use the Volume Groups and Snapshot Groups on hpedev.io or watch the screencast on YouTube

- Learn how to use the NFS Server Provisioner in the screencast or visit hpedev.io for a tutorial

- Configure Remote Copy Peer Persistence by either watching the screencast on YouTube or reading the blog post on hpedev.io

New to Kubernetes and CSI? Head over to the HPE DEV Hack Shack and sign up for the interactive CSI workshop to learn all about the CSI primitives available in the HPE CSI Driver for Kubernetes. Also, HPE conducted a CSI tutorial at KubeCon last year which serves as a comprehensive introduction. It's available on YouTube.

Got questions? Sign up for the HPE DEV Slack community and fire away. We hang out in #kubernetes, #nimblestorage and #3par-primera.

And make sure to stay tuned to Around the Storage Block for future updates to the container integrations for HPE primary storage!
