The fastest ever all-in-one backup solution from HPE Storage and Veeam

HPE lab tests prove it: Veeam v11 is fast. Very fast. The latest Veeam Backup and Replication software release more than doubles the performance of the HPE Apollo and Veeam all-in-one data protection solution.

End-to-end backup speed is a key performance indicator that is often kept vague, but every user needs to know this metric to help them design and deploy successful data protection solutions. For that reason, my team ran a series of lab tests on the backup speed of the new solution, and I share the details here.

Architectural approaches to HPE and Veeam backup

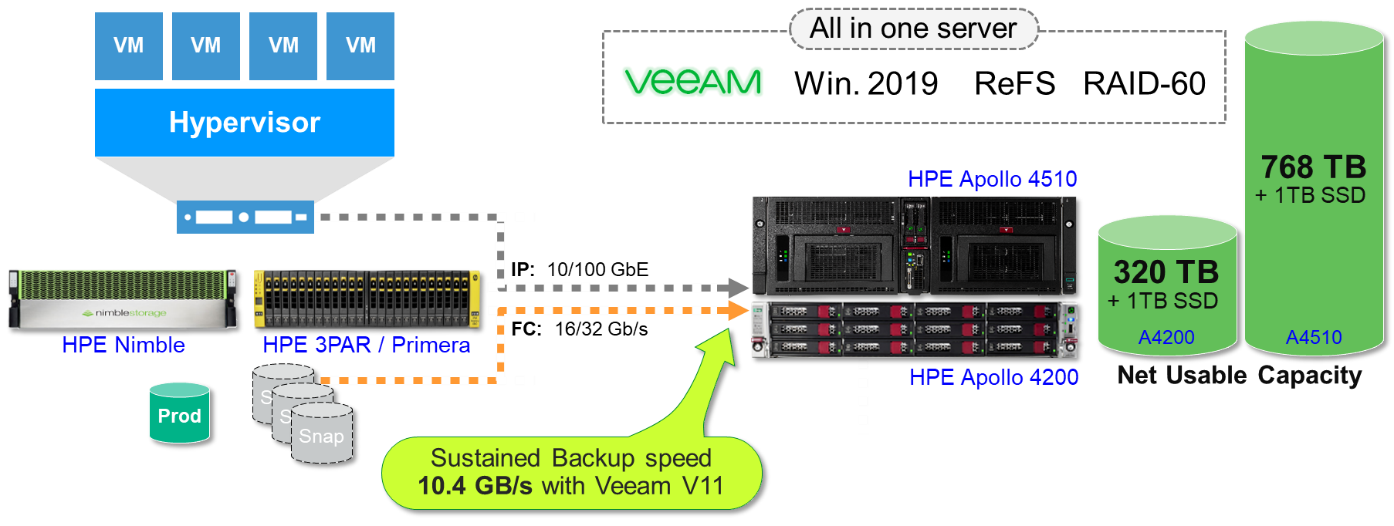

The HPE and Veeam partnership provides comprehensive data protection solutions for hybrid IT. Veeam software integration with HPE primary and secondary storage platforms lets you create a range of solutions for just about every use case. We offer three main architectural approaches for backup target storage: all-in-one, capacity-optimized, and active backup.

Capacity-optimized backup solutions provide fast, reliable data protection and replication in a very small data center footprint. Active backup solutions let you access data to generate new business insights and effectively use backup data for other purposes such as test/dev and analytics.

The all-in-one backup solutions that I focus on in this blog are building blocks that provide balanced compute and storage resources tuned for Veeam v11 workloads. A number of exciting new features improve our HPE Storage and Veeam all-in-one solutions:

- HPE lab tests measured a peak speed of over 44 TB/h, more than twice the fastest speed previously seen in this type of solution.

- The solution is not based on expensive components such as NVMe or SSDs, but on cost-effective, large 16TB HDDs.

- A single unit is RAID 60 protected and scalable up to 768 TB (net usable). Multiple units can be combined for larger capacity and performance.

These solutions are based on legendary HPE ProLiant reliability to support all the main components of the Veeam Data Protection Suite:

- Backup server

- SQL Server database

- Proxy server

- Backup repository - primary backup target storage

An all-in-one backup solution configuration

Test environment

The test environment for the new solution consisted of the following:

- HPE Apollo 4510 Gen10 with 2 x 32Gb FC ports and 2 x 40GbE ports

- 7 ESXi servers on VMware vSphere 6.7, each with a few local disks and a 10GbE network connection

- 2 HPE 3PAR Storage systems and 1 HPE Nimble Storage system in the SAN

Data source

The test data source consisted of 42 VMs, each one with a dataset between 100 GB and 300 GB. To reflect a real production environment, the team created the data with a compression ratio of 2:1. About half of the VMs were on SAN-based volumes across the three storage arrays using the SAN transport mode and the "Backup from Storage Snapshot" integration between Veeam and HPE Storage arrays.

The other VMs were on the internal disks of the 7 ESXi servers. Each ESXi server also had a VM-based Veeam proxy to read data via hot-add, instead of using the slower NBD transport mode.

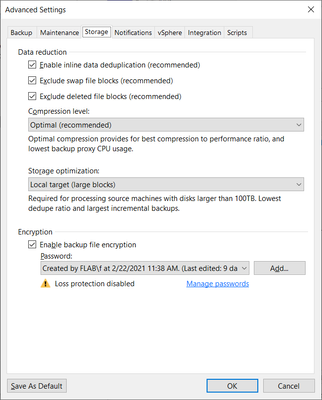

Veeam backup repository

The Veeam backup repository test configuration was based on:

- 1 Scale-Out Backup Repository (SOBR) with 2 extents set to "Use per-machine backup files"

- Each extent was on a dedicated ReFS volume

- Each ReFS volume was based on an independent RAID 60 volume on a dedicated HPE Smart Array RAID controller

The above backup repository configuration proved to be the fastest for backup activities. It is possible to build a slightly different configuration based on a single ReFS volume that does not require the SOBR layer. In that case, the 2 RAID 60 volumes would be grouped together in a Windows dynamic volume in stripe mode. That configuration is about 15% slower for backup activities, but grouping more disks on a single volume might deliver more performance for Instant VM Recovery (IVMR) and granular restore operations. (Note: This alternative configuration is not considered in the present test analysis.)

HPE Apollo system

HPE Apollo 4510 Gen10 system

- Server model: HPE Apollo 4510 Gen10, storage-optimized server

- CPU: 2 x Intel Xeon Gold 6252 (2.1 GHz, 24 cores)

  Note: For production we recommend the newer Intel Xeon-Gold 5220R (2.2GHz/24-core)

- RAM: 192GB (12 x 16GB DIMM)

- Connectivity:

  - FC: 2 x 32Gb/s for backup from HPE 3PAR and HPE Nimble Storage snapshots

  - LAN: 2 x 40GbE (Note: For production environments I would recommend the newer 50/40/10 GbE NIC.)

- RAID disk controllers:

  - 2 x HPE Smart Array P408i-p Gen10 for data volumes

  - HPE Smart Array P408i-a Gen10 for the boot volume and the vPower NFS cache

- Data volumes:

  - 58 x 16TB SAS 12G HDD as 2 RAID 60 arrays, one per controller. Each array is based on two RAID 6 parity groups plus one hot spare: (12+2) x 2 + 1 HS

  - Strip size = 256 KB. ReFS with 64 KB clusters.

  - Total usable capacity = 768TB

- Boot volume:

  - One volume based on 2 x 0.8 TB SATA SSD in RAID 1 (mirrored). Note: For production we recommend 2 HPE 800GB SAS 12G SSDs

  - NTFS boot and OS volume. The capacity is also usable for the Veeam vPower NFS cache.

  - Total usable capacity = 800GB

- OS: Windows Server 2019
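The disk count and usable capacity listed above can be cross-checked with a few lines of arithmetic. The sketch below is only an illustration: the `Raid60Array` class and its field names are hypothetical, and it counts raw decimal terabytes, ignoring any file system or formatting overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Raid60Array:
    """Hypothetical helper describing one RAID 60 array (not an HPE tool)."""
    parity_groups: int            # RAID 6 groups striped together
    data_disks_per_group: int     # the "12" in (12+2)
    parity_disks_per_group: int = 2
    hot_spares: int = 0
    disk_tb: int = 16             # decimal TB per HDD

    @property
    def total_disks(self) -> int:
        per_group = self.data_disks_per_group + self.parity_disks_per_group
        return self.parity_groups * per_group + self.hot_spares

    @property
    def usable_tb(self) -> int:
        # Only data disks contribute capacity; parity and spares do not.
        return self.parity_groups * self.data_disks_per_group * self.disk_tb


# Two identical arrays, one per Smart Array controller: (12+2) x 2 + 1 HS each.
arrays = [Raid60Array(parity_groups=2, data_disks_per_group=12, hot_spares=1)] * 2

total_disks = sum(a.total_disks for a in arrays)
total_usable_tb = sum(a.usable_tb for a in arrays)
print(f"disks: {total_disks}, usable capacity: {total_usable_tb} TB")
```

With the layout from the list, this gives 58 disks and 768 TB, which matches the figures quoted for the test system.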

Backup job execution with screenshots

Screenshot 1 displays the backup job storage parameters. Please note that encryption was active despite the additional CPU workload that it produced.

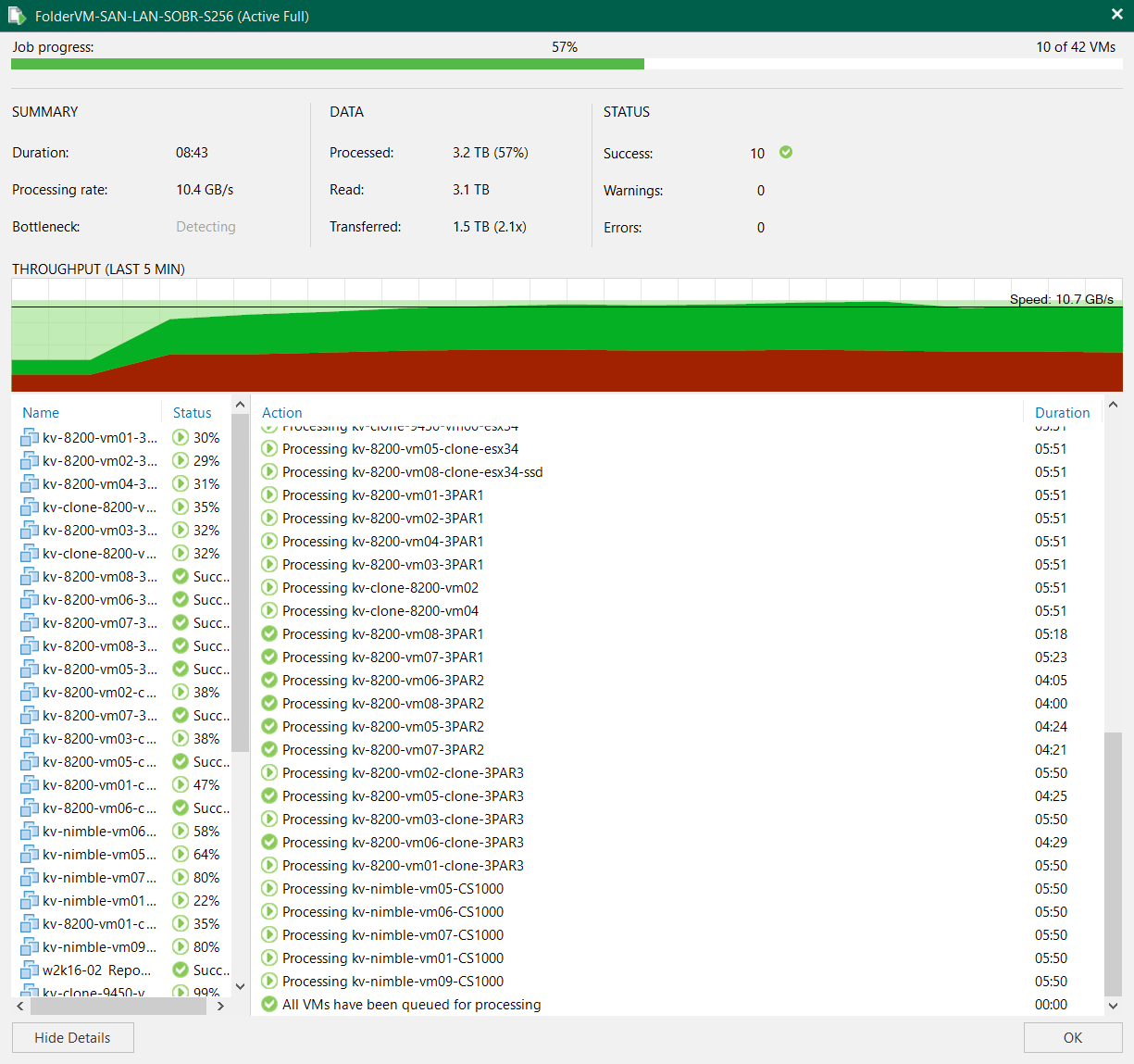

Screenshot 2, taken while the backup was in progress, highlights the average throughput. During the test, full backup throughput averaged 10.4 GB/s over the first half of the job, with a peak of 11.5 GB/s. Note that the 10.4 GB/s average is pulled down by the initial ramp-up. With a larger dataset, that number would have been closer to the peak.

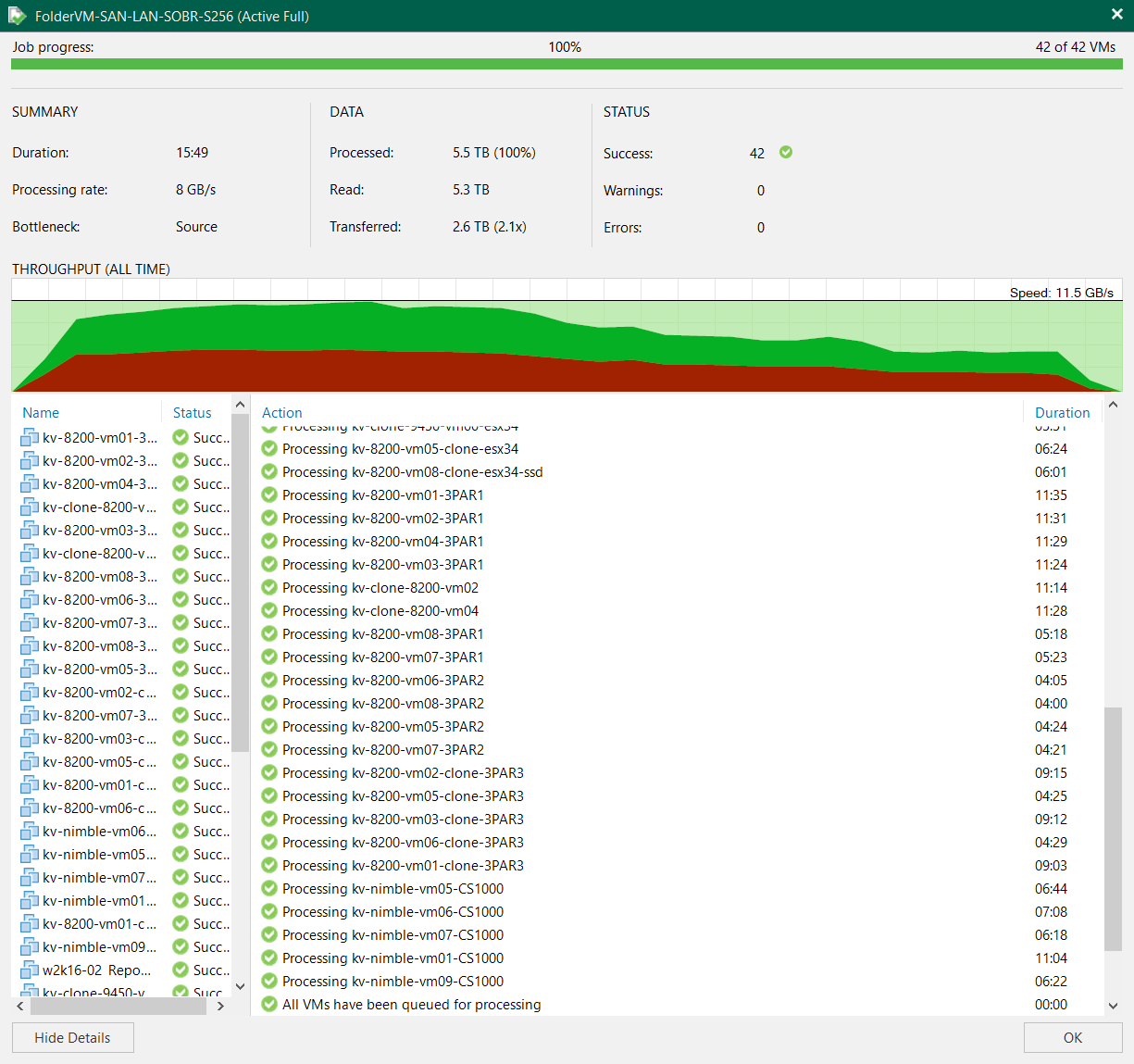

In the second part of the job (screenshot 3), you can see that the throughput progressively decreased as the individual VM processes finished, because the remaining data sources didn’t have enough performance to adequately feed the backup job.

In a real environment, a backup job would have hundreds of VMs to protect and an active pool of about 30 to 50 processes. As soon as one process completed, a new one would automatically be started. This way, throughput in a real environment would remain high until the end of the job.
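The tail effect described above can be illustrated with a toy model. This is a rough sketch, not Veeam's scheduler: the `simulate` function, the per-stream limit of 0.3 GB/s, and the synthetic VM sizes are all assumptions chosen for illustration. Only the 42 VMs, the 100 to 300 GB range, and the 11.5 GB/s ceiling echo the test.

```python
def simulate(vm_sizes_gb, slots, per_stream_gb_s, target_cap_gb_s, dt=1.0):
    """Return aggregate throughput samples (GB/s), one per time step of dt seconds."""
    pending = list(vm_sizes_gb)   # VMs waiting for a free processing slot
    active = []                   # remaining GB for each VM being processed
    samples = []
    while pending or active:
        # As soon as a slot is free, the next VM in the queue starts.
        while pending and len(active) < slots:
            active.append(pending.pop(0))
        # Each stream is limited by its source; the sum is limited by the target.
        rate = min(per_stream_gb_s, target_cap_gb_s / len(active))
        moved = [min(remaining, rate * dt) for remaining in active]
        samples.append(sum(moved) / dt)
        active = [r - m for r, m in zip(active, moved) if r - m > 1e-9]
    return samples


def share_near_cap(samples, cap, threshold=0.9):
    """Fraction of the job duration spent at or above threshold * cap."""
    return sum(1 for s in samples if s >= threshold * cap) / len(samples)


def sizes(n):
    # Synthetic, deterministic VM sizes between 100 GB and 300 GB.
    return [100 + (i * 37) % 201 for i in range(n)]


CAP = 11.5      # GB/s, ceiling of the backup target (peak seen in the test)
STREAM = 0.3    # GB/s, assumed limit of a single VM data source
SLOTS = 42      # concurrent processes

lab_job = simulate(sizes(42), SLOTS, STREAM, CAP)          # no queue to refill from
production_job = simulate(sizes(400), SLOTS, STREAM, CAP)  # slots are refilled

print(f"lab job near cap:        {share_near_cap(lab_job, CAP):.0%} of the run")
print(f"production job near cap: {share_near_cap(production_job, CAP):.0%} of the run")
```

In this model the 42-VM job spends a large part of its run in the tail, because finished streams are not replaced. The 400-VM job stays at the ceiling until the queue is empty.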

Unlike Veeam v10 and previous releases, the v11 release intelligently avoids using the Windows FS buffer cache in RAM. For this reason, the backup throughput of this test does not include any temporary use of a RAM buffer. It is real, steady, and sustainable throughput with data going directly to disks.

System workload during the job execution

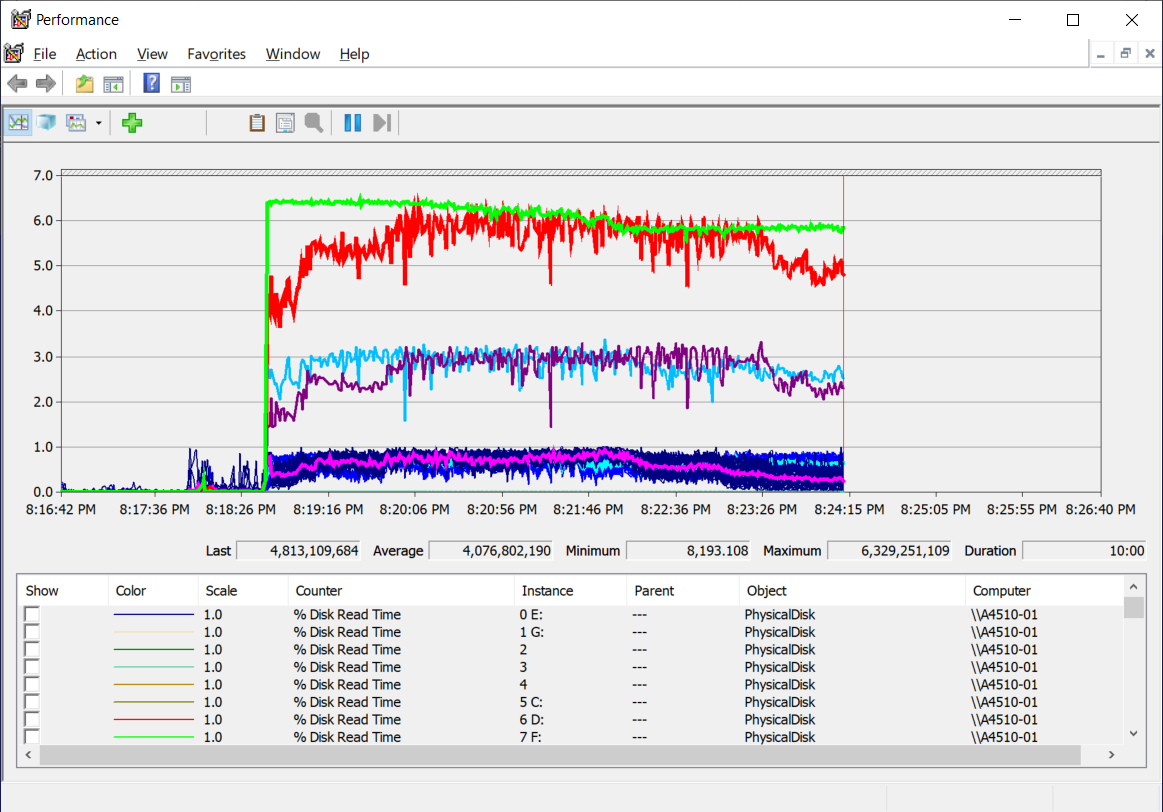

The next series of screenshots demonstrates how the different system components reacted to the Veeam backup workload. The screenshots were taken at roughly the same time as Screenshot 2. Note that the performance monitor uses decimal notation (1 GB = 10^9 bytes), not binary notation (1 GB = 2^30 bytes) as in the Veeam GUI. For this reason, its numbers appear about 7% bigger.
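The difference between the two notations is easy to check. The short sketch below converts the Veeam GUI figures quoted earlier (10.4 and 11.5 GB/s) to decimal units and to TB/h. The function names are illustrative, and it assumes the GUI values are binary, as stated above.

```python
GIB = 2**30   # bytes in one binary "GB", as used by the Veeam GUI
GB = 10**9    # bytes in one decimal GB, as used by the performance monitor
TB = 10**12   # bytes in one decimal TB


def binary_to_decimal_gb_s(gib_per_s: float) -> float:
    """Convert a binary GB/s figure to decimal GB/s."""
    return gib_per_s * GIB / GB


def binary_gb_s_to_tb_h(gib_per_s: float) -> float:
    """Convert a binary GB/s figure to decimal TB per hour."""
    return gib_per_s * GIB * 3600 / TB


inflation = GIB / GB - 1
print(f"decimal figures look {inflation:.1%} bigger")

for label, value in (("average", 10.4), ("peak", 11.5)):
    print(f"{label}: {value} GB/s in the GUI = "
          f"{binary_to_decimal_gb_s(value):.2f} GB/s decimal = "
          f"{binary_gb_s_to_tb_h(value):.1f} TB/h")
```

The peak of 11.5 GB/s in binary units works out to a little over 44 TB/h in decimal units, which is consistent with the headline figure for this solution.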

Screenshot 4 displays the disk and CPU workload.

- The green line indicates physical read from SAN. It shows the backup data that arrived from the storage snapshots across the two FC ports at 32Gb/s. As you can see, the throughput is limited by the maximum throughput of the FC ports. This data arrives from two HPE 3PAR Storage systems and one HPE Nimble Storage system. Note that the test system receives data not only from SAN (green) but also from LAN (Screenshot 5).

- The red line indicates physical write in GB/s. It shows the backup data written to disks after Veeam’s data reduction (compression and deduplication). As visible in Screenshot 2, the data reduction is currently 2:1. Note that this is the total backup data written to disks, coming from both the SAN and LAN channels.

- The purple and azure lines indicate physical write in GB/s to each of the two ReFS volumes.

- The pink and cyan lines indicate average CPU busy time percentage. The single cores are represented by the many overlapping darker blue lines. [Note: Previous test results, not included here, demonstrate that by disabling job encryption, the resulting CPU load is about 20% lower.]

Screenshot 5 shows the physical LAN throughput. As described above, half of the backup data arrives from SAN and half from LAN. The data arriving from LAN is pre-compressed (2:1) by the VM-based proxies. The test system has two 40GbE ports (the newer configs are based on 50/40/10 ports) teamed in LACP mode.

- The red line indicates physical LAN-1 received data

- The pink line indicates physical LAN-2 received data
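A back-of-the-envelope bandwidth budget shows how these channels fit together at peak. The sketch below is an estimate under stated assumptions, not a measurement. It assumes a nominal 3.2 GB/s per 32Gb FC port and direction, an even SAN/LAN split, a uniform 2:1 reduction, and that the 11.5 GB/s GUI peak is a binary figure for source data. The variable names are illustrative.

```python
GIB, GB = 2**30, 10**9

# Assumptions (not taken from the screenshots):
FC_PORT_GB_S = 3.2     # nominal throughput of one 32Gb FC port, per direction
FC_PORTS = 2
LAN_PORT_GBIT_S = 40   # line rate of one 40GbE port
LAN_PORTS = 2
SAN_SHARE = 0.5        # "half of the backup data arrives from SAN"
REDUCTION = 2.0        # 2:1 compression and deduplication

# Peak source-side throughput from the Veeam GUI, converted to decimal GB/s.
peak_source_gb_s = 11.5 * GIB / GB

san_read_gb_s = peak_source_gb_s * SAN_SHARE                    # uncompressed over FC
lan_wire_gb_s = peak_source_gb_s * (1 - SAN_SHARE) / REDUCTION  # pre-compressed by proxies
disk_write_gb_s = peak_source_gb_s / REDUCTION                  # after data reduction

fc_limit_gb_s = FC_PORT_GB_S * FC_PORTS
lan_limit_gb_s = LAN_PORT_GBIT_S * LAN_PORTS / 8                # raw line rate, no overhead

print(f"SAN read:   {san_read_gb_s:.2f} GB/s of {fc_limit_gb_s:.1f} GB/s "
      f"({san_read_gb_s / fc_limit_gb_s:.0%})")
print(f"LAN wire:   {lan_wire_gb_s:.2f} GB/s of {lan_limit_gb_s:.1f} GB/s "
      f"({lan_wire_gb_s / lan_limit_gb_s:.0%})")
print(f"Disk write: {disk_write_gb_s:.2f} GB/s")
```

Under these assumptions, the SAN side runs close to the nominal limit of the two FC ports, while the LAN side uses only a fraction of the teamed 40GbE capacity. This is in line with the observation that the FC ports cap the SAN throughput.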

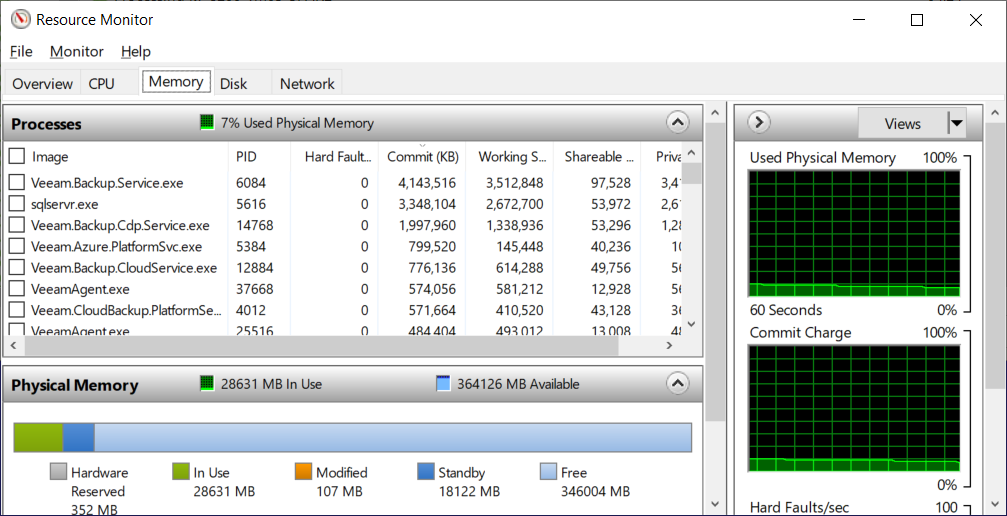

And finally, screenshot 6 captures the system memory (RAM) utilization. Note that the test system has more memory (384 GB) than the recommended configuration (192 GB). As you can see, the new Veeam v11 solution is very efficient when it comes to memory utilization. In comparison, an equivalent screenshot taken during a backup with v10 would have shown a wide orange segment (FS buffer) that consumed almost 50% of the available RAM.

In conclusion

HPE lab tests clearly demonstrate that this HPE Apollo 4510 configuration with Veeam Backup and Replication v11 is extremely fast. The 100% acceleration in performance from just one release upgrade (v10 to v11) is something unusual in the IT industry and very exciting, especially considering that the previous release was already fast.

As usual, big improvements are the result of persistent effort. Credit goes to the Veeam engineering team for the deep optimization they have introduced with their new code, but the teamwork goes back much further. Over the past two years, HPE has been working with Veeam, testing multiple hardware configurations and multiple official and pre-production software releases, including "secret" Veeam-engineering-reserved registry keys, all with the goal of removing bottlenecks and improving efficiency in our combined software and hardware integration.

As these test results prove, the team accomplished their goals.

Learn more about the recent Veeam v11 release in this podcast from HPE Storage and Veeam.

Find more solutions from HPE and Veeam at www.hpe.com/partners/veeam

Storage Experts

Hewlett Packard Enterprise
